Human-AI collaboration means people and computers working as a team. Together, they tackle tough problems neither could solve alone, often in new and faster ways. The World Economic Forum estimates this teamwork could add trillions to the global economy through smarter work and new ideas.

We see it already. A radiologist uses AI to scan images for subtle signs a human eye might miss. A marketing team uses it to brainstorm campaign angles. The big change comes when AI stops being a separate app you open and becomes a natural part of how you work every day.

To see where this is headed, keep reading.

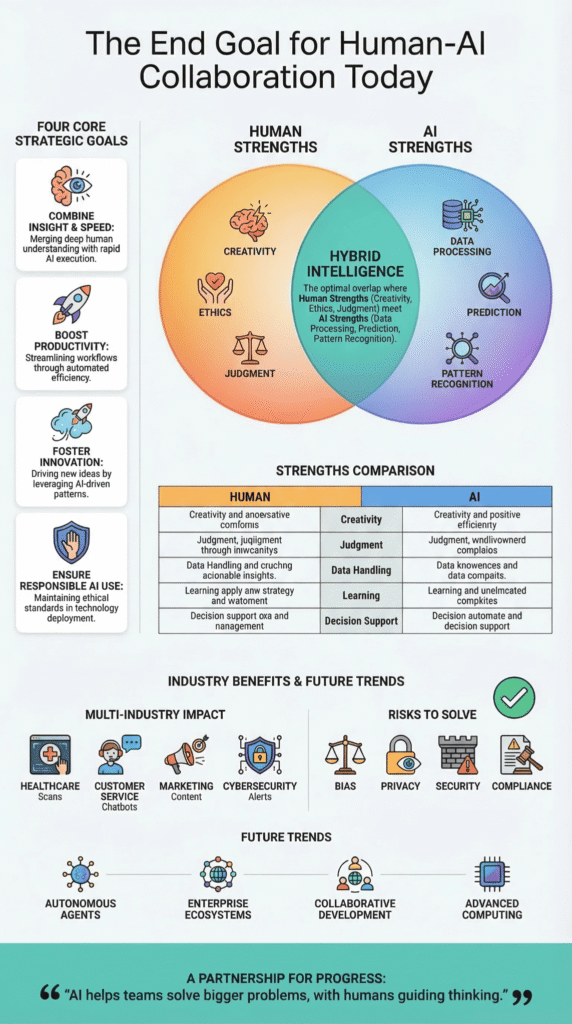

Core Goals Driving Human-AI Collaboration

The partnership is guided by a few clear objectives.

- To combine human insight with machine speed, creating a more capable “hybrid” intelligence.

- To make people more productive and innovative, especially in fields like healthcare and cybersecurity.

- To build this future responsibly, on a foundation of trust, clear rules, and secure technology from the start.

What Is Human-AI Collaboration?

It’s teamwork between people and computers. Humans contribute their judgment, ethics, and creative spark. Computers contribute their ability to calculate, predict outcomes, and spot hidden patterns. By combining these strengths, the partnership can tackle challenges that are too difficult for either side on its own.

Research, including work cited by the Harvard Business Review, finds that teams using AI can see productivity jump by around 40%. This boost is biggest in jobs filled with data and complex analysis.

We see it in our training. A developer uses an AI tool to suggest code, then reviews every line against our security standards. This workflow is becoming common in AI-assisted development, where tools speed up coding while humans still verify the logic and security. That’s the balance: moving fast without cutting corners on safety.

For businesses, the task is to build workflows where AI, people, and data operate in sync. The aim isn’t to swap out employees, but to build a true partnership.

In this partnership, humans usually handle:

- Understanding context and making strategic calls

- Considering ethics and overseeing data privacy

- Being creative, especially in content and product design

AI typically handles:

- Processing huge amounts of data

- Providing automated insights and predictions

- Speeding up routine tasks, such as sorting through alerts

This combined approach is sometimes called “Hybrid Intelligence.” It’s where human and machine strengths make each other better.

Is the Ultimate Goal Symbiosis Between Humans and AI?

The ultimate goal is symbiosis, and it is not a new idea. J.C.R. Licklider described it in 1960 as a continuous partnership in which people and intelligent machines learn from one another.

As noted by The Decision Lab:

“Ultimately, achieving effective human-AI collaboration isn’t just a technical challenge – it’s a behavioral one… Together, behavioral scientists, social scientists, and leaders in AI are already recognizing the need for those who understand humans to shape the continued evolution of AI into a symbiotic, not purely algorithmic, collaboration.”

Current research confirms these collaborations lead to better choices. But there’s a key rule: humans must stay in charge. They interpret the AI’s output and direct its work.

Look at a common example. An AI analyzes massive customer datasets to identify a pattern. A manager then uses that insight to build a new plan. Their capabilities are distinct, yet complementary.

- People contribute: strategic direction, moral reasoning, and novel ideas.

- AI contributes: finding obscure correlations in data, accelerating analysis, and processing at an immense scale.

You get quicker, more dependable outcomes. When the partnership works, the quality of decisions gets better. This often boosts customer satisfaction and streamlines how a business operates.

What Practical Outcomes Does Human-AI Collaboration Aim to Achieve?

The partnership aims for concrete, better results: higher productivity, fresh innovation, and significant economic growth. Analysts at PricewaterhouseCoopers (PwC) estimate AI could contribute over $15 trillion to the global economy by 2030.

You can see these benefits across many industries. Take healthcare. An AI can analyze medical scans to help doctors identify issues faster. Yet, the doctor remains the one who makes the final call on a diagnosis and communicates it to the patient.

In business, collaborative AI helps people make decisions. It uses predictions, automation, and real-time data to speed things up.

Here are some specific examples from different industries:

- Healthcare
  - AI reviewing scans and patient records.
  - Faster disease detection using machine learning.

- Customer Experience
  - AI chatbots handling thousands of customer questions.
  - Service platforms personalizing interactions for each person.

- Marketing and Media
  - Generative AI writing drafts for ads and articles.
  - Data analysis showing what content people like.

- Cybersecurity
  - Systems that automatically sort through security alerts.
  - Human teams that then investigate those results using proper procedures.
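The cybersecurity example above, where a system sorts alerts and people investigate, can be sketched in a few lines. This is a minimal illustration, not a production design: the `Alert` class, the `model_score` field, and the 0.3 threshold are all hypothetical, and the scores stand in for whatever an AI model would produce.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    description: str
    model_score: float  # hypothetical AI risk score between 0 and 1


def triage(alerts, review_threshold=0.3):
    """Split alerts into a human review queue and a low-priority log."""
    needs_review = [a for a in alerts if a.model_score >= review_threshold]
    low_priority = [a for a in alerts if a.model_score < review_threshold]
    # Put the highest-risk alerts first so analysts see them early.
    needs_review.sort(key=lambda a: a.model_score, reverse=True)
    return needs_review, low_priority


alerts = [
    Alert("A-1", "Repeated failed logins", 0.82),
    Alert("A-2", "Routine software update", 0.05),
    Alert("A-3", "Unusual outbound traffic", 0.47),
]
queue, log = triage(alerts)
print([a.alert_id for a in queue])  # ['A-1', 'A-3']
print([a.alert_id for a in log])    # ['A-2']
```

The AI only orders and filters the work. Nothing is closed or escalated without a person looking at the review queue, and the low-priority log is kept so analysts can still audit what the model set aside.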

In our own work training developers, we stress secure coding from the start. When you build AI features on a solid, secure foundation, you can scale up without creating new security risks.

How Do Humans and AI Complement Each Other?

People and AI are good at different things. Humans are great with gut feelings, ethics, and creative thinking. AI is fantastic at handling huge amounts of data, finding patterns, and working fast.

A Harvard Business Review article noted that teams mixing humans and AI solved some analytical problems twice as fast as humans alone. As workplaces experiment with next-generation AI coding tools, developers increasingly pair machine speed with human judgment to complete complex technical work faster.

The difference comes down to two simple facts.

- Humans understand the “why” and the bigger picture.

- AI crunches numbers and finds connections in an instant.

Put them together, and you get a powerful team.

The table below shows how people and AI naturally complement each other.

| Core Strength | Humans | AI Systems |
| --- | --- | --- |
| Creativity | Inventing new ideas and stories. | Mixing and matching patterns from existing data. |
| Judgment | Making calls based on ethics and context. | Running the numbers to get probabilities and scores. |
| Handling Data | Working with a manageable amount of information. | Crunching huge datasets in an instant. |
| Learning | Gaining wisdom from life and reflection. | Improving through automated training loops. |
| Decision Support | Setting the strategic direction. | Providing predictive models and data insights. |

This combination is why working together beats working alone. The most successful companies we see use AI to make their people more capable, not to replace them.

What Risks Must Be Solved Before Human-AI Collaboration Reaches Its Goal?


Several big problems need fixing first. The World Economic Forum’s Future of Jobs Report 2025 lists skill gaps, cited by 63% of employers, among the top hurdles, along with barriers to adopting technologies such as AI.

Based on our experience, moving quickly without a secure base creates hidden issues that are expensive to repair later. The main risks are:

- Algorithmic Bias: An AI will mirror and even exaggerate the biases in its training data if it’s not regularly audited.

- Data Privacy: Using personal or medical data means following strict rules, like the EU’s GDPR.

- Security Vulnerabilities: AI systems open up new ways for attacks, so cybersecurity must be built in from the start.

- Regulatory Compliance: New laws, such as the EU AI Act, force companies to be transparent about how their AI works and who is responsible for it.

Managing these risks needs strong governance and clear rules for building and using AI. Teams also need to think carefully about the ethical implications of AI code generation, especially when automated systems influence software behavior or security decisions. It also means training people to know when to rely on the AI’s advice and when to use their own judgment.
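As one illustration of what a regular bias audit might check, the sketch below compares how often a model gives a positive decision to each group, a measure often called the demographic parity gap. The sample records, the group labels, and the 0.2 tolerance are invented for the example. A real audit would use more than one metric and real outcome data.

```python
from collections import defaultdict


def positive_rates(records):
    """Return the share of positive model decisions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        if decision:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest positive rate."""
    rates = positive_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical (group, model_decision) pairs.
records = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

gap = parity_gap(records)
TOLERANCE = 0.2  # assumed threshold; set by policy, not by the code
needs_human_review = gap > TOLERANCE
print(positive_rates(records))  # {'group_a': 0.75, 'group_b': 0.25}
print(gap, needs_human_review)  # 0.5 True
```

A large gap does not prove the model is unfair. It flags the result for a person to investigate, which matches the division of labor described above.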

What Could the Future of Human-AI Collaboration Look Like?

Credits: TED

In the future, AI will probably be a steady partner in our everyday work. It will be woven into the tools we use, assisting with decisions, sparking ideas, and unpacking complicated information.

As highlighted by The World Economic Forum:

“The future of human-AI collaboration depends on our ability to measure and refine skills. Organizations that prioritize these efforts will foster innovation, empower their workforces, and thrive in a world of constant change.”

We already have early examples. Systems called autonomous AI agents can now manage multi-step tasks, automating entire chunks of a workflow.

From our own tests in building agents, the biggest boost happens when the AI acts as an assistant. It does the heavy lifting of analysis in the background, but a person stays in charge, guiding the process and making the final judgment.
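The pattern of an agent doing the work while a person stays in charge can be sketched as an approval gate in front of each step. Everything here is hypothetical: the step names, the `run_with_approval` helper, and the `reviewer` function, which stands in for a real person clicking approve or reject.

```python
def run_with_approval(steps, approve):
    """Run agent steps in order, stopping at the first one a human rejects."""
    completed = []
    for name, action in steps:
        if not approve(name):
            break  # the human has the final say; nothing further runs
        completed.append((name, action()))
    return completed


# Hypothetical multi-step task an agent might plan.
steps = [
    ("summarize tickets", lambda: "summary ready"),
    ("draft customer reply", lambda: "draft ready"),
    ("send reply", lambda: "sent"),
]


def reviewer(step_name):
    """Stand-in for a human decision: allow analysis, block sending."""
    return step_name != "send reply"


results = run_with_approval(steps, reviewer)
print(results)
# [('summarize tickets', 'summary ready'), ('draft customer reply', 'draft ready')]
```

The agent handles analysis and drafting in the background, but the step with real-world consequences does not run until a person approves it.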

A few key trends are pushing this forward:

- Autonomous AI agents
  - Tools from companies like OpenAI and Anthropic are showing how AI can execute a series of steps on its own.

- Enterprise AI ecosystems
  - Business platforms are starting to weave AI insights directly into their core systems, like customer service software.

- Collaborative development
  - New coding practices let developers work with AI to prototype ideas much faster.

- Advanced computation
  - Future tech, like quantum computing, could give AI dramatically more power to solve complex problems.

Research supports this direction. Studies, including ones cited by Harvard Business Review, show that human-AI teams make better decisions when the people involved understand how the AI works. Companies that invest in this kind of collaborative training see their teams use AI more effectively and get more done.

The end goal isn’t machines taking over jobs. It’s about creating a work environment where AI handles the scale and speed, freeing up people to focus on what they do best: creative thinking, understanding emotions, and setting strategy.

FAQ

What does human-AI collaboration mean for everyday work?

Human-AI collaboration means people and artificial intelligence working together to complete tasks and solve problems. AI systems use machine learning models and deep learning to analyze data quickly. Humans guide decisions, set goals, and review results.

This Hybrid Intelligence helps teams manage knowledge work, support content creation platforms, and automate repetitive tasks. A strong human-centered approach and better AI literacy help organizations reach goals and improve workplace productivity.

Can AI systems help doctors make better medical diagnosis decisions?

AI tools can support doctors in medical diagnosis by analyzing medical imaging and electronic medical records. Machine learning models study patterns in large datasets and provide predictive analytics that highlight possible health issues.

Doctors review the results and make the final medical decision. In human-AI collaboration, these systems provide real-time assistance while healthcare teams maintain data privacy and perform bias audits to reduce algorithmic bias.

How do AI tools improve customer experience and workplace productivity?

AI tools help businesses improve customer experience and workplace productivity by handling routine tasks. AI-powered chatbots answer questions, organize support tickets, and give real-time assistance.

Collaborative AI also supports enterprise workflows by offering content suggestions and helping teams manage digital campaigns and product launches. When employees spend less time on repetitive work, they can focus on creative tasks that improve customer satisfaction and Net Promoter Scores.

Why do rules and ethics matter in human-AI collaboration?

Rules and ethics guide responsible human-AI collaboration. Organizations must protect data privacy, follow regulatory standards, and monitor cybersecurity procedures to reduce security risks.

Teams also evaluate machine learning models for algorithmic bias through bias audits. Regulations such as the EU AI Act encourage responsible AI development. Training programs also build AI literacy so employees understand AI capabilities and apply a human-centered approach in daily work.

What future changes may happen as AI capabilities grow?

The future of human-AI collaboration focuses on stronger Hybrid Intelligence, where people and AI systems work together more effectively. Generative AI, AI-driven language processing, and multi-modal AI systems will support content creation, enterprise workflows, and global teamwork.

Predictive analytics and emerging technologies such as quantum computing may help organizations solve complex problems and improve workplace productivity while contributing to long-term global GDP growth.

Where Human-AI Collaboration Is Ultimately Heading

AI will not replace people. It will change how people work. Like any tool, it helps those who learn how to use it well. When humans guide the thinking, and AI helps with speed and scale, teams solve bigger problems. That is the real promise of human-AI collaboration.

But strong tools need strong skills. If you want to build AI systems the right way, start by learning how to write safer code. You can join the Secure Coding Practices Bootcamp and practice real-world secure development. The future will reward builders who learn early.
