A new EU law for software is coming. The Cyber Resilience Act (CRA) requires secure-by-design products, active patching, and incident reporting. Reporting obligations begin in September 2026, and full compliance is required by December 11, 2027.

The law applies to nearly any product with digital elements, including apps, IoT devices, and the remote data processing those products depend on. Penalties can reach €15 million or 2.5% of a firm’s global annual revenue, whichever is higher.

For development teams, this translates to concrete technical and procedural changes. The dates are firm. Continue reading for a breakdown of the key requirements.

Cyber Resilience Act Essentials for Developers

Software teams working in or selling to the EU are about to face a new set of security expectations.

- The EU Cyber Resilience Act makes developers legally responsible for secure-by-design software and vulnerability handling across the full product lifecycle.

- Incident reporting to the European Union Agency for Cybersecurity (ENISA) begins in September 2026, with full CRA requirements applying from December 11, 2027.

- Early adoption of secure coding practices, SBOM automation, and structured vulnerability management reduces regulatory and operational risk.

What Is the Cyber Resilience Act and Why Does It Matter?

The EU’s new Cyber Resilience Act is a major cybersecurity law. It sets mandatory security rules for most hardware and software sold in Europe. Enforcement is phased: reporting duties start in 2026, and the full requirements apply in 2027.

It targets makers of “products with digital elements.” That covers nearly everything, from phone apps and smart home gadgets to the software inside them. We’re talking about roughly 90% of all digital products on the market.

This law is different from others like NIS2. While NIS2 focuses on how companies are run, the CRA digs into the product itself. For us as developers, that’s a big shift. It means the security of our code is now a legal requirement, not just a best practice.

As explained in a DevOps analysis of the CRA’s impact on development,

“The CRA fundamentally redefines how software will be built and maintained, pushing organizations to adopt more structured, transparent, and security-centered development strategies. And if you’re like most commercial software developers who incorporate open source components, you’ll need to account for your dependencies.” – DevOps.com

The core obligations are clear: build security in from the start, have a plan to find and fix flaws, report serious incidents, and keep detailed technical records. You must also get a CE mark before selling your product in the EU. Get it wrong, and the fines are severe, up to €15 million or 2.5% of your company’s global revenue.

In short, security is now part of your product’s legal foundation. It’s not optional.

Does the CRA Apply to Your Software or SaaS Product?


If you sell software or connected hardware in the European Union, the CRA almost certainly applies to you. The rules are broad, and understanding how the EU cyber resilience act affects different product types helps teams determine whether their software falls within the law’s scope.

Pure SaaS is generally out of scope, but a cloud service that acts as remote data processing for a product with digital elements is in scope. Official EU guidance supports this view.

Here’s a simple way to check:

- Do you sell or distribute it in the EU?

- Do you make money from it?

- Does it connect to the internet or handle data remotely?

- Is it part of a larger digital product?

This includes commercial open-source software. Major foundations have warned that if you profit from an open-source project, you likely have obligations.

Our advice? If you build apps, IoT devices, backend services, or embedded software for the EU market, assume the CRA applies. Starting your compliance work now is much easier than facing an audit later.
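The checklist above can be turned into a rough triage helper. The sketch below is not a legal test: the field names and the rule for combining the answers (sold in the EU and commercial, plus at least one of the other two) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    """Answers to the four screening questions (hypothetical field names)."""
    offered_in_eu: bool
    commercial: bool
    connects_or_processes_remotely: bool
    part_of_larger_digital_product: bool


def likely_in_scope(product: Product) -> bool:
    """Rough triage only. True means 'plan for the CRA and get a proper review'."""
    # Assumption: EU distribution and commercial activity are both prerequisites.
    if not (product.offered_in_eu and product.commercial):
        return False
    # Assumption: either remaining question is enough to flag the product.
    return (
        product.connects_or_processes_remotely
        or product.part_of_larger_digital_product
    )


smart_plug_app = Product(
    offered_in_eu=True,
    commercial=True,
    connects_or_processes_remotely=True,
    part_of_larger_digital_product=True,
)
print(likely_in_scope(smart_plug_app))
```

A result of False here does not prove a product is exempt. It only means the quick screen did not flag it.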

What Developers Must Actually Do

Credits: The Linux Foundation

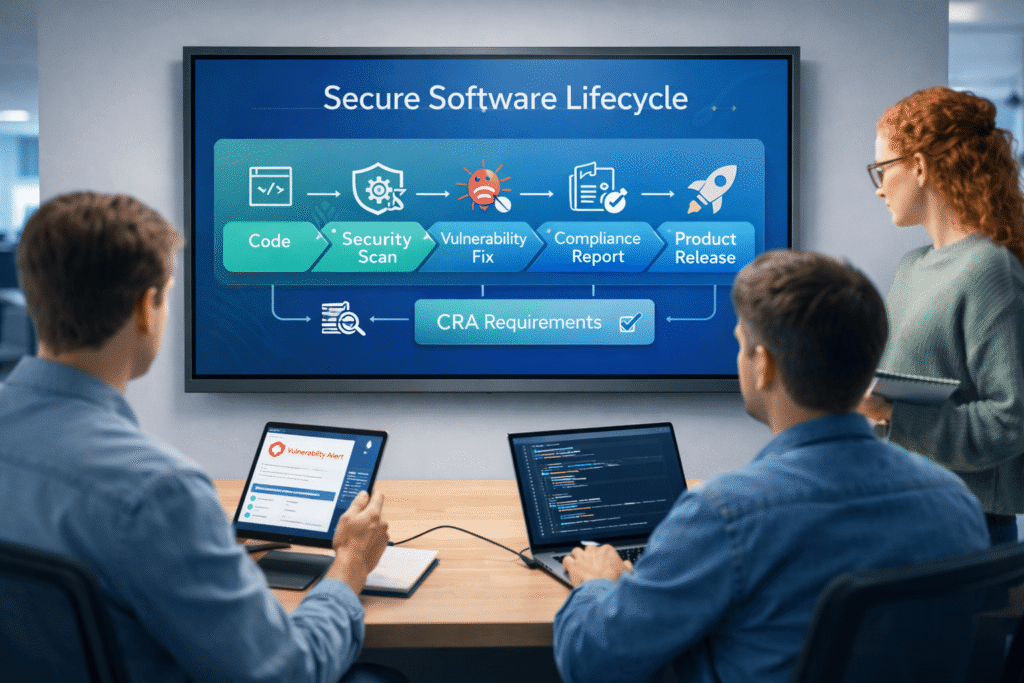

The law sets four main jobs for developers. Build security in from the start. Find and fix security holes. Keep a list of all software parts (an SBOM). Report serious incidents to ENISA within 24 hours, starting in 2026.

Building Security In From the Start

“Security by design” means thinking about risks and reducing threats while you build the product, not after.

The legal language is explicit. According to Prime Security,

“Products with digital elements shall be designed, developed, and produced in such a way that they ensure an appropriate level of cybersecurity based on the risks.” – Prime Security

In our training, we see teams struggle with this. The practical way to start is with secure coding. When we add mandatory code reviews, lock down access controls, set secure defaults, and only collect necessary data early on, we cut future problems. It’s much harder to add this later.

Official EU cybersecurity guidance says you must reduce “attack surfaces” and never use default passwords. For us, that translates to a few key actions during development:

- Doing threat modeling when planning features.

- Designing systems so users only have the access they absolutely need.

- Using techniques that make it harder for attacks to succeed.

- Writing down how you built security in before you release.
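Some of these actions can be enforced automatically. As a minimal sketch, the check below scans a product's shipped default configuration for a preset password, extra services, and an over-privileged default role. The config keys, the allowed-service list, and the role name are all hypothetical and would need to match your own product.

```python
# Assumption: only these services may be enabled out of the box.
ALLOWED_SERVICES = {"https"}
# Assumption: the least-privileged role in this product is called "read_only".
LEAST_PRIVILEGED_ROLE = "read_only"


def find_insecure_defaults(config: dict) -> list[str]:
    """Return human-readable problems found in a shipped default config."""
    problems = []

    # No default passwords: None means the user must set one on first use.
    if config.get("admin_password") is not None:
        problems.append("ships with a preset admin password")

    # Smaller attack surface: flag anything enabled beyond the allowlist.
    extra = sorted(set(config.get("enabled_services", [])) - ALLOWED_SERVICES)
    if extra:
        problems.append("services enabled by default: " + ", ".join(extra))

    # Least privilege: new users should start with the minimal role.
    if config.get("default_role", LEAST_PRIVILEGED_ROLE) != LEAST_PRIVILEGED_ROLE:
        problems.append("default role is not the least-privileged role")

    return problems


shipped = {
    "admin_password": "admin",
    "enabled_services": ["https", "telnet"],
    "default_role": "admin",
}
for problem in find_insecure_defaults(shipped):
    print(problem)
```

Running a check like this in code review or CI also leaves a record that the defaults were examined before release.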

Handling Vulnerabilities and Updates

You have to keep managing security flaws for the entire life of your product.

You must provide security updates for a set support period. For many products, that’s understood to be at least five years, unless the product is expected to be in use for a shorter time. If a severe incident happens, you must report it to ENISA within 24 hours, with more details in 72 hours. This info comes straight from the EU’s own site on the law.

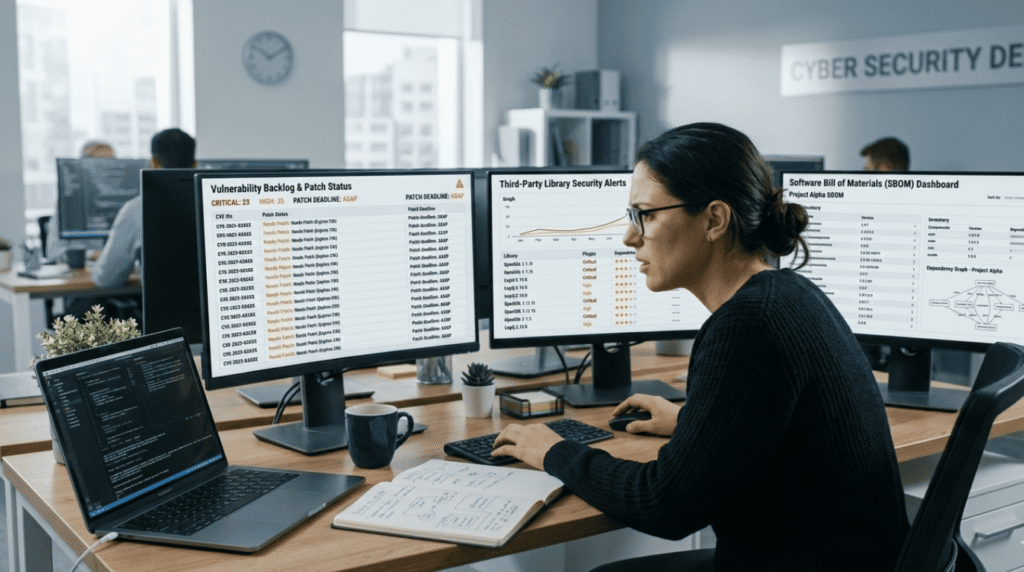

This changes everything for dev teams. You can’t just fix bugs when you feel like it. You need a clear, documented process for finding, disclosing, and patching vulnerabilities. Teams that fully understand why the cyber resilience act matters tend to prioritize structured vulnerability handling earlier in their development cycle.

You also need a team ready to respond to security incidents.
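The 24-hour and 72-hour windows are easy to miscount under pressure, so it can help to compute them the moment an incident is logged. This is a minimal sketch, assuming both windows run from the time your team became aware of the incident. The function and key names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

EARLY_WARNING_WINDOW = timedelta(hours=24)
DETAILS_WINDOW = timedelta(hours=72)


def reporting_deadlines(became_aware_at: datetime) -> dict[str, datetime]:
    """Return the due times for the early warning and the detailed follow-up."""
    if became_aware_at.tzinfo is None:
        # Naive timestamps are ambiguous across offices and time zones.
        raise ValueError("use a timezone-aware timestamp")
    return {
        "early_warning_due": became_aware_at + EARLY_WARNING_WINDOW,
        "details_due": became_aware_at + DETAILS_WINDOW,
    }


aware = datetime(2026, 9, 14, 8, 30, tzinfo=timezone.utc)
for label, due in reporting_deadlines(aware).items():
    print(label, due.isoformat())
```

Storing the awareness time in UTC keeps the deadline unambiguous for distributed teams.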

The Mandatory Software List (SBOM) and Paperwork

You must create a Software Bill of Materials (SBOM) to get your CE mark.

Groups like the Open Source Security Foundation push for formats like CycloneDX to track open-source parts. Your SBOM must list every component, any known risks, and your plan for updates.
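To give a feel for the format, here is a minimal sketch of a CycloneDX-style JSON document built from a list of dependencies. It fills in only a handful of top-level fields and has not been validated against the CycloneDX schema. The helper name and the example packages are made up, and real tooling records far more detail, such as package URLs, hashes, and licenses.

```python
import json


def build_sbom(components: list[tuple[str, str]]) -> dict:
    """Return a minimal CycloneDX-style document for (name, version) pairs."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "components": [
            {"type": "library", "name": name, "version": version}
            # Sorting keeps the output stable between builds.
            for name, version in sorted(components)
        ],
    }


sbom = build_sbom([("requests", "2.32.3"), ("attrs", "24.2.0")])
print(json.dumps(sbom, indent=2))
```

In practice you would generate this with an established SBOM tool rather than by hand. The sketch only shows the shape of the data.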

Here’s what you need to do and when:

| What You Need | What You Have to Do | When It’s Due |
| --- | --- | --- |
| Security by Design | Do threat modeling, write secure code, and remove default passwords. | Before release. |
| Vulnerability Handling | Have a process to find, tell users about, and fix flaws. | Continuously. |
| SBOM | Document every software part and its risks. | When you get your CE mark. |
| Incident Reporting | Send an early warning to ENISA within 24 hours. | Starting September 2026. |

Your technical documents are now legal proof. They’re not just for your team anymore.

How the Rules Work in the Real World

For most software, you check your own work. But for higher-risk products, meaning the “important” categories in the law’s Annex III and the “critical” ones in Annex IV, a third-party assessor may have to be involved. Either way, you end up with an official EU Declaration of Conformity and the right to put a CE mark on your product.

Here’s the basic breakdown:

| Product Type | How It’s Checked | Result |
| --- | --- | --- |
| Standard product | You assess it yourself. | You can use the CE mark. |
| Important or critical product (Annex III or IV) | An outside auditor checks it in most cases. | You can use the CE mark. |
| Non-compliant | Authorities take action. | Fines or a sales ban. |

EU market watchdogs in any member country can ask for your paperwork anytime. If you handle a serious incident poorly, they can suspend your CE marking.

What does this mean for your team? You must keep specific records ready:

- Documents showing you designed with risks in mind.

- Logs of every security incident you report.

- Records of how you found and fixed vulnerabilities.

- Proof of your secure development process.

We’ve seen teams get this wrong. They think they can add the paperwork later. Trying to create all this evidence for old, messy code is a huge, expensive headache. It’s far cheaper to build the process as you go.

What’s the Hardest Part for Developers?

Talking to teams, we hear the same three struggles: creating the software list (SBOM), promising five years of updates, and hitting the 24-hour incident report clock.

In the open-source world, there’s real fear about who gets blamed when a free project is used in a paid product. Groups like the Linux Foundation have pointed out the strain of new audits and paperwork.

The common pain points are clear:

- Building an SBOM for a massive, tangled codebase.

- Writing security docs for software that’s years old.

- Dropping everything to report an incident in a single day.

- Finding the time and people to do all this, especially for small teams.

It’s tricky for open source. If a company takes a free project and sells it, that company now has legal duties, even if the original creators were volunteers.

But it’s not all doom. The rules aren’t the same for every product. Truly critical systems face the toughest checks. For lower-risk apps, a well-organized self-check might be enough.

Yes, following the law adds work. But a major data breach or getting kicked out of the EU market is far worse.

Getting Ready Before the 2026 Deadline

You should focus on four things now: bake security into your code, automate your software list, set up a vulnerability process, and use existing security standards.

A step-by-step plan works best.

First, lock down your development process. Start adding threat modeling to your regular planning. Make secure coding practices part of mandatory code reviews, not optional. Invest in training. Good programs, like those from LF Education, teach the practical IT and engineering security skills that match standards like ISO 27001.

Second, let machines handle the list. Don’t create your SBOM by hand. Set it up to generate automatically every time you build your software. Use tools to track every piece of code, especially in open-source projects on GitHub.
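One way to keep the list honest is a build step that fails when the SBOM has drifted from what is actually installed. The sketch below assumes a Python project and an SBOM with a CycloneDX-style `components` array. The function names are hypothetical, and other ecosystems would read their own lockfiles instead.

```python
from importlib.metadata import distributions


def installed_packages() -> set[tuple[str, str]]:
    """(name, version) for every distribution visible to this interpreter."""
    found = set()
    for dist in distributions():
        name = dist.metadata.get("Name")
        if name:  # skip entries with broken metadata
            found.add((name.lower(), dist.version))
    return found


def missing_from_sbom(
    installed: set[tuple[str, str]], sbom: dict
) -> set[tuple[str, str]]:
    """Return installed packages that the SBOM does not list."""
    listed = {
        (component["name"].lower(), component["version"])
        for component in sbom.get("components", [])
    }
    return installed - listed


example_sbom = {"components": [{"name": "requests", "version": "2.32.3"}]}
example_installed = {("requests", "2.32.3"), ("urllib3", "2.2.2")}
print(missing_from_sbom(example_installed, example_sbom))
```

In a pipeline, you would call `missing_from_sbom(installed_packages(), sbom)` and fail the build if the result is not empty.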

Third, prepare for the worst. Write down exactly what to do when a flaw is found. Who gets told? Who fixes it? Designate a security response team. Have the template for reporting to ENISA ready to fill out now, not during a crisis.
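A report template can live in the codebase as a small data structure, so gaps are visible before a crisis. The fields below are illustrative guesses at what a team would want on hand. They are not the official ENISA reporting form.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class IncidentReportDraft:
    """Hypothetical pre-filled draft; not the official reporting form."""
    product_name: Optional[str] = None
    affected_versions: Optional[str] = None
    summary: Optional[str] = None
    security_contact: Optional[str] = None  # who gets told
    fix_owner: Optional[str] = None         # who fixes it

    def missing_fields(self) -> list[str]:
        """Names of fields that are still empty, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


draft = IncidentReportDraft(
    product_name="Thermostat X",
    security_contact="psirt@example.com",
)
print(draft.missing_fields())
```

Filling in the stable fields ahead of time, such as the product name and contacts, leaves only the incident-specific details to write when the clock is running.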

Research from places like Harvard Business Review shows that getting ahead of risk like this makes your whole operation more stable.

Starting now stops the 2026 panic. More importantly, it just makes your product better and safer today.

FAQ

What does the Cyber Resilience Act mean for developers?

The Cyber Resilience Act from the European Union sets clear cybersecurity requirements for products with digital elements. These include digital products, IoT devices, non-embedded software, and SaaS as part of products with digital elements (e.g., remote data processing).

Software developers must follow CRA requirements such as secure-by-design development, risk assessments, vulnerability management, and regular security updates throughout the full development and product lifecycle.

Do open source projects have to follow the EU Cyber Resilience Act rules?

The EU Cyber Resilience Act applies differently to open source software depending on how it is used. Open source projects shared for free without commercial activity may not face the same cybersecurity obligations.

However, products containing them, such as a hardware product or connected products, must meet cybersecurity compliance. Teams must strengthen vulnerability handling processes and follow proper Vulnerability Reporting practices.

What steps help meet CRA cybersecurity requirements?

Developing secure software under the CRA calls for structured security requirements and documented processes. Teams should apply secure-by-design practices, risk-based design, secure configuration, data minimization, and access control from the start.

Developers must prepare a Software Bill of Materials, manage vulnerability handling, address severe incidents, and ensure incident reporting. Strong information security controls help reduce cyber threats and data breaches.

How do I complete a conformity assessment for digital products?

To sell a product with digital elements in the European market, developers must complete a conformity assessment procedure. Certain categories, such as the important products in Annex III (Class I and Class II) and the critical products in Annex IV, may require review by a notified body.

After meeting Annex I cybersecurity requirements, companies must issue an EU Declaration of Conformity and apply the CE mark before market placement.

How does the CRA connect to other EU legislation?

The Cyber Resilience Act works alongside other EU legislation, such as the NIS2 Directive. Guidance from the European Union Agency for Cybersecurity supports consistent cybersecurity standards across Member States. Market surveillance authorities monitor compliance and CE marking.

The Blue Guide provides direction on regulatory compliance. Together, these frameworks strengthen cybersecurity compliance and protect the European market from growing cybersecurity threats.

Preparing for the Cyber Resilience Act Era

The Cyber Resilience Act is pushing teams to build safer code. That change does not start with tools. It starts with people who know what secure code looks like. Small habits matter: checking inputs, guarding data, and fixing weak spots early. When developers learn these skills, software stops being fragile and starts being trusted.

If you want hands-on practice, you can join the Secure Coding Practices Bootcamp. It is two days of real coding labs, simple lessons, and clear examples. No heavy security talk, just practical steps developers use every day. Learn the basics, practice them, then ship code that stands up to the real world.

References

- https://devops.com/the-eus-cyber-resilience-act-redefining-secure-software-development/

- https://www.primesec.ai/resources/eu-cyber-resilience-act-cra-the-complete-guide-to-security-by-design-compliance