AI-generated code will break, leak, or fail in production if no human ever checks it first. Not because AI is useless, but because it’s doing something narrower than we wish it did.

It’s a powerful autocomplete, not a teammate that understands your system, your users, or your risk tolerance. It predicts tokens, it doesn’t reason about edge cases, security, or long-term maintenance. The result is often impressive-looking code that hides quiet, dangerous flaws.

This isn’t about panic or purity tests. It’s about using AI with clear eyes, and knowing where your judgment has to step in, so keep reading.

Key Takeaways

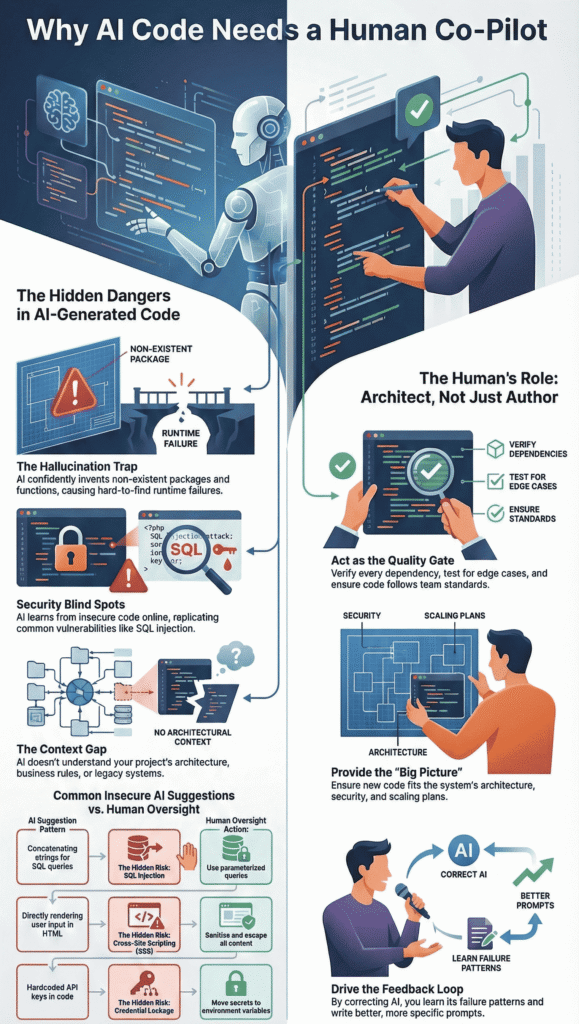

- AI frequently invents non-existent packages and functions, leading to hidden runtime failures.

- It replicates insecure patterns from its training data, creating glaring security blind spots.

- AI lacks project context and architectural judgment, requiring human review for logic and integration.

The Hallucination Trap: When AI Invents Reality

AI models don’t comprehend code. They predict the next most probable character or token.

This statistical parlor trick leads to “hallucinations,” where the tool confidently suggests libraries, functions, or entire API calls that are pure fiction.

In fact, research shows this isn’t hypothetical: “AI-generated code often isn’t secure, and the risk is likely already in your stack,” with 45 % of outputs introducing security flaws despite appearing correct at first glance [1].

This kind of overconfident output is common in vibe-driven coding patterns, where code feels correct at a glance but lacks grounding in real implementations.

The code looks syntactically perfect, it passes a linter’s basic checks, but it’s built on a foundation of nothing. You won’t find the error until runtime.

- Fabricated Packages: Studies suggest models hallucinate non-existent open-source packages at a notable rate. The names sound plausible, fast-crypto-secure, node-advanced-logger.

- Ghost Functions: It might call user.encryptPasswordSHA3() when the actual method in your framework is user.hashPassword().

- Runtime Failures: These dependencies and calls fail only when the code path is executed, often surfacing late in testing or, catastrophically, in production.

Scenario: You prompt, “Write a function to connect to a PostgreSQL DB with SSL.” The AI responds with code using pg.connect_secure(). It’s a convincing method name. But the real library uses pg.connect() with an ssl_mode parameter. Your deployment crashes at 2 a.m.

Security Blind Spots in Automated Logic

Here’s the uncomfortable truth: AI trains on the public internet. Its dataset includes millions of lines of beautiful, efficient, and horribly insecure code. It learns the most common patterns, and unfortunately, common doesn’t mean safe.

According to independent research, this pattern shows up in real ecosystems too, testing found that only 55 % of AI-generated code was free of known cybersecurity vulnerabilities, leaving 45 % of outputs containing flaws like SQL injection and XSS [2].

The AI’s goal is to solve the immediate syntactic puzzle, not to protect your application from threat actors. It will often suggest the shortest path to a working snippet, bypassing fundamental security hygiene.

We must implement secure coding practices as our first line of defense. This means treating every AI suggestion as untrusted input.

| AI Suggestion Pattern | The Hidden Risk | Human Oversight Action |

| Concatenating strings for SQL queries. | SQL Injection vulnerability. | Use parameterized queries or ORM methods. |

| Directly rendering user input in HTML. | Cross-Site Scripting (XSS) attack vector. | Sanitize and escape all dynamic content. |

| Hardcoded API keys or secrets in code. | Credential leakage if repo is exposed. | Move secrets to environment variables. |

| Suggesting a known-deprecated crypto function. | Weak encryption, data breach risk. | Verify against current best-practice libraries. |

The table shows the gap. The AI replicates patterns it saw frequently in its training data, many of which are flawed.

Your job is to intercept these patterns and apply the secure alternative. A static code scanner might catch some of these, but not all. The human reviewer understands the intent of the code and can foresee how it could be maliciously used.

The Context Gap: Why AI Misses the Big Picture

Think of AI as a brilliant bricklayer. You ask for a wall, and it lays bricks with astonishing speed and perfect mortar consistency.

But it doesn’t ask why you want the wall. It doesn’t know you’re building a children’s library and need windows at a specific height. It doesn’t know the land slopes. It just lays bricks.

This is the context gap. Your project has history, architecture, business rules, and legacy systems. The AI sees none of it.

- Business Logic Gaps: AI can’t know your specific user approval workflow or regional data privacy constraints.

- Legacy Integration: It will blithely suggest a modern module that clashes violently with your ten-year-old core system.

- Edge Case Blindness: AI code typically follows the “happy path.” It won’t account for the network timeout, the malformed input file, or the race condition.

A function can be perfectly valid JavaScript and a total disaster for your application’s logic. The AI writes code in a vacuum. You bring the world into the room.

Architecture vs. Snippets

AI is strong at local problems, weak at whole systems. It can spit out a clean function to validate an email, parse a CSV, or call an API.

But that’s very different from deciding where those pieces live, how they talk to each other, and how they age over time. That bigger picture, the architecture, still sits with us.

You can see the split pretty clearly:

- AI: “Here’s a function that works in isolation.”

- You: “How does this fit our services, security, logging, and scaling plan?”

AI doesn’t track:

- how that validation should plug into your existing microservices,

- how it behaves when traffic surges at midnight in a different region,

- how errors should be logged so they match the rest of your observability stack.

We’re the ones holding system coherence in our heads. We remember the legacy module no one wants to touch, the reporting jobs that run overnight, the compliance rules that warp how data flows.

The model just sees a local puzzle: a function, a class, a file, maybe a repo at best.

When no one is thinking about architecture, you end up with a scattered pile of clever snippets, each one “fine” on its own, but misaligned in naming, error handling, logging, retries, or security assumptions.

They don’t click into a single, trustworthy system. The codebase starts to feel like a city built by dozens of contractors who never saw the master plan.

The integrity of the system still depends on someone holding the map, not just admiring each freshly paved street.

That map is human work: understanding tradeoffs, enforcing patterns, and deciding how the whole thing is supposed to behave when it’s under pressure, not just when a single function passes a unit test.

Technical Debt and Training Data Flaws

The problems go beyond runtime errors and security. AI-generated code can silently mortgage your project’s future.

- Outdated Libraries: Training data is a snapshot in time. The AI might enthusiastically suggest a module that was popular three years ago but is now deprecated and unsupported. You inherit maintenance dead ends.

- License Violations: It might replicate a snippet from a GPL-licensed project, creating legal risk for your commercial product. AI doesn’t understand software licensing.

- Optimization Gaps: The code often works but is verbose or inefficient. It might pull in a massive library for a simple task or use a O(n²) algorithm where O(n) exists. This gets deployed, and your cloud bill quietly grows.

The technical debt accumulates not with a bang, but with a thousand small, seemingly correct suggestions. Each one adds a tiny weight.

Soon, the application is slow, expensive to run, and a nightmare to update. You’re paying for the AI’s shortcut.

The Human-in-the-Loop Framework

So what do we do? We build a review framework. We make oversight systematic, not anxious. This isn’t about distrust, it’s about establishing a quality gate.

Here’s a checklist to apply to any significant AI-generated block before it gets merged.

- Verify Every Dependency. Search for each imported package name. Confirm it’s real, maintained, and has a license you can use.

- Sanitize All Inputs. Assume any user or external data is hostile. Manually check for proper escaping and validation.

- Test the Edges. Write unit tests for the failure modes the AI ignored, null values, empty strings, network failures.

- Check Compliance. Does it follow your team’s style guide? Are there any hardcoded values that should be configs?

- Tune Performance. Read the code. Is it doing redundant work? Can a loop be simplified? Refactor for clarity and efficiency.

This process turns you from a proofreader into a director. You’re not just fixing, you’re guiding. This kind of hands-on review mirrors how effective AI debugging and refinement workflows actually work in practice, where human judgment shapes raw output into production-ready logic. You ensure the code doesn’t just run, but that it belongs in your codebase.

Mentorship and the Feedback Loop

One quiet advantage of strict human review is how much teaching it does, almost by accident. Every time a junior developer steps through a shaky AI suggestion, why it’s insecure, why it mishandles errors, why it breaks idempotency, they’re not just fixing code, they’re learning the rule underneath it. The principle sticks because they’ve seen the failure up close.

You can think of it in two layers of growth:

- Juniors learn why standards and guardrails exist.

- Seniors learn how the AI tends to slip, and where to press harder.

There’s another layer, too: correcting the model’s output trains the human, not the machine. As you edit, you start to notice its habits, how it names variables, how it fakes configuration, how it invents missing details. That shapes how you prompt next time:

- You pre-empt common mistakes (“don’t invent APIs, use only documented endpoints”).

- You steer it toward your patterns (“match our existing logging format and error types”).

Over time, this creates a real feedback loop: better prompts, cleaner first drafts, fewer wild errors, faster reviews.

Teams that actively debug AI-generated code effectively start recognizing failure patterns earlier, long before they surface as production incidents.

The AI doesn’t “learn” your corrections in a literal sense, but you do. You become sharper at asking for what you actually need, and at spotting where it’s most likely to go off the rails.

Velocity does go up, but the quality bar doesn’t move. The human review stays where it belongs: as the final checkpoint, the keeper of standards, and the place where both junior and senior engineers quietly get better at their craft.

FAQ

Why does AI code hallucinate and cause runtime errors in real projects?

AI code hallucinations can lead to fabricated packages, invented functions, and syntactic correctness that hides deeper semantic errors.

These issues often surface as runtime errors or dependency failures during execution. Human oversight catches problems AI misses by validating logic, testing assumptions, and ensuring the code actually works in real environments, not just on the surface.

How do security risks explain why AI code needs constant human oversight?

AI-generated code can introduce security risks like cross-site scripting, log injection flaws, insecure patterns, and vulnerability injection.

Because code scanning fails to catch every issue, security blind spots and threat replication remain real concerns. Human validation helps detect subtle risks, apply secure practices, and reduce exposure before the code reaches production.

Why do context limitations make AI code unreliable without human review?

Context limitations mean AI often misses project architecture, business logic gaps, edge case misses, and legacy integration needs.

Domain ignorance and lack of system coherence can break workflows or create fragile solutions. Human reviewers understand the full context, align code with real requirements, and ensure novel solutions actually fit the system.

How do AI model shortcomings lead to production failures?

AI model shortcomings include training data flaws, outdated libraries, license violations, and optimization gaps.

These issues can cause technical debt buildup, scalability issues, and production failures over time. Human oversight ensures dependencies stay current, code remains compliant, and performance tuning happens before problems grow costly.

What oversight benefits explain why AI code needs constant human oversight?

Oversight benefits include bug mitigation, quality assurance, risk reduction, and standards adherence. Through human validation, code review process, compliance checks, and feedback loops, teams improve error detection and system reliability.

Mentorship cycles and continuous monitoring also help teams apply ethical judgment and deliver more stable, maintainable code.

Steering the Ship

AI coding assistants are incredible force multipliers. They handle boilerplate, propose alternatives, and unstick you when you’re staring at a blank file.

But they’re not navigators. They still lack the judgment, context, and ethical responsibility needed for secure, maintainable systems.

Human oversight is not a temporary patch, it’s a permanent part of the partnership. Your role shifts from sole author to editor, reviewer, and architect.

Let the AI shape the raw clay, but you decide what ships. If you want to sharpen that judgment, join the Secure Coding Practices Bootcamp and level up your secure coding skills.

References

- https://www.veracode.com/blog/ai-generated-code-security-risks

- https://www.itpro.com/technology/artificial-intelligence/researchers-tested-over-100-leading-ai-models-on-coding-tasks-nearly-half-produced-glaring-security-flaws