Using vibe coding for legacy code modernization works best when AI is embedded into structured workflows with strict testing, modular architecture oversight, and Secure Coding Practices from day one.

We’ve seen teams accelerate refactoring of monoliths, update legacy APIs, and even modernize COBOL systems without introducing hidden debt, but only when guardrails prevent uncontrolled code generation.

Modernization is as much about planning as tooling, and conversational programming amplifies both speed and risk. In this guide, we show how to leverage AI safely, avoid common pitfalls, and keep your legacy systems stable and maintainable.

Quick Wins – Modernizing with AI Without Breaking Things

- Vibe coding accelerates scaffolding and test generation, but unchecked AI code generation creates hidden technical debt.

- Hybrid workflows combining AI augmentation and human validation reduce migration risks and hallucination errors.

- Secure Coding Practices should anchor every modernization strategy before AI-driven refactors begin.

What Is Vibe Coding and Why Is It Entering Legacy Modernization?

Vibe coding uses natural language prompts with AI tools like GitHub Copilot to generate code from intent instead of writing line by line.

As vibe coding becomes more common in modernization efforts, teams increasingly rely on it to speed up refactoring while preserving existing system behavior. By 2025, improvements in large language models encouraged development teams to experiment with AI-assisted refactoring for legacy systems.

We’ve applied this approach ourselves. In a recent Java monolith modernization, AI scaffolded CRUD modules rapidly, saving days of manual coding.

However, undocumented business rules and hidden dependencies forced us to pause, validate, and supplement AI output with human review. Without these safeguards, automation alone risks introducing inconsistencies or technical debt.

The main drivers for legacy modernization usually include:

- Growing maintenance burdens from legacy debt

- COBOL and mainframe modernization demands

- Migrating monolithic services to microservices

- Productivity stagnation in development teams

We’ve learned that speed is enticing, but maintainability determines long-term success. By combining AI-assisted coding with controlled testing, incremental refactoring, and Secure Coding Practices, teams can modernize safely while preserving architectural integrity.

Why Does Vibe Coding Create Instant Legacy Debt?

Vibe coding can accelerate refactoring, but without strong tests and clear architectural ownership, AI-generated changes often lack traceability. On Reddit, threads with 67 upvotes describe vibe-generated code as “legacy code in disguise,” citing opaque logic, unpredictable outputs, and hidden assumptions.

AI predicts likely intent, not actual semantics. When we’ve used it to refactor undocumented modules, edge case handling sometimes disappears silently. Debugging those outputs becomes a reverse-engineering exercise: developers spend hours interpreting AI reasoning rather than improving the system.

Insights from OWASP indicate:

“Although it was never a good idea to copy code snippets from blogs or websites without thinking twice, the problem is exacerbated in this case.” – OWASP

Debt accelerators usually include:

- Minimal unit testing or skipped TDD

- AI hallucinations inside critical business logic

- Migration of CRUD prototypes to complex domains

- Direct promotion of draft code to production

At Secure Coding Practices, we treat vibe-generated code as a draft. Human review, test supplementation, and security validation come first, not as a recommendation, but as a survival strategy.

When Can Vibe Coding Accelerate Modernization Safely?

Vibe coding can accelerate modernization when applied to narrowly scoped tasks rather than full system rewrites.

Teams see the most consistent results when modernization follows advanced workflows that constrain AI output through testing, review, and incremental delivery. We’ve seen it add the most value in AI scaffolding, documentation generation, and small refactoring patterns.

Examples include translating isolated Fortran utilities to Python, generating test harnesses for undocumented functions, and extracting service boundaries from a Java monolith using the strangler pattern.

It becomes risky in complex domains. Deep migrations like financial rule engines or healthcare compliance modules often hide regulatory nuance that AI cannot reliably infer. From our experience, safe AI-assisted modernization works in three recurring scenarios:

- Generating unit tests before refactoring a monolith

- Producing documentation to support legacy inventory and code archaeology

- Suggesting refactoring patterns that are reviewed and approved by engineers

We follow hybrid workflows inspired by Google Cloud guidance, emphasizing incremental evolution over big-bang rewrites. AI is there to extend engineers’ capabilities, not replace architectural thinking. That distinction keeps modernization controlled and prevents hidden legacy debt.
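The first scenario, generating unit tests before refactoring, is often handled with characterization tests that pin down what the legacy code does today, quirks included. Below is a minimal sketch in Python. The `legacy_shipping_fee` function, its fee cap, and the recorded cases are hypothetical stand-ins for an undocumented routine, not code from a real project.

```python
def legacy_shipping_fee(weight_kg, express=False):
    """Hypothetical stand-in for an undocumented legacy routine."""
    fee = 5.0 if weight_kg <= 1 else 5.0 + (weight_kg - 1) * 2.0
    if express:
        fee *= 1.5
    # Undocumented rule of the kind a rewrite can silently drop:
    # fees are capped at 50.
    return min(fee, 50.0)


# Outputs recorded from the legacy code as it runs today. They pin current
# behavior; they do not claim that the behavior is correct.
RECORDED_CASES = [
    ((0.5, False), 5.0),
    ((1.0, False), 5.0),
    ((3.0, False), 9.0),
    ((3.0, True), 13.5),
    ((100.0, False), 50.0),  # exercises the cap
]


def find_mismatches(func, cases=RECORDED_CASES, tolerance=1e-9):
    """Return (args, expected, actual) for every case where func deviates."""
    mismatches = []
    for args, expected in cases:
        actual = func(*args)
        if abs(actual - expected) > tolerance:
            mismatches.append((args, expected, actual))
    return mismatches


def refactored_without_cap(weight_kg, express=False):
    """Hypothetical rewrite that lost the undocumented cap."""
    fee = 5.0 + max(weight_kg - 1, 0) * 2.0
    return fee * 1.5 if express else fee
```

Running `find_mismatches(refactored_without_cap)` flags only the capped case, which is the kind of silent behavior change a reviewer would otherwise have to spot by reading the diff.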

What Does a Hybrid Workflow Look Like in Practice?

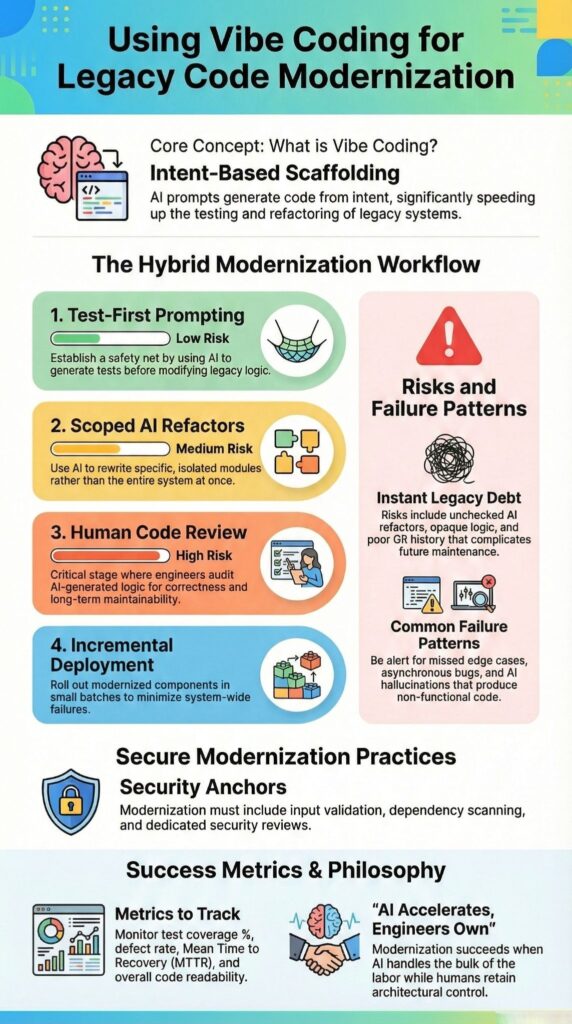

A hybrid workflow balances AI acceleration with human oversight to keep legacy modernization safe and maintainable.

Maintaining code quality with AI in these workflows depends on small changes, layered validation, and clear human accountability at every stage. We’ve found a structured four-step process works best in production projects.

- Test-First Prompting – We start by generating unit tests for legacy functions before any refactor. This establishes baseline behavior and ensures coverage.

- Scoped Refactor Prompts – AI suggestions stay narrow, such as converting loops to async Python. Outputs are compared against logs, and edge cases are verified manually.

- Human Code Review – Engineers check architecture alignment, naming consistency, and dependency integrity. Secure Coding Practices enforce input validation and security scanning.

- Incremental Deployment – New modules are isolated with feature flags. Canary releases monitor defect rates and recovery metrics before broader rollout.
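The incremental deployment step can be sketched with a percentage-based flag. This is a minimal illustration rather than a recommendation of any particular flag system: the function names, the `rollout_percent` parameter, and the fall-back-on-error policy are all assumptions made for the example.

```python
import hashlib


def in_canary(user_id: str, rollout_percent: int) -> bool:
    """Deterministically assign a user to the canary group.

    Hashing keeps each user in the same bucket across requests.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in 0..99
    return bucket < rollout_percent


def legacy_total(items):
    """Hypothetical legacy implementation."""
    total = 0
    for price, qty in items:
        total += price * qty
    return total


def modern_total(items):
    """Hypothetical refactored implementation behind the flag."""
    return sum(price * qty for price, qty in items)


def order_total(user_id, items, rollout_percent=5):
    """Route a small share of traffic to the new path, falling back on error."""
    if in_canary(user_id, rollout_percent):
        try:
            return modern_total(items)
        except Exception:
            # A real system would log this and feed the defect-rate metric.
            return legacy_total(items)
    return legacy_total(items)
```

Setting `rollout_percent` to 0 turns the new path off without a redeploy, so a rollback does not depend on reverting the AI-assisted change.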

Below is the modernization workflow summary:

| Phase | AI Role | Human Role | Risk Level |
| --- | --- | --- | --- |
| Tests | Generate test harness | Validate coverage | Low |
| Refactor | Suggest pattern changes | Review and adjust | Medium |
| Architecture | Limited support | Lead design decisions | High |

This hybrid approach keeps AI scaffolding contained while preserving code maintainability and long-term evolution.

What Are Real Failure Patterns in Vibe-Led Migrations?

Vibe coding can accelerate legacy modernization, but without strict oversight, it introduces subtle failure patterns. We’ve seen firsthand that even well-intentioned AI scaffolding can silently break business logic or async flows under load.

- Statistical Rewrites of Undocumented Constraints – AI guesses missing rules, creating hidden edge case failures.

- Overconfident Test Generation – Unit tests reflect AI assumptions rather than real-world scenarios.

- Architecture Drift – Incremental AI-driven refactors can fragment service boundaries in microservices conversions.

- Opaque Logic – Engineers struggle to trace AI changes, increasing maintenance burden.

In one billing system migration, asynchronous conversion introduced race conditions we only discovered under production-like stress tests. Online communities call this “AI spaghetti,” where incremental conversational edits accumulate technical debt quietly.

We treat AI outputs as draft scaffolding. Human validation, explicit edge case documentation, and security scanning are non-negotiable. The AI accelerates execution. We remain responsible for correctness, maintainability, and compliance. That mindset keeps modernization controlled, traceable, and safe.
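Race conditions like the one in the billing migration can appear when a synchronous read-modify-write is converted to async code and an `await` lands between the read and the write. Below is a minimal sketch of that lost-update pattern. The `Account` class is hypothetical, and `asyncio.sleep(0)` stands in for a real I/O call; this is not a reconstruction of the actual billing defect.

```python
import asyncio


class Account:
    """Hypothetical billing account used only for illustration."""

    def __init__(self):
        self.balance = 0


async def charge_unsafe(account, amount):
    current = account.balance           # read
    await asyncio.sleep(0)              # stands in for an awaited I/O call
    account.balance = current + amount  # write based on a stale read


async def charge_safe(account, amount, lock):
    async with lock:                    # serialize the read-modify-write
        current = account.balance
        await asyncio.sleep(0)
        account.balance = current + amount


async def run_demo(charges=10):
    """Apply the same charges through both paths and return both balances."""
    unsafe, safe = Account(), Account()
    lock = asyncio.Lock()
    await asyncio.gather(*(charge_unsafe(unsafe, 1) for _ in range(charges)))
    await asyncio.gather(*(charge_safe(safe, 1, lock) for _ in range(charges)))
    return unsafe.balance, safe.balance
```

Calling `asyncio.run(run_demo())` returns a locked balance equal to the number of charges, while the unlocked balance comes out lower because concurrent tasks overwrite each other. A sequential unit test of `charge_unsafe` would pass, which is why this class of bug tends to surface only under concurrent load.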

How Secure Coding Practices Anchor Vibe Coding in Legacy Code Modernization

Secure Coding Practices form the first line of defense in any vibe coding workflow. AI-generated code reflects statistical patterns, not security intent, and legacy systems often harbor decades of unpatched vulnerabilities. Without a security baseline, AI-assisted refactoring can accelerate risk instead of reducing it.

We frequently encounter legacy systems with hardcoded credentials, outdated encryption libraries, and missing input validation. In these contexts, AI scaffolding can unintentionally reproduce unsafe patterns.

As highlighted by NIST:

“Follow all secure coding practices that are appropriate to the development languages and environment to meet the organization’s requirements.” – NIST

For example, we’ve seen code generation replicate SQL string concatenation simply because it statistically appeared in training data. Without explicit review gates and precise prompts, those flaws propagate silently.
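To make the SQL concatenation risk concrete, here is a minimal sketch using Python's standard `sqlite3` module. The `users` table, its rows, and the function names are hypothetical and exist only for the example.

```python
import sqlite3


def make_db():
    """Create an in-memory database with two illustrative rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Legacy-style pattern: user input is spliced into the SQL text.
    query = "SELECT name, role FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the value is passed separately from the SQL text.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()
```

With the input `' OR '1'='1`, the concatenated version returns every row in the table, while the parameterized version treats the input as a literal name and returns nothing. A review gate that rejects string-built SQL catches this regardless of whether a human or an AI wrote it.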

Secure Coding Practices provide structured guardrails:

- Mandatory input validation and output encoding

- Dependency scanning during automated refactoring

- Security-focused AI code review checkpoints

- Test supplementation for authentication and authorization flows

Including spec-driven constraints in prompts, such as “adhere to OWASP standards,” improves safety and helps mitigate hallucinations. When we apply these standards consistently, engineers feel confident evolving AI-augmented modules.

FAQ

How does vibe coding help modernize complex legacy code safely?

Vibe coding allows teams to use AI-assisted coding and natural language prompts to modernize legacy code in controlled stages.

Instead of rewriting entire systems at once, teams apply conversational programming to test refactoring patterns and reduce technical debt incrementally. Human validation, structured unit testing, and clear code quality metrics improve code maintainability and reduce migration risks throughout the modernization process.

What risks should I watch for when modernizing legacy systems with AI?

Common risks include AI hallucinations, opaque AI logic, and complex refactor failures that introduce new defects.

Because AI code generation relies on statistical patterns, it can misinterpret undocumented code or overlook domain knowledge. Without careful debugging of AI outputs and thorough edge case handling, teams may create AI spaghetti or untestable logic that increases long-term maintenance burden.

How can I avoid technical debt during legacy code modernization?

Technical debt increases when prototype code from rapid prototyping moves into production without review.

During code modernization, teams should track code quality metrics, apply automated refactoring carefully, and enforce refactor safety guidelines. Test-driven development, consistent unit testing, and test-first prompting protect code readability and prevent legacy debt accumulation across evolving systems.

Can AI-assisted migration handle large monolithic systems effectively?

AI-assisted migration can support monolithic refactoring, microservices conversion, and COBOL modernization when guided by a clear modernization strategy.

Teams should begin with a detailed legacy inventory and structured legacy analysis before initiating changes. For scenarios such as Fortran to Python conversion or updating a Java monolith, hybrid workflows that combine AI augmentation with strong domain expertise reduce migration risks and bus factor risks.

What best practices improve results with vibe-driven development?

Effective vibe-driven development requires disciplined prompt engineering, consistent prompt iteration, and deliberate prompt optimization.

Teams should treat AI prototyping as a technical spike rather than final production code. Spec-driven coding, structured AI code review, and systematic AI debugging strengthen code lineage and AI code hygiene while preventing common vibe code pitfalls during long-term code evolution.

How to Use Vibe Coding for Legacy Code Modernization Strategically

Vibe coding accelerates legacy modernization only when experimentation speed is balanced with human-led governance and maintainability. Hybrid approaches, disciplined scaffolding, and careful metric tracking, like test coverage, defect rate, and MTTR, prevent AI from introducing hidden debt.
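The metrics mentioned above are straightforward to compute once the raw data is collected. Below is a minimal sketch, assuming incidents are recorded as (detected, resolved) timestamp pairs and defect rate means defects per deployment. Both definitions are assumptions for the example, since teams define these metrics differently.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents):
    """Average of (resolved - detected) across incidents, as a timedelta."""
    if not incidents:
        return timedelta(0)
    total = sum(
        (resolved - detected for detected, resolved in incidents),
        timedelta(0),
    )
    return total / len(incidents)


def defect_rate(defects, deployments):
    """Defects per deployment; returns 0.0 when nothing has shipped."""
    return defects / deployments if deployments else 0.0
```

Tracking these values before and after AI-assisted changes gives a baseline for judging whether a refactor improved the system or only moved faster.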

We’ve seen AI scaffold legacy lifts effectively when paired with Secure Coding Practices, manual validation, and incremental deployment. Modernization isn’t a full rewrite; it’s controlled evolution, where engineers guide AI outputs and preserve architectural integrity.

Explore how Secure Coding Practices can strengthen your modernization journey.
