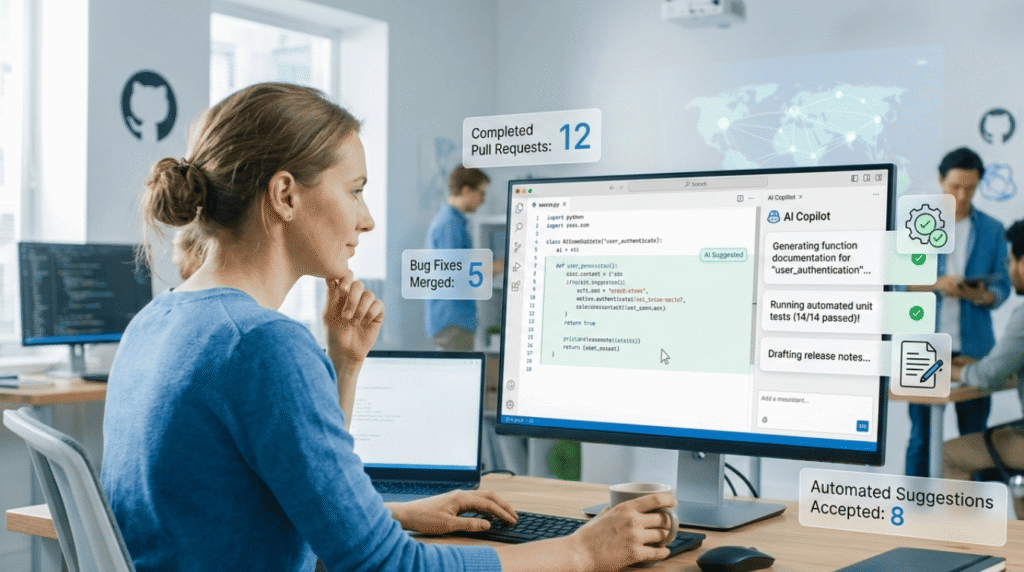

Open source is changing because AI now writes a growing share of the code. That means far more pull requests, and developers spend more of their time planning and reviewing rather than only writing.

This shift shows up in reports from the Linux Foundation and GitHub. AI tools generate patches, docs, and tests almost instantly, and many developers use them daily.

It causes problems, too. Maintainers face floods of automated code, security concerns are growing, and the community argues over whether this helps or harms the spirit of collaboration.

Here’s what this means for how we write code and work together next.

What Developers Should Know About AI and Open-Source Contributions

AI is changing how we work on open source. Code gets written faster, but we have new jobs to do.

- AI speeds up building things. Most coders now use it for writing, fixing, and explaining code.

- Maintainers have a harder job. They face more low-quality AI-generated code, more security risks, and more contributions from people they don’t know.

- Secure coding is more important than ever. AI-generated code must be reviewed before it ends up inside other software.

How Is AI Changing the Way Developers Contribute to Open Source?

We’re not just writing code anymore. Now, we’re often directing and checking the work of an AI that writes it for us.

This shift began when AI coding assistants became common. GitHub’s own research reports that most developers now use AI tools at work, and that changes how people contribute to public projects.

We see this in our own work. We still write code, but often we tell an AI what to do first, then fix up what it gives us. That small step changes how a coding session feels.

Now, the AI might write a patch first. The developer’s job is to review it, plan how it fits, and make sure it’s safe. They aren’t the only ones writing it anymore.

As Red Hat puts it:

“We don’t see AI as a replacement for developers. The goal is to automate tedious tasks to free them up for complex, creative problem solving. We believe in a future where developers are amplified, not automated. Our principle of human accountability reframes AI as a powerful assistant and tutor, not a replacement.” – Red Hat

Here’s how it used to work:

- A person wrote the code.

- They sent a pull request.

- A project maintainer checked it and added it.

Here’s how it often works with AI:

- The AI creates a starting point or common code.

- The developer fixes and checks it.

- The maintainer looks at the design and security.

As AI models improve, they can write larger pieces of software on their own. Teams building on platforms such as NVIDIA’s already use machine-generated suggestions to move faster.

The bottom line: coding is less about typing and more about directing what the AI does.

What Positive Impacts Could AI Bring to Open-Source Projects?

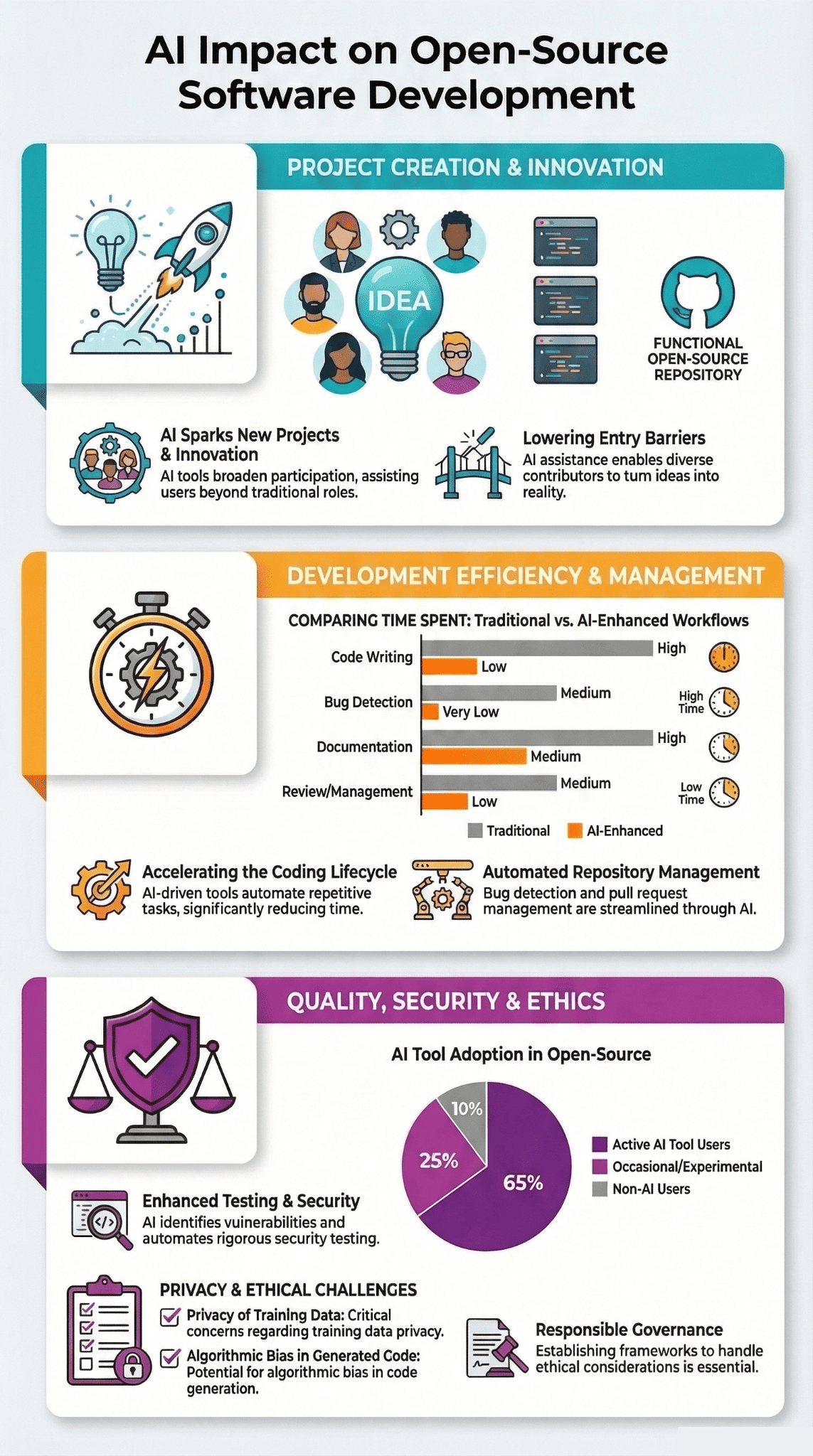

AI can make open source projects much more productive. It handles jobs like writing docs, finding bugs, and creating simple code. This lets maintainers handle more work.

First, it helps developers get more done. A Stack Overflow survey found that most developers use AI for daily tasks, and we see the same pattern in projects that have adopted these tools.

Everyday tasks get faster. Writing code comments, explaining a problem, or generating tests can take moments with AI. The human work shifts to checking facts and polishing.

A recent analysis published in Florida Online Journals puts it this way:

“The 80% of project time traditionally spent on data engineering is now drastically reduced through AI assistance, allowing open-source teams to focus on innovation rather than infrastructure, a shift that’s fundamentally changing development economics and team composition.” – Florida Online Journals

We’ve felt this ourselves. A guide update that used to take half an hour can now start from a first draft generated in seconds. We spend our time making it right instead of starting from nothing.

AI also makes it easier for new people to join. If someone doesn’t know a big project, they can ask AI to explain parts of it or suggest a fix. This is important because many projects need new helpers.

A few clear benefits show up in many places:

- Faster bug fixing with AI help.

- Docs written automatically for projects.

- Ideas for fixing common errors.

AI also helps people build open-source AI models. Communities on Hugging Face or around local models let developers train and share machine learning systems more easily.

This creates a cycle. AI speeds up building tools, and those better tools then help build the next AI.

What Problems Are Emerging From AI-Generated Contributions?

AI can flood projects with bad code. It also adds security risks. This means maintainers spend more time checking code than writing it.

The biggest problem is too much code. AI makes it very easy to create code, so projects get too many contributions.

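Maintainers in many projects talk about “AI slop”: automated patches that add unnecessary or duplicated code. Developers and news sites like Ars Technica have reported on the problem. The 2024 xz Utils backdoor, although it was planted by a human contributor rather than generated by AI, showed how much damage unvetted code in a widely used project can do.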

Security is another big worry. AI models sometimes reproduce insecure patterns from their training data. If no one reviews that code carefully, it can spread into other software and cause problems.

We’ve seen how bad this can get. The SolarWinds attack showed that a compromised software supply chain can hurt thousands of organizations.

We think a similar risk is emerging now. AI writes code fast, but fast does not mean safe, which is why many teams are paying closer attention to the future of AI-assisted development and to how automated code should be reviewed before it reaches production systems.

A few problems keep showing up:

- Low-value or duplicate pull requests.

- Code that adds dependencies without vetting them.

- Hidden bugs or old, unsafe ways of writing code.

This is why secure coding matters so much. In our work, security checks come first: any AI-generated patch must pass our automated tooling and then be reviewed by a person.

Without these steps, automated code can slowly make a project less safe.
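The "tools first, then a person" rule can be sketched as a small merge gate. This is a minimal illustration under stated assumptions, not any specific project’s tooling: the `Patch` record, its field names, and the one-approval default are hypothetical, and `scanner_findings` stands in for whatever static analysis or dependency scanning a project actually runs.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Patch:
    # Hypothetical record of a contribution; field names are illustrative only.
    author: str
    scanner_findings: list[str] = field(default_factory=list)  # issues reported by the project's scanners
    human_approvals: set[str] = field(default_factory=set)     # people who approved after reading the diff


def can_merge(patch: Patch, min_approvals: int = 1) -> tuple[bool, str]:
    """Apply 'automated checks first, then a human' and explain the decision."""
    # Step 1: any open scanner finding blocks the patch outright.
    if patch.scanner_findings:
        return False, f"blocked: {len(patch.scanner_findings)} scanner finding(s)"
    # Step 2: the author's own approval does not count as independent review.
    reviewers = patch.human_approvals - {patch.author}
    if len(reviewers) < min_approvals:
        return False, f"needs {min_approvals} independent approval(s), has {len(reviewers)}"
    return True, "ok"
```

For example, `can_merge(Patch("alice", [], {"bob"}))` passes, while the same patch approved only by `"alice"` is held until someone else reviews it. Returning a reason string alongside the decision keeps the gate explainable to contributors.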

How Are Maintainer Roles and Developer Skills Evolving?

Open-source maintainers are starting to manage AI more than write code. Their skills are changing to focus on design, checking work, and setting rules.

You can see this change in reports from groups like the Linux Foundation.

Maintainers don’t just write code now. They spend a lot of time looking at what AI has made and deciding where the project should go.

From our own work on projects, the real effort often happens before any code is merged. Discussing how to build something, how to test it, and how to keep it safe now matters more than the code itself. That is especially true as developers experiment with vibe coding, where AI generates large portions of software while humans guide the structure.

Because of this, developers need new skills.

- How to ask an AI the right questions.

- How to use AI to find and fix bugs.

- How to check what a model made and see if it’s good.

This matches discussions at events like the PyTorch Conference about how open-source AI is changing the way engineers work.

Developers who know about data systems and how models work have a big edge now.

A software developer’s job is now part builder, part reviewer of machine output.

In the end, a person’s decision is still the most important safety step.

How Are Open-Source Communities Responding to AI PR Flooding?

Credits: DigitalOcean

Projects are setting up guardrails for the wave of AI-generated code.

Many projects are changing how they work. Some now ask contributors to discuss a change before sending code. Others temporarily stop accepting outside contributions.

Maintainers such as Daniel Stenberg, the creator of curl, have said publicly that they don’t want low-effort, AI-generated submissions.

The goal isn’t to stop AI. It’s to build better tools to handle it.

Projects are now using automated systems that check contributions first. A computer looks at the code before a human does.

| Problem | New Solution | Example |
| --- | --- | --- |
| Too many bad AI pull requests | Systems that sort them automatically | Pipelines that check code in CI |
| Security risks from AI code | Tools that scan for unsafe parts | Supply chain analysis tools |
| Not knowing who to trust | Systems that check who is contributing | Verified contributor workflows |
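The automatic sorting step in the table’s first row can be sketched as a small pre-review pass. This sketch rests on several assumptions: the `PullRequest` record is a hypothetical simplification (not a real forge API), PRs whose changed lines match after whitespace is removed are treated as duplicates, a linked issue is taken as evidence that the change was discussed first, and new dependencies are compared against a project allowlist. The label names are invented for illustration.

```python
from __future__ import annotations

import hashlib
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical, simplified view of a PR; real forges expose richer objects.
    number: int
    diff: str                               # unified diff text
    linked_issue: Optional[int] = None      # issue where the change was discussed first
    new_dependencies: list[str] = field(default_factory=list)


def diff_fingerprint(diff: str) -> str:
    """Hash only added/removed lines with whitespace stripped, so copies that
    differ only in formatting or file headers get the same fingerprint."""
    changed = []
    for line in diff.splitlines():
        # Heuristic: skip '---'/'+++' file headers (this also skips removed
        # lines whose text happens to start with '--').
        if line.startswith(("+++", "---")):
            continue
        if line.startswith(("+", "-")):
            changed.append(line[0] + re.sub(r"\s+", "", line[1:]))
    return hashlib.sha256("\n".join(changed).encode("utf-8")).hexdigest()


def triage(prs: list[PullRequest], allowed_deps: set[str]) -> dict[int, list[str]]:
    """Label each PR before a human looks at it; the oldest PR wins duplicate ties."""
    first_seen: dict[str, int] = {}
    labels_by_pr: dict[int, list[str]] = {}
    for pr in sorted(prs, key=lambda p: p.number):
        labels = []
        fp = diff_fingerprint(pr.diff)
        if fp in first_seen:
            labels.append(f"duplicate-of-#{first_seen[fp]}")
        else:
            first_seen[fp] = pr.number
        if pr.linked_issue is None:
            labels.append("needs-discussion")
        unvetted = sorted(d for d in pr.new_dependencies if d not in allowed_deps)
        if unvetted:
            labels.append("unvetted-deps:" + ",".join(unvetted))
        labels_by_pr[pr.number] = labels or ["ready-for-human-review"]
    return labels_by_pr
```

The pass only labels; it never closes or merges anything, so a maintainer still makes the final call while spending attention on the PRs marked `ready-for-human-review` first.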

Large organizations such as the Linux Foundation are also developing guidance for secure development, and meetings like the SIMPL Conference discuss how communities can work together on these rules.

Projects also increasingly ask contributors to disclose when AI wrote the code and to state that they reviewed it themselves.
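One lightweight way to record this kind of disclosure is with git commit trailers. `Signed-off-by:` is the standard Developer Certificate of Origin trailer that `git commit -s` adds. The `Assisted-by:` trailer used below for naming an AI tool is a hypothetical convention, so check a project’s contribution guide for the one it actually uses. The parser is also simplified: it treats the final paragraph as trailers only when every line looks like `Key: value`, whereas git’s own rules are more lenient.

```python
import re

# 'Key: value' lines, e.g. 'Signed-off-by: Ana Dev <ana@example.org>'
TRAILER_RE = re.compile(r"^([A-Za-z][A-Za-z0-9-]*):\s*(.+)$")


def parse_trailers(message: str) -> list[tuple[str, str]]:
    """Return (lowercased key, value) pairs from the final paragraph of a commit message."""
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    if len(paragraphs) < 2:
        return []  # a subject line alone has no trailer block
    trailers = []
    for line in paragraphs[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if not match:
            return []  # simplification: a mixed paragraph is treated as body text
        trailers.append((match.group(1).lower(), match.group(2).strip()))
    return trailers


def check_disclosure(message: str, author_email: str) -> dict:
    """Report whether the author signed off and whether AI assistance was disclosed."""
    trailers = parse_trailers(message)
    signoffs = [value for key, value in trailers if key == "signed-off-by"]
    ai_tools = [value for key, value in trailers if key == "assisted-by"]  # hypothetical trailer
    return {
        "signed_off_by_author": any(f"<{author_email}>" in s for s in signoffs),
        "ai_assisted": bool(ai_tools),
        "ai_tools": ai_tools,
    }
```

A CI job could run this on each commit and show the result to reviewers. Note that it can only confirm that a disclosure and sign-off are present; it cannot detect AI use that was never disclosed.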

This makes secure coding very important, especially in big systems like Linux. We teach these practices because they are the first line of defense.

Will AI Help Open Source Compete With Proprietary Software?

AI might make open source stronger. It could let small teams build software that competes with big companies.

Before, big tech companies had an advantage. They had huge teams and their own systems.

AI changes that.

Now, a small group of open-source developers can build strong apps. They can use shared tools like NVIDIA’s platforms.

We see this working in some communities already.

| Area | How AI Helps Open Source | How Proprietary Software Works |
| --- | --- | --- |
| Speed | AI helps code faster | Uses big internal teams |
| New ideas | The whole community tries things | A company’s research team does it |
| Openness | The code is public for anyone to see | Development is hidden |

Events like Open Source AI Week and awards in Europe show open work is getting more important in the AI age.

Even big companies and research groups use open ecosystems to build things.

In this way, open-source AI isn’t just a way to build software. It’s a real alternative to closed, proprietary platforms.

The real strength is that the whole world can work together.

What Will Open-Source Contributions Look Like by 2026 and Beyond?

By 2026, AI will probably write a lot of open-source code. People will focus more on planning, making rules, and checking for safety.

We can already see this starting. Research shows over 100,000 developers are part of Linux Foundation AI work. They are building the tools for this future.

In the future, AI programs might send patches to projects on their own.

People will still be very important.

They will design how things fit together, check the AI’s work, and make sure the code is safe. This becomes even more important as teams experiment with managing large-scale projects with vibe coding, where AI generates major components while humans coordinate architecture and security.

A few things seem likely to happen:

- AI programs that write and send code by themselves.

- More small, local AI models for devices.

- Better tools for developers.

New hardware also helps. Platforms from NVIDIA and others make it easier to run powerful models on regular computers.

At the same time, how a community runs itself will matter more. Developers will need to figure out trust, security rules, and how to keep people helping.

The social part of open source will become just as important as the code.

FAQ

How could Open Source AI change teamwork in open source projects?

Open Source AI can improve teamwork in open source projects. AI tools analyze source code and suggest fixes or improvements, while software developers still review pull requests before changes are accepted.

This review process keeps software development reliable. Generative AI may improve developer productivity, but human interaction remains essential: open-source developers decide how AI is used so that the tools support genuine community contributions and healthy open source activity.

Why are Machine Learning ideas growing inside open-source repositories?

Machine Learning ideas are growing inside open-source repositories because many developers share data, models, and experiments. Open-source AI and open-source models allow software developers to study and improve foundation models together.

This shared work helps teams build better AI-powered applications. Software engineers also review benchmark scores and model capabilities to maintain quality and protect the dependency ecosystem from potential security problems.

Can generative AI improve Pull Request quality in open source software?

Generative AI can help improve pull requests in open-source software by drafting source code, suggesting fixes, and writing simple tests. Software developers review every pull request before accepting the change.

This process ensures that the code meets project standards. Human review also keeps software development aligned with the goals of the open source community.

What security risks appear when AI tools write source code?

Security risks can appear when AI tools generate source code too quickly. AI may introduce hidden bugs or unsafe patterns into the dependency ecosystem. Attackers may also try to plant backdoors or run phishing campaigns against the maintainers of open-source repositories.

Software developers must review pull requests and check each line of code carefully. Strong supply chain security practices help protect open source software and the broader software development environment.

Why are local open models important for future open source development?

Local open models are important because they allow open source software to run without relying on proprietary tech. Software developers can test model capabilities directly and improve foundation models. These models support AI-powered applications and edge computing systems.

Open-source repositories also make it easier to share research and data, which strengthens open source activity and helps software engineers work more productively.

What the Future of AI Means for Open-Source Contributors

Open source has never been about tools. It has always been about people choosing to build together. AI may write code faster than any developer alive. But speed alone cannot protect users or keep software safe. That work still depends on people who care about quality and strong Secure Coding Practices.

Think of open source like a shared city. AI can stack the bricks, but builders must make sure the walls hold. If you want to write safer code and lead the next wave of development, you can join the Secure Coding Practices Bootcamp and start building software that people trust.

References

- https://www.redhat.com/en/blog/accelerating-open-source-development-ai

- https://journals.flvc.org/FLAIRS/article/download/139001/144119/276299