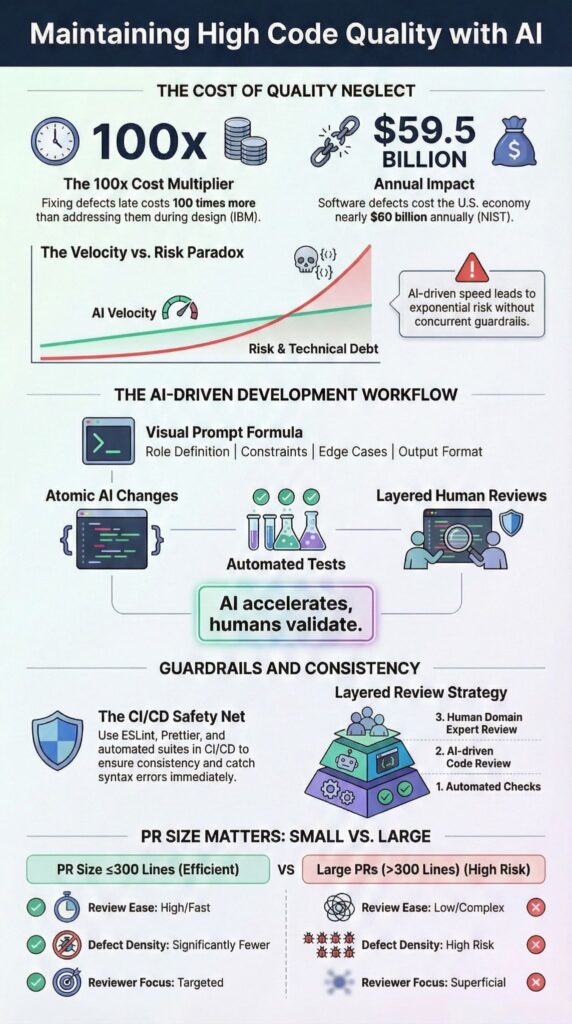

Maintaining high code quality with AI requires structured workflows, strict testing, and layered human oversight to balance speed with reliability. AI can accelerate development, but without guardrails, small errors compound quickly, creating architectural debt.

We have integrated AI code generation into production teams and consistently see velocity spike alongside risk when safeguards are absent. Teams that treat AI as a tool, not an authority, embed it into rigorous processes, testing, and review cycles.

This guide explains how to maintain high code quality with AI using secure coding practices, controlled changes, and collaborative review. Keep reading to implement this framework responsibly.

Key Takeaways

- High code quality with AI depends on Secure Coding Practices, strict testing, and small, reviewable changes.

- AI-assisted refactoring and AI code review improve speed, but humans must validate domain logic and edge cases.

- Layered automation, code quality metrics, and structured prompts reduce technical debt and AI drift.

What Does “High Code Quality” Mean in an AI-Assisted Workflow?

High code quality with AI means combining automated generation with human validation to ensure readability, maintainability, security, and performance meet agreed engineering standards.

Teams experimenting with vibe coding often learn that quality only holds when speed is paired with explicit structure and accountability. We focus on measurable outcomes rather than assumptions, embedding AI into structured workflows rather than relying on it blindly.

In our teams, we evaluate quality across five dimensions:

- Readability and naming conventions

- Maintainability through modular design and code metrics

- Testability with AI-generated unit tests

- Security validation under Secure Coding Practices

- Performance and scalability optimization

AI changes the workflow dynamic. Speed increases dramatically; in some internal sprints, feature scaffolding time dropped nearly 40 percent. But risk rises alongside it. AI can sound confident even when producing incorrect code.

The difference between prototype-level and production-grade code comes down to discipline. Prototype code may run once. Production code must survive refactors, schema evolution, concurrency challenges, and future developer edits. In our Secure Coding Practices bootcamps, we emphasize building workflows that enforce this discipline from day one.

Why Does AI-Generated Code Drift Toward “Slop”?

AI-generated code tends to degrade when prompts lack architectural context, automated tests are skipped, or large diffs overwhelm reviewers. The result is inconsistent logic, hidden regressions, and subtle structural decay.

From our experience, common causes of drift include:

- Large multi-file updates without clear boundaries

- Missing performance and scalability constraints

- Absence of Secure Coding Practices in prompts

- Skipped automated testing or unit coverage

- No enforcement of code quality thresholds

Early warning signs often appear quietly. Naming conventions shift across modules, DRY principles erode, and KISS adherence weakens. Cyclomatic complexity creeps up unnoticed. We’ve seen AI pair programming generate scaffolds quickly but fail on async flows or threading edge cases.

As highlighted by the GitHub Blog:

“The best drivers aren’t the ones who simply go the fastest, but the ones who stay smooth and in control at high speed.”

The issue isn’t AI intelligence; it’s context. Without clear boundaries and verification layers, AI optimizes locally but breaks global consistency. In our Secure Coding Practices bootcamps, we emphasize building workflows that enforce boundaries, tests, and governance so AI becomes a productivity multiplier rather than a source of technical debt.

How Do You Structure Small, Contained AI Changes?

We limit AI to one or two files per task, enforce atomic commits, and isolate work in feature branches. This keeps review clarity high and reduces the risk of defects spreading across the codebase.

We follow three strict steps:

Step 1: Define a narrow scope

Each task targets a single module, function, or API endpoint. Acceptance criteria include:

- Explicit inputs and outputs

- Performance expectations

- Security constraints aligned with Secure Coding Practices

Step 2: Enforce atomic commits

Every commit represents one logical change. We avoid mixing refactoring with new features. Clear commit messages improve traceability and help teams track AI-driven modifications efficiently.

Step 3: Keep pull requests small

| Practice | Why it works | Risk if ignored |
| --- | --- | --- |
| ≤300 lines per PR | Reduces cognitive load | Hidden regressions |
| Single responsibility | Ensures modular code | Tangled logic |
| Focused reviewer | Strengthens accountability | Diffused ownership |

Small, disciplined diffs improve CI checks, automated testing, and rollback strategies. In our experience, teams that respect these boundaries maintain higher code quality and safer production deployments when AI is involved.
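A size limit is simple to enforce mechanically. The following is a minimal sketch that reads the tab-separated output of `git diff --numstat` and checks it against the 300-line guideline. It assumes that "lines per PR" means added plus deleted lines and that binary files (reported by git as `-`) are ignored. The function names are hypothetical.

```python
MAX_CHANGED_LINES = 300  # guideline from the table; adjust to your team's policy


def changed_lines(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output."""
    total = 0
    for line in numstat.splitlines():
        if not line.strip():
            continue
        added, deleted, _path = line.split("\t", 2)
        if added == "-" or deleted == "-":
            continue  # binary file: git reports no line counts
        total += int(added) + int(deleted)
    return total


def pr_within_budget(numstat: str, limit: int = MAX_CHANGED_LINES) -> bool:
    """True when the diff stays at or under the line budget."""
    return changed_lines(numstat) <= limit
```

In a CI job, the output of `git diff --numstat` against the target branch could be passed to `pr_within_budget`, failing the build or simply posting a warning when the budget is exceeded.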

How Should You Prompt AI Like a Senior Engineer?

We have found that assigning AI a clear engineering role with explicit constraints greatly improves structural correctness and adherence to core principles like SOLID. It transforms outputs from generic scaffolding into maintainable, production-ready suggestions.

In practice, we structure prompts with a repeatable formula:

- Role definition

- System context

- Constraints, including Secure Coding Practices

- Edge case requirements

- Output format expectations

For example, we instruct AI to act as a senior backend engineer, reviewing for database schema stability, fault tolerance, and scalable code patterns.
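One way to keep that formula repeatable is to encode it as a small template rather than retyping it per task. The sketch below is an assumption about how such a template might look, not a prescribed format. `PromptSpec`, its field names, and the default output format are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """The five prompt parts, kept in a fixed order."""
    role: str
    system_context: str
    constraints: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    output_format: str = "Unified diff only, no commentary."

    def render(self) -> str:
        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items) or "- (none specified)"

        return "\n\n".join([
            f"Role: {self.role}",
            f"System context: {self.system_context}",
            "Constraints:\n" + bullets(self.constraints),
            "Edge cases to handle:\n" + bullets(self.edge_cases),
            f"Output format: {self.output_format}",
        ])


# Example usage with illustrative values.
spec = PromptSpec(
    role="Senior backend engineer",
    system_context="Orders service backed by a relational database",
    constraints=["Validate all inputs", "Do not change the database schema"],
    edge_cases=["Empty cart", "Duplicate request retried by the client"],
)
prompt_text = spec.render()
```

Because an empty section renders as "(none specified)", a reviewer can see at a glance when a prompt was sent without constraints or edge cases.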

Insights from IBM Think indicate:

“AI code review tools often provide suggestions or even automated fixes, helping developers save time and improve code quality.”

Targeted follow-ups refine outputs further:

- Evaluate cyclomatic complexity

- Flag SOLID principle violations

- Check for memory leaks or performance risks

- Review error handling and exception management

When we treat AI as a junior assistant guided by senior constraints, maintainability improves. When teams treat it as an autonomous architect, technical debt often goes unnoticed. In our Secure Coding Practices sessions, we emphasize prompting discipline as the difference between AI accelerating productivity safely versus introducing hidden risks.

Why Are Tests Non-Negotiable in AI-Assisted Development?

We have learned from hands-on experience that AI-generated code can look correct but still introduce hidden defects.

Applying AI refinement through critique, revision, and verification cycles helps surface these failures early. Confidence alone isn’t enough: post-release bugs are expensive and often complicated to fix. That’s why testing must be non-negotiable in any AI-assisted workflow. Treat AI output like a junior developer draft: fast, iterative, but never final without validation.

Our workflow enforces discipline at every stage:

- Generate feature code – AI produces an initial implementation

- Create unit tests – automated suites for all generated code

- Manual review – humans verify tests, edge cases, and constraints

- Integration testing – confirm modules interact correctly

- Code coverage validation – ensure critical paths are tested

Humans complement AI in areas it cannot reliably handle:

- Edge cases: AI often misses rare scenarios; humans validate

- Security: AI may patch superficially; humans enforce Secure Coding Practices

- Performance: AI assumes efficiency; humans benchmark and stress test
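To make the edge-case point concrete, here is a small illustration using Python's standard `unittest` module. The helper `average_latency_ms` is a hypothetical stand-in for an AI-drafted function. The assumption is that the first draft handled only the typical path and that the two guards were added after review.

```python
import unittest


def average_latency_ms(samples: list[float]) -> float:
    """Hypothetical AI-drafted helper; both guards were added during human review."""
    if not samples:
        raise ValueError("samples must not be empty")
    if any(s < 0 for s in samples):
        raise ValueError("latency cannot be negative")
    return sum(samples) / len(samples)


class AverageLatencyTests(unittest.TestCase):
    def test_typical_input(self):
        self.assertAlmostEqual(average_latency_ms([10.0, 20.0, 30.0]), 20.0)

    def test_empty_input_is_rejected(self):
        # Without the guard this would surface as a ZeroDivisionError in production.
        with self.assertRaises(ValueError):
            average_latency_ms([])

    def test_negative_sample_is_rejected(self):
        with self.assertRaises(ValueError):
            average_latency_ms([5.0, -1.0])
```

Saved in a test module, these cases run with `python -m unittest`. Only the first test describes the happy path; the other two encode decisions a human made about what the function must refuse.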

Dynamic analysis, static scans, and automated CI catch surface errors, but only human oversight guarantees intent, architecture, and compliance. In our Secure Coding Practices bootcamp, this approach consistently reduces post-deployment defects and ensures AI accelerates development without sacrificing reliability.

Testing transforms AI-generated code from a suggestion into production-ready, maintainable software.

How Do Linters, Formatters, and CI Pipelines Protect Quality?

In our experience, automated tooling acts as a first line of defense for code quality. In more advanced workflows, these tools operate as enforcement layers that prevent drift before humans even review changes.

Linters and formatters enforce consistent style, reduce drift across teams, and highlight violations before they reach production. CI pipelines extend this by verifying every commit against defined standards, preventing regressions and ensuring reliability across the codebase.

We rely on automation to handle:

- Code duplication reduction – catching repeated logic early

- Naming conventions consistency – keeping modules readable and maintainable

- Code complexity metrics – tracking maintainability indicators like Halstead metrics

- Security vulnerability scanning – identifying risks before deployment

- Deployment safety checks – ensuring builds pass before merging

Humans still provide judgment. We review architectural decisions, evaluate edge cases, and confirm that AI-generated changes adhere to Secure Coding Practices. Automation catches surface-level errors quickly, but combining it with human oversight transforms code quality from a guideline into a reliable, enforceable standard.

This hybrid approach keeps teams productive, reduces technical debt, and ensures AI-assisted code meets enterprise-grade expectations.

What Is a Layered Review Model for AI Code?


A layered review model helps teams manage AI-generated code efficiently by separating automated checks from domain-level evaluation. In our experience, this structure reduces cognitive overload while ensuring architectural and business integrity remain intact.

Layer 1: Automated Checks

- Formatting and linting with AI

- Unit test execution for AI-generated code

- Static and dynamic analysis

Layer 2: AI Code Review

- Detect code smells automatically

- Suggest refactoring patterns

- Improve readability and maintainability

Layer 3: Human Domain Review

- Validate business logic against requirements

- Ensure Secure Coding Practices are followed

- Check architecture alignment across services and microservices
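The ordering of the layers matters: a change should reach a human only after the cheaper layers have passed. The sketch below models that short-circuit behavior under simplifying assumptions. Each check is a plain function over the diff text, and `Layer`, `review`, and the status strings are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable

Check = Callable[[str], bool]  # takes a diff, returns True when the check passes


@dataclass
class Layer:
    name: str
    checks: dict[str, Check]


def review(diff: str, layers: list[Layer]) -> tuple[str, list[str]]:
    """Run layers in order and stop at the first layer with a failing check.

    Returns (status, failures). Status is "needs_human_review" when every
    automated layer passed, or "blocked_at:<layer>" otherwise.
    """
    for layer in layers:
        failures = [name for name, check in layer.checks.items() if not check(diff)]
        if failures:
            return f"blocked_at:{layer.name}", failures
    return "needs_human_review", []


# Example wiring with toy checks standing in for real tools.
layers = [
    Layer("automated", {"no_todo": lambda d: "TODO" not in d}),
    Layer("ai_review", {"short": lambda d: len(d.splitlines()) <= 300}),
]
```

Note that a fully passing result is "needs_human_review", not "approved": in this model the automated layers can only block a change, and approval stays with the human domain review.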

We have observed that when teams adopt this approach, human reviewers can focus on higher-value concerns, like integration risks, schema stability, and system scalability, while AI handles repetitive checks. Layering also encourages accountability.

Engineers understand what is automated versus what requires judgment, making AI-assisted workflows more predictable and safer. This combination of automation and human oversight protects both speed and depth, ensuring AI code contributions remain reliable and production-ready.

FAQ

How can we use AI without lowering code quality standards?

Many teams worry that automation will reduce standards. In practice, we treat AI code generation best practices as guardrails that define how prompts are written, reviewed, and validated.

We combine AI code review, AI-assisted linting, and static analysis tools with clearly defined code quality metrics. Human-AI collaboration works best when developers actively verify logic, enforce naming conventions, and continuously evaluate maintainability.

What processes help measure AI-driven code quality effectively?

You need objective, measurable indicators. We rely on code complexity metrics such as cyclomatic complexity, Halstead metrics, and the maintainability index to quantify structural quality.

Teams also monitor code coverage and enforce code quality checks in continuous integration on every build. Code quality dashboards surface detected code smells and support proactive tracking of technical debt.
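For readers who have not seen these metrics computed, here is a minimal sketch. It uses the standard Halstead volume definition and the classic maintainability index formula, rescaled to 0–100 as some tools do. Tools differ in the exact variant they report, so the numbers below may not match your dashboard. The function names are hypothetical.

```python
import math


def halstead_volume(distinct_operators: int, distinct_operands: int,
                    total_operators: int, total_operands: int) -> float:
    """Halstead volume: program length times log2 of vocabulary size."""
    vocabulary = distinct_operators + distinct_operands
    length = total_operators + total_operands
    return length * math.log2(vocabulary)


def maintainability_index(volume: float, complexity: int, loc: int) -> float:
    """Classic MI formula, clamped and rescaled to the 0-100 range."""
    raw = (171
           - 5.2 * math.log(volume)
           - 0.23 * complexity
           - 16.2 * math.log(loc))
    return max(0.0, raw * 100 / 171)
```

Higher values indicate code that should be easier to maintain. The useful signal is usually the trend across commits rather than any single absolute score.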

How do we keep AI-generated code maintainable long term?

Maintainability requires intentional design and consistent review. Apply the SOLID, DRY, and KISS principles during AI-assisted refactoring to avoid unnecessary complexity.

Encourage modular code and consistent implementation of design patterns across services. Documentation and inline comments must explain decisions and trade-offs. Regular debt reduction initiatives and structured refactoring patterns preserve long-term clarity.

How can AI improve testing without replacing human judgment?

AI should strengthen structured testing practices. Teams implement test-driven development workflows supported by automated testing, using AI-generated unit test cases to expand coverage.

Integration testing validates interactions between modules, while explicit edge case handling increases resilience. Security vulnerability scanning and dynamic analysis add runtime validation. Developers still review business-critical logic before release.

What safeguards prevent hidden risks in AI-generated code?

Risk control requires layered verification. We conduct security vulnerability scanning, performance reviews, and memory leak detection during pre-release validation.

Strong error handling strategies and thorough reviews of exception management reduce runtime instability. Pull request automation integrated with version control enforces policy checks. Defined rollback strategies and structured deployment safety procedures protect production systems from regressions.

Maintaining High Code Quality with AI in the Long Term

Maintaining high code quality with AI requires disciplined scope control, Secure Coding Practices, layered reviews, and rigorous testing.

We’ve seen that embedding AI within structured workflows, rather than replacing human judgment, delivers speed without sacrificing stability. Enforcing security, scalability, and compliance from the start prevents hidden technical debt. Teams scaling AI should start small, test aggressively, and build layered oversight into every sprint.


References

- https://github.blog/ai-and-ml/generative-ai/speed-is-nothing-without-control-how-to-keep-quality-high-in-the-ai-era/

- https://www.ibm.com/think/insights/ai-code-review