Custom AI coding agents are autonomous systems that plan, write, debug, and improve code using tools, memory, and structured reasoning. Building one takes more than wrapping a chatbot around a language model.

These agents execute code, refactor repositories, call APIs, and handle errors automatically. What sets them apart from a chatbot is how they integrate tools, reflection, and state management.

This guide walks through every step, from designing the architecture to deploying agents in real projects, and shows what makes them autonomous. Keep reading to learn how to create your own AI coding agents.

Quick Wins – Building Reliable Custom Coding Agents

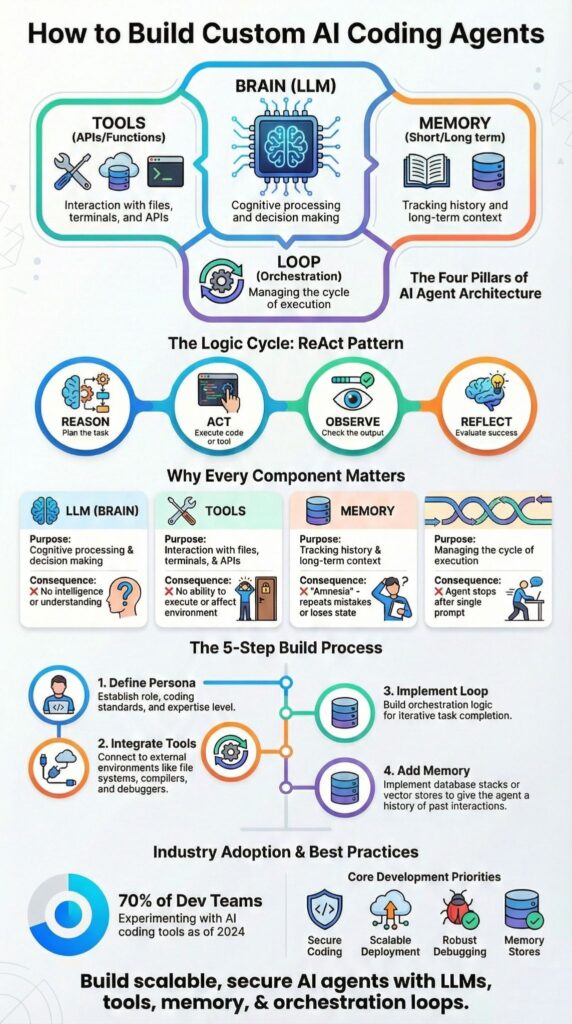

- Building custom coding agents requires LLM reasoning, tools, memory, and orchestration loops working together.

- Structured workflows such as the ReAct (reason + act) pattern reduce hallucinations and make debugging more reliable.

- Production-ready systems require Secure Coding Practices, monitoring, and scalable deployment infrastructure.

What Is a Custom AI Coding Agent and How Does It Work?

A custom AI coding agent is an autonomous system powered by an LLM like GPT-4o that plans, writes, debugs, and iterates on code using tools, memory, and reasoning loops.

At the core is the “brain”: an LLM such as GPT-4o, Claude Sonnet, Gemini, or a Mistral model. We’ve seen multi-step reasoning improve significantly when tool use is required rather than optional, consistent with research from Stanford’s Human-Centered AI Institute.

As highlighted by Langflow:

“Most developers have at least tried developing with an AI coding agent, but not many have built their own.” – Langflow

We use vector stores like FAISS, ChromaDB, or Pinecone so agents can search large codebases without losing context.
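To make codebase search concrete, here is a minimal, dependency-free sketch of similarity retrieval over code chunks. It assumes a toy bag-of-words "embedding" as a stand-in for the dense vectors a real embedding model would produce and FAISS, ChromaDB, or Pinecone would store; `CodeIndex`, `embed`, and the 20-line chunk size are hypothetical choices for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": word counts, with identifiers split on non-letters
    # (parse_config -> parse, config). Real systems use a neural embedding model.
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class CodeIndex:
    """Stores (location, chunk, vector) triples; a stand-in for a vector store."""

    def __init__(self, lines_per_chunk: int = 20):
        self.lines_per_chunk = lines_per_chunk
        self.entries = []

    def add_file(self, path: str, source: str) -> None:
        lines = source.splitlines()
        # Fixed-size line chunks keep each vector focused on one region of code.
        for start in range(0, len(lines), self.lines_per_chunk):
            chunk = "\n".join(lines[start:start + self.lines_per_chunk])
            self.entries.append((f"{path}:{start + 1}", chunk, embed(chunk)))

    def search(self, query: str, k: int = 3) -> list[tuple[str, float]]:
        q = embed(query)
        scored = [(loc, cosine(q, vec)) for loc, _, vec in self.entries]
        scored = [s for s in scored if s[1] > 0]  # drop chunks with no overlap
        scored.sort(key=lambda s: s[1], reverse=True)
        return scored[:k]


index = CodeIndex()
index.add_file("db.py", "def connect_database(url):\n    return open_connection(url)\n")
index.add_file("api.py", "def handle_request(req):\n    return render_response(req)\n")
print(index.search("database connection"))  # only the db.py chunk matches
```

The agent would pass the returned locations (file and starting line) back into its context instead of the whole repository, which is what keeps large codebases searchable without overflowing the prompt.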

A typical architecture includes:

- Brain: handles reasoning and task decomposition

- Tools: for file edits, API calls, and automation

- Memory: short-term buffers and long-term vector storage

- Loop: cycles of planning, execution, and reflection

When these pieces work together, you get autonomous code generators capable of full-stack builds or safe refactors of large projects, while you keep control over their outputs.

What Core Components Do You Need to Build One?

To build a functional AI coding agent, you need four main components: an LLM, tool integrations, memory, and an orchestration loop.

When teams experiment with vibe coding, these components become even more important because loosely scoped prompts can quickly amplify errors without strong memory and execution boundaries.

- LLM: serves as the reasoning engine. Larger models such as GPT-4o handle multi-step debugging better than smaller local LLMs.

- Tools: handle real-world actions such as code execution, Git integration, testing, and API calls.

- Memory: keeps the agent aware of context. We use Redis for session memory and Pinecone or FAISS for long-term retrieval.

- Loop: the orchestration cycle (reason, act, observe, reflect) that enables autonomy and self-debugging.

| Component | Purpose | Failure Without It |
| --- | --- | --- |
| LLM | Reasoning and planning | Shallow outputs |
| Tools | Code execution and file changes | No real-world action |
| Memory | Context and codebase awareness | Repetition and drift |
| Loop | Iterative autonomy | Single-step replies |
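The short-term/long-term memory split can be sketched in a few lines. This version assumes a fixed-size in-process buffer where Redis would hold session memory in production, and a plain list with word-overlap recall standing in for a Pinecone or FAISS index; the `AgentMemory` class and its methods are hypothetical.

```python
from collections import deque


class AgentMemory:
    """Recent notes in a bounded buffer; older notes archived for retrieval."""

    def __init__(self, short_term_size: int = 4):
        self.short_term = deque(maxlen=short_term_size)
        self.long_term: list[str] = []

    def remember(self, note: str) -> None:
        # When the buffer is full, archive the oldest note before the deque drops it.
        if len(self.short_term) == self.short_term.maxlen:
            self.long_term.append(self.short_term[0])
        self.short_term.append(note)

    def recall(self, query: str, k: int = 2) -> list[str]:
        # Rank archived notes by shared words (stand-in for embedding similarity).
        words = set(query.lower().split())
        scored = [(len(words & set(n.lower().split())), n) for n in self.long_term]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [note for _, note in scored[:k]]

    def context(self, query: str) -> str:
        # Prompt context = relevant archived notes followed by the recent window.
        return "\n".join(self.recall(query) + list(self.short_term))


mem = AgentMemory(short_term_size=2)
mem.remember("renamed UserService to AccountService")
mem.remember("tests pass after rename")
mem.remember("started refactor of billing module")
print(mem.recall("where is AccountService"))
```

Without the archive step, the rename note would simply fall out of the window, which is the "repetition and drift" failure listed in the table.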

We always prioritize Secure Coding Practices at this stage. That means sandboxed execution, input validation, and strict permission boundaries before expanding capabilities.
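Here is one way these three guardrails might look in code, as a sketch: a tool allowlist for permission boundaries, a size check as basic input validation, and a separate interpreter with a timeout for execution. `ALLOWED_TOOLS`, `MAX_CODE_CHARS`, and `run_python` are hypothetical names with illustrative limits. A child process alone is not a real sandbox; production setups typically add containers or VMs with no network access and a restricted filesystem.

```python
import subprocess
import sys

# Hypothetical permission boundary: the only tools this agent may call.
ALLOWED_TOOLS = {"run_python", "read_file"}
MAX_CODE_CHARS = 10_000  # illustrative input-size limit


class PermissionDenied(Exception):
    pass


def check_permission(tool_name: str) -> None:
    if tool_name not in ALLOWED_TOOLS:
        raise PermissionDenied(f"tool {tool_name!r} is not allowed")


def run_python(code: str, timeout_s: float = 5.0) -> dict:
    """Run agent-written code in a separate interpreter with a time limit.

    A child process is NOT a full sandbox: it can still reach the
    filesystem and network. Use containers or VMs for real isolation.
    """
    check_permission("run_python")
    if len(code) > MAX_CODE_CHARS:
        raise ValueError("code exceeds size limit")
    try:
        proc = subprocess.run(
            # -I: isolated mode, ignores PYTHON* env vars and user site-packages
            [sys.executable, "-I", "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child before raising TimeoutExpired.
        return {"ok": False, "stdout": "", "stderr": f"timed out after {timeout_s}s"}
    return {"ok": proc.returncode == 0, "stdout": proc.stdout, "stderr": proc.stderr}


print(run_python("print(2 + 3)"))
```

Returning a result dictionary instead of raising on failure lets the agent observe the error and react to it in its loop.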

How Do You Build a Custom AI Coding Agent Step by Step?

Define the agent’s persona, integrate tools, implement a reasoning loop, add memory, then deploy and monitor performance.

First, define the persona and goals. For example, a system prompt might cast the agent as a senior backend API engineer focused on performance and Secure Coding Practices.

Second, integrate tools. Add tools for file editing, subprocess execution, Git integration, and API orchestration. Tool-calling (function-calling) LLMs invoke these capabilities directly by emitting structured outputs that your code then executes.
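Function calling generally comes down to the model emitting a structured request (a tool name plus arguments) that your code validates before executing. This sketch assumes the model's output arrives as a JSON string shaped like `{"tool": ..., "args": {...}}`; that shape, the `register` decorator, and the two example tools are hypothetical, and each real provider API defines its own tool-call format.

```python
import inspect
import json
from typing import Callable

TOOLS: dict[str, Callable] = {}


def register(fn: Callable) -> Callable:
    """Decorator that exposes a function to the model under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@register
def count_lines(text: str) -> int:
    return len(text.splitlines())


@register
def replace_text(source: str, old: str, new: str) -> str:
    return source.replace(old, new)


def dispatch(raw: str) -> dict:
    """Validate and execute one tool call emitted by the model as JSON."""
    try:
        call = json.loads(raw)
        name, args = call["tool"], call.get("args", {})
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        return {"error": f"malformed tool call: {exc}"}
    if not isinstance(name, str) or name not in TOOLS:
        return {"error": f"unknown tool {name!r}"}
    try:
        # Checks argument names and arity (not types) before running anything.
        inspect.signature(TOOLS[name]).bind(**args)
    except TypeError as exc:
        return {"error": f"bad arguments for {name}: {exc}"}
    return {"result": TOOLS[name](**args)}


print(dispatch('{"tool": "count_lines", "args": {"text": "a\\nb"}}'))  # {'result': 2}
```

Errors come back as data rather than exceptions, so a malformed or hallucinated call becomes an observation the model can correct on its next step.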

Third, implement the loop. The agent reasons, executes code, observes the output, and reflects on the result before its next step. This structure is what enables self-debugging agents and code review bots.
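The loop itself can stay small. This sketch assumes a `call_llm` function that returns either a tool request or a final answer; here it is a scripted fake so the example runs offline. The message format, the step limit, the persona prompt, and the `run_tests`/`apply_fix` tools are hypothetical simplifications, so swap in a real model client and real tools to use it.

```python
from typing import Callable

SYSTEM_PROMPT = (
    "You are a senior backend API engineer focused on performance and secure "
    "coding. Run the tests before giving a final answer."
)


def react_loop(task: str,
               call_llm: Callable[[list[dict]], dict],
               tools: dict[str, Callable[[], str]],
               max_steps: int = 6) -> str:
    messages = [{"role": "system", "content": SYSTEM_PROMPT},
                {"role": "user", "content": task}]
    for _ in range(max_steps):
        decision = call_llm(messages)  # reason: choose the next action
        if "final" in decision:
            return decision["final"]
        tool = tools.get(decision["tool"])
        # act: run the chosen tool, or report that it does not exist
        observation = tool() if tool else f"FAIL: unknown tool {decision['tool']}"
        messages.append({"role": "assistant", "content": f"call {decision['tool']}"})
        messages.append({"role": "tool", "content": observation})  # observe
        if observation.startswith("FAIL"):
            # reflect: ask the model to diagnose before its next action
            messages.append({"role": "user",
                             "content": "That step failed. Explain why, then fix it."})
    return "stopped: step limit reached"


def scripted_llm(decisions: list[dict]) -> Callable[[list[dict]], dict]:
    """Stand-in for a real model client: replays a fixed list of decisions."""
    it = iter(decisions)
    return lambda messages: next(it)


repo = {"fixed": False}


def run_tests() -> str:
    return "PASS" if repo["fixed"] else "FAIL: test_pagination"


def apply_fix() -> str:
    repo["fixed"] = True
    return "patched pagination.py"


llm = scripted_llm([
    {"tool": "run_tests"},
    {"tool": "apply_fix"},
    {"tool": "run_tests"},
    {"final": "Fixed the off-by-one in pagination; tests pass."},
])
answer = react_loop("Fix the failing pagination test", llm,
                    {"run_tests": run_tests, "apply_fix": apply_fix})
print(answer)
```

The step limit matters as much as the loop: without it, an agent that keeps failing would retry forever.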

Insights from Monday.com indicate:

“Custom agents beat generic tools: building your own agents gives you tighter control over data access, behavior, and integrations with existing systems.” – Monday.com

Before moving forward, we often separate responsibilities using hierarchical agent teams.

- A supervisor agent coordinates tasks

- Sub-agent delegation assigns work to specialists such as frontend code agents or backend API coders

- Database schema agents manage migrations

- Testing suite generators validate outputs
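A supervisor can start as a simple router that hands each subtask to the sub-agent registered for its role. In this sketch the plan is written by hand and each sub-agent is a plain function; in a real system both the planning and the sub-agents would be LLM calls. The `Supervisor` and `Subtask` classes and the role names are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Subtask:
    role: str
    description: str


@dataclass
class Supervisor:
    agents: dict[str, Callable[[str], str]]
    log: list[str] = field(default_factory=list)

    def run(self, plan: list[Subtask]) -> list[str]:
        results = []
        for task in plan:
            agent = self.agents.get(task.role)
            if agent is None:
                # No registered sub-agent for this role: escalate instead of guessing.
                self.log.append(f"escalated: no agent for role {task.role!r}")
                continue
            output = agent(task.description)
            self.log.append(f"{task.role}: {output}")
            results.append(output)
        return results


supervisor = Supervisor(agents={
    "database": lambda d: f"wrote migration ({d})",
    "backend": lambda d: f"implemented endpoint ({d})",
    "testing": lambda d: f"generated tests ({d})",
})
plan = [
    Subtask("database", "add orders table"),
    Subtask("backend", "POST /orders"),
    Subtask("testing", "cover POST /orders"),
    Subtask("frontend", "order form"),
]
results = supervisor.run(plan)
print("\n".join(supervisor.log))
```

Ordering the plan so the schema migration runs before the endpoint and the tests mirrors how a human team would sequence the same work.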

Finally, deploy and monitor. We use tracing and evaluation tools such as LangSmith and Phoenix to track task completion rates and how often error recovery loops succeed.
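LangSmith and Phoenix each have their own tracing APIs, which this sketch does not use. It only illustrates the two metrics mentioned above with a hypothetical in-memory tracker: the share of tasks completed, and, among runs that hit at least one failed step, the share that still finished.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class RunRecord:
    steps: list[bool] = field(default_factory=list)  # True = step succeeded
    completed: bool = False


class AgentMetrics:
    """Hypothetical in-memory tracker; a tracing backend would persist these."""

    def __init__(self):
        self.runs: dict[str, RunRecord] = {}

    def record_step(self, task_id: str, ok: bool) -> None:
        self.runs.setdefault(task_id, RunRecord()).steps.append(ok)

    def finish(self, task_id: str, completed: bool) -> None:
        self.runs.setdefault(task_id, RunRecord()).completed = completed

    def completion_rate(self) -> float:
        if not self.runs:
            return 0.0
        return sum(r.completed for r in self.runs.values()) / len(self.runs)

    def recovery_rate(self) -> Optional[float]:
        # Of the runs that hit at least one failed step, how many still completed?
        hit_errors = [r for r in self.runs.values() if not all(r.steps)]
        if not hit_errors:
            return None  # no failures recorded yet
        return sum(r.completed for r in hit_errors) / len(hit_errors)


m = AgentMetrics()
m.record_step("t1", True)
m.finish("t1", True)
m.record_step("t2", False)
m.record_step("t2", True)
m.finish("t2", True)
m.record_step("t3", False)
m.finish("t3", False)
print(m.completion_rate(), m.recovery_rate())
```

Tracking recovery separately from completion shows whether the agent is succeeding on the first try or only after its error recovery loop kicks in.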

Which Framework Should You Use for Building AI Coding Agents?

We usually tell our bootcamp students to choose based on flexibility and scale.

As systems grow, advanced workflows help teams coordinate multi-agent roles, manage orchestration logic, and keep framework choices aligned with long-term maintainability. CrewAI is well suited to multi-agent setups and supervisor patterns. In our experience, it handles hierarchical teams well but limits some low-level control. It is ideal for:

- Coordinating multiple roles in larger projects

- Startup automation where speed matters

- Multi-agent orchestration without heavy infrastructure

For full control, custom Python setups with LangChain agents or LlamaIndex tools work best. We’ve used these for production systems where granular orchestration and precise debugging matter. They require more boilerplate but let you:

- Customize task flows

- Integrate complex tools

- Debug step by step

| Framework | Pros | Cons | Best For |
| --- | --- | --- | --- |
| CrewAI | Multi-agent orchestration | Less granular tuning | Startup automation |
| Custom Python | Full flexibility | More boilerplate | Production systems |
| No-code builders | Fast prototypes | Limited complexity | MVP experiments |
| OpenAI Assistants API | Built-in RAG and hosting | Less infra control | Rapid deployment |

Hosted APIs like the OpenAI Assistants API simplify deployment. In our labs, function-calling agents with built-in vector storage and managed LLMs let us spin up prototypes quickly.

What Are Common Pitfalls When Building Coding Agents?

In our experience running secure development bootcamps, one of the biggest mistakes is relying on vague prompts. Generic system prompts often lead agents to hallucinate functions that don't exist.

Without enforced tool use, agents may skip verification steps, and SWE-bench evaluations show that unstructured outputs sharply reduce execution success rates.
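One way to stop an agent from skipping verification is to check its trajectory before accepting a final answer. This hypothetical gate accepts an answer only if a test run happened after the last file edit and that run passed; the tool names `edit_file` and `run_tests` and the step-record format are assumptions.

```python
def verify_trajectory(steps: list[dict]) -> tuple[bool, str]:
    """Accept a final answer only if tests ran, and passed, after the last edit.

    steps: ordered records like {"tool": "edit_file", "ok": True}.
    """
    edit_positions = [i for i, s in enumerate(steps) if s["tool"] == "edit_file"]
    after_last_edit = steps[edit_positions[-1] + 1:] if edit_positions else steps
    test_runs = [s for s in after_last_edit if s["tool"] == "run_tests"]
    if not test_runs:
        return False, "rejected: no test run after the last edit"
    if not test_runs[-1]["ok"]:
        return False, "rejected: the latest test run failed"
    return True, "accepted"


edit = {"tool": "edit_file", "ok": True}
print(verify_trajectory([edit, {"tool": "run_tests", "ok": True}]))
print(verify_trajectory([{"tool": "run_tests", "ok": True}, edit]))
```

When the gate rejects an answer, the reason string can be fed back to the agent as an observation, so the only way to finish is to actually run the tests.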

Memory management is another frequent challenge. We’ve seen agents lose context or repeat logic during large refactors when vector store integration is missing. A proper memory setup, with short-term buffers plus long-term vector stores, is essential for consistent performance.

Common risks include:

- Missing reflection mechanisms

- No error recovery loops

- Insufficient sandbox isolation

- Lack of Secure Coding Practices
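Error recovery loops and reflection can share one mechanism: when a step fails, capture the error and hand it back to the generator for the next attempt. In this sketch `generate` and `check` are placeholders for an LLM call and a test run, the syntax check via Python's built-in `compile()` is just one cheap example of a check, and the three-attempt limit is arbitrary.

```python
from typing import Callable, Optional


def solve_with_recovery(
    generate: Callable[[Optional[str]], str],
    check: Callable[[str], Optional[str]],
    max_attempts: int = 3,
) -> tuple[Optional[str], list[str]]:
    """Generate, check, and retry with the error fed back as context."""
    feedback: Optional[str] = None
    errors: list[str] = []
    for _ in range(max_attempts):
        candidate = generate(feedback)  # feedback is None on the first attempt
        error = check(candidate)        # None means the candidate passed
        if error is None:
            return candidate, errors
        errors.append(error)
        # Reflection input for the next attempt.
        feedback = f"Previous attempt failed with {error}. Revise the code."
    return None, errors  # out of attempts: escalate to a human


def syntax_check(code: str) -> Optional[str]:
    try:
        compile(code, "<candidate>", "exec")  # parses only, does not run the code
        return None
    except SyntaxError as exc:
        return f"SyntaxError: {exc.msg}"


# Hypothetical generator that succeeds on its second draft; a real one
# would include `feedback` in the LLM prompt.
drafts = iter([
    "def area(r) return 3.14 * r * r",
    "def area(r):\n    return 3.14 * r * r\n",
])
code, errors = solve_with_recovery(lambda feedback: next(drafts), syntax_check)
print(errors)
print(code)
```

Returning `None` after the last attempt, rather than the last broken draft, forces the caller to escalate instead of shipping unverified code.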

We once observed an agent modify 12 files correctly but fail during deployment because dependency validation was overlooked. That experience shaped our approach.

Now, we always include testing suite generators and CI/CD pipeline agents before approving any final outputs. These steps help agents work autonomously without compromising security or reliability, and they give students a practical, safe environment to experiment and learn.
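The dependency check that was missing in that incident can be automated cheaply. This sketch parses each modified Python file with the standard `ast` module, collects the top-level package of every absolute import, and reports any that `importlib.util.find_spec` cannot locate in the current environment. `missing_dependencies` is a hypothetical pre-deployment gate that ignores relative imports; it complements, rather than replaces, installing from a lockfile in CI.

```python
import ast
import importlib.util


def imported_modules(source: str) -> set[str]:
    """Top-level package names from every absolute import in the source."""
    names = set()
    for node in ast.walk(ast.parse(source)):  # includes imports nested in functions
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            names.add(node.module.split(".")[0])
    return names


def missing_dependencies(files: dict[str, str]) -> dict[str, list[str]]:
    """Map each changed file to the imports the current environment cannot resolve."""
    report = {}
    for path, source in files.items():
        missing = sorted(m for m in imported_modules(source)
                         if importlib.util.find_spec(m) is None)
        if missing:
            report[path] = missing
    return report


changed = {
    "app/server.py": "import json\nfrom http import server\n",
    "app/jobs.py": "import os\nimport surely_not_installed_pkg_xyz\n",
}
print(missing_dependencies(changed))
```

An empty report is a necessary condition for approval, not a sufficient one: the package may be installed locally but still missing from the deployment's requirements.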

What Real-World AI Coding Agents Already Exist?

Credits: Tech With Tim

In our bootcamps, we’ve seen several types of AI coding agents in action. Research synthesizers combine web scraping coders with SerpAPI search agents to generate reports. These systems often run on FastAPI backends with Streamlit UIs, letting agents gather and summarize information automatically.

Code review bots are another common example. They analyze pull requests, suggest diffs, and run refactoring assistants. We teach students that human-in-the-loop validation is essential: someone must check suggested changes before merging to prevent errors.
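The human-in-the-loop step can be enforced in code rather than just recommended. In this sketch the bot renders its proposed change as a unified diff with the standard `difflib` module, and the change is applied only if an approval callback returns `True`. `review_change` and the reviewer callback are hypothetical; in practice the approval would come from a pull-request review rather than a function.

```python
import difflib
from typing import Callable


def review_change(path: str, original: str, proposed: str,
                  approve: Callable[[str], bool]) -> str:
    """Return the proposed text only if a reviewer approves its diff."""
    diff = "".join(difflib.unified_diff(
        original.splitlines(keepends=True),
        proposed.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    ))
    if not diff:
        return original  # nothing changed, nothing to review
    # The reviewer sees the exact diff; without approval the original is kept.
    return proposed if approve(diff) else original


old = "def total(items):\n    return sum(items)\n"
new = "def total(items):\n    return sum(items or [])\n"

seen = []


def reviewer(diff: str) -> bool:
    seen.append(diff)
    print(diff)
    return "+    return sum(items or [])" in diff


merged = review_change("cart.py", old, new, reviewer)
```

Because the default outcome is "keep the original", a reviewer who never responds can't accidentally let a change through.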

Full-stack autonomous app builders are more complex. We’ve observed that structured, multi-agent workflows consistently outperform single-shot LLM outputs in complex debugging tasks, as confirmed by LiveCodeBench metrics.

Emergent patterns we encounter in practice include:

- Hierarchical agent teams

- Memory-augmented agents

- State machine agents and finite-state coders

- Reinforcement learning agents for optimization
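For state machine agents, the idea is that the agent may only move between explicitly allowed states, which makes runs easier to trace and stops it from, for example, declaring a task done without a test step. This is a minimal sketch with hypothetical state names and transitions; in a real agent each transition would be triggered by an LLM decision or a tool result.

```python
from enum import Enum, auto


class State(Enum):
    PLAN = auto()
    CODE = auto()
    TEST = auto()
    REFLECT = auto()
    DONE = auto()


# Allowed moves; anything else is rejected.
TRANSITIONS: dict[State, set[State]] = {
    State.PLAN: {State.CODE},
    State.CODE: {State.TEST},
    State.TEST: {State.DONE, State.REFLECT},  # pass -> DONE, fail -> REFLECT
    State.REFLECT: {State.CODE, State.PLAN},  # retry the code or re-plan
    State.DONE: set(),
}


class CodingFSM:
    def __init__(self):
        self.state = State.PLAN
        self.history = [State.PLAN]

    def advance(self, target: State) -> None:
        if target not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state.name} -> {target.name}")
        self.state = target
        self.history.append(target)


fsm = CodingFSM()
for step in [State.CODE, State.TEST, State.REFLECT, State.CODE, State.TEST, State.DONE]:
    fsm.advance(step)
print(" -> ".join(s.name for s in fsm.history))
```

The recorded history doubles as an audit trail, which is useful when a multi-agent run needs to be debugged after the fact.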

As these frameworks mature, scalable agent clusters and serverless agent functions are becoming standard. In our teaching, we focus on safe, controlled setups so students can experiment with multi-agent systems without risking production environments.

FAQ

How do custom coding agents differ from standard AI agent development approaches?

Custom coding agents are designed for specific engineering workflows instead of general conversation.

They apply task decomposition, planning-execution cycles, and agentic workflows to handle coding tasks end to end. Unlike basic AI agents, they can run code execution loops, review results, apply reflection mechanisms, and iteratively improve outputs until the solution meets technical requirements.

What makes autonomous code generators reliable for real development work?

Autonomous code generators become reliable when they include self-debugging agents, structured error recovery loops, and human-in-the-loop review. These systems validate outputs, detect failures, and retry with improved logic.

Adding code review bots and evaluation frameworks helps ensure the generated code follows best practices, stays readable, and carries less risk of hidden functional or security issues.

How do memory-augmented agents improve long coding tasks?

Memory-augmented agents store previous decisions, code changes, and context across sessions.

With vector store integration and RAG-enabled coders, they can retrieve relevant past information when solving new problems. This capability prevents repeated work, supports long-running projects, and helps maintain consistency across complex systems with many connected components.

Why are planning and delegation important in agentic coding systems?

Planning and delegation allow agentic systems to manage complexity effectively. Planning-execution cycles define clear steps, while hierarchical agent teams enable sub-agent delegation for focused tasks.

This structure mirrors real engineering teams, reduces conflicting actions, and allows custom coding agents to handle large codebases without losing direction or producing fragmented solutions.

What is required to make production-ready AI coding agents scale safely?

Production-ready agents require clear state management, monitoring, and async agent execution.

Scalable agent clusters depend on evaluation frameworks, logging, and controlled permissions. Security audit agents and CI/CD pipeline agents help validate every change, ensuring reliability, traceability, and safe deployment as systems grow in size and operational complexity.

How to Build Custom AI Coding Agents That Scale and Stay Secure

Building custom AI coding agents requires more than connecting an LLM to a tool. From our experience, it demands structured planning-execution cycles, memory-augmented retrieval, reflection mechanisms, and strict Secure Coding Practices.

Autonomy without safeguards becomes a liability, so sandbox policies, API permissions, and evaluation loops are essential. Start small, validate each loop, and expand infrastructure only after reliability is proven.

Start building your custom AI coding agent system today.

References

- https://www.langflow.org/blog/ai-coding-agent-langflow

- https://monday.com/blog/ai-agents/how-to-build-ai-agents-for-beginners/