
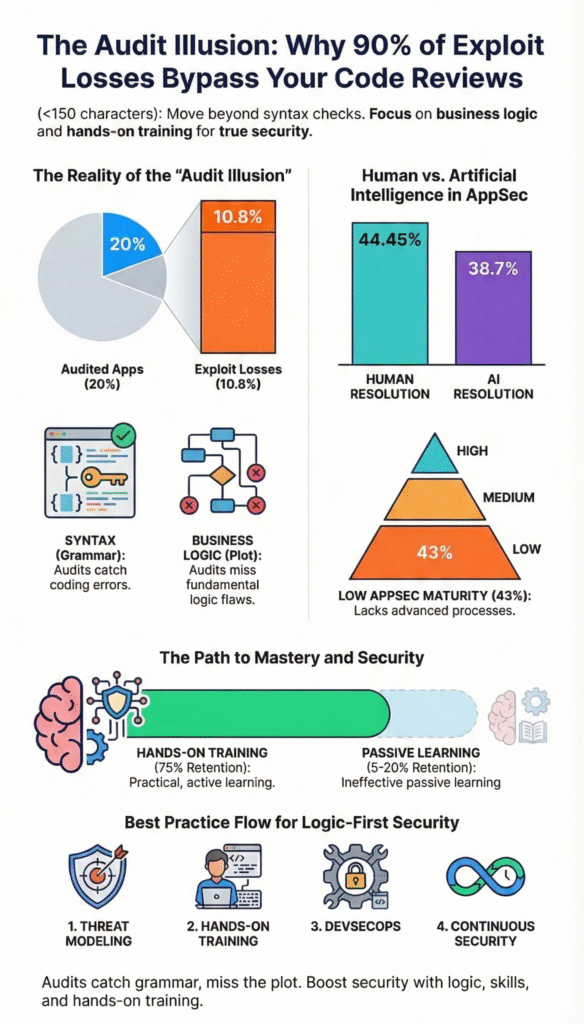

A recent analysis of $10.77 billion in application security breaches found a startling pattern: only 20% of exploited applications had undergone a professional security audit. Those audited applications accounted for just 10.8% of the total value lost.

The data seems to suggest audits work. But a deeper look reveals a systemic blind spot where traditional reviews fail catastrophically. The failures point not to bad code, but to a fundamental misunderstanding of how systems operate as businesses.

This isn’t about syntax; it’s about logic, process, and a growing gap between what we can automate and what we must understand. Keep reading to see why your current security strategy might be missing the real target.

AppSec Reality Check: Where Automation Falls Short

Three findings show how gaps in tools, maturity, and skills combine to shape today’s real application security risks.

- Automation Creates a “Review Gap”: AI-powered tools speed up cycles, but only 38.7% of their review suggestions resolve the underlying issue, against 44.45% for human reviewers.

- Low Maturity is High Risk: 43% of organizations operate at the lowest AppSec maturity level, creating widespread exposure to preventable breaches.

- Hands-On Training Closes the Gap: Practice-based learning achieves up to 75% knowledge retention, directly combating the skills deficiencies linked to 82% of breaches.

The Grammar Check That Misses the Plot

The numbers from that $10.77 billion analysis tell a clear story: audits reduce losses. But the story within the story is more troubling. Look at Euler Finance, a protocol reviewed by six different firms across ten audit engagements before a $197 million exploit.

The exploited function was only in scope for one of those engagements. The code was syntactically correct. The flaw was in its interaction with the lending mechanism, a business process invisible to a standard code-level review.

This is the audit illusion. We check the grammar of the system but miss the plot.

It’s a pattern repeated across post-audit breaches. Audited protocols like Merlin DEX, Swaprum, and Arbix Finance were exploited through admin privilege abuse, risks often relegated to “informational findings” in a report, not critical business threats.

Other massive losses, like the $1.46 billion Bybit breach attributed to Lazarus Group, came from a compromised developer workstation, an operational attack surface completely outside the scope of a smart contract audit.

Sometimes, the code itself changed after the review, as in the $190 million Nomad Bridge exploit, where only 18.6% of the critical contract matched the audited version.
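One way to catch this kind of drift is to pin what was audited and compare it with what actually ships. The sketch below is a minimal illustration in Python, not the method used to measure the Nomad figure: `fingerprint`, `unaudited_artifacts`, the manifest layout, and the artifact names are all hypothetical, and it assumes you can obtain both the exact bytes that were reviewed and the exact bytes that were deployed.

```python
from __future__ import annotations

import hashlib


def fingerprint(artifact: bytes) -> str:
    """Return the SHA-256 hex digest of a build artifact."""
    return hashlib.sha256(artifact).hexdigest()


def unaudited_artifacts(audited: dict[str, str], deployed: dict[str, bytes]) -> list[str]:
    """List deployed artifacts that are missing from the audit manifest
    or whose contents no longer match the audited hash."""
    return sorted(
        name
        for name, blob in deployed.items()
        if audited.get(name) != fingerprint(blob)
    )


if __name__ == "__main__":
    # Hypothetical manifest recorded when the audit was signed off.
    audited_manifest = {"Bridge": fingerprint(b"bridge bytecode as audited")}

    # Hypothetical release candidate: one contract changed, one was never reviewed.
    release = {
        "Bridge": b"bridge bytecode changed after the audit",
        "Router": b"router bytecode",
    }
    print(unaudited_artifacts(audited_manifest, release))  # ['Bridge', 'Router']
```

A check like this does not say whether the changed code is safe. It only makes the gap between "audited" and "deployed" visible before release, so someone can decide whether a re-review is needed.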

The core issue isn’t that audits are useless. They’re essential. The issue is what they’re designed to see, and what they’re not.

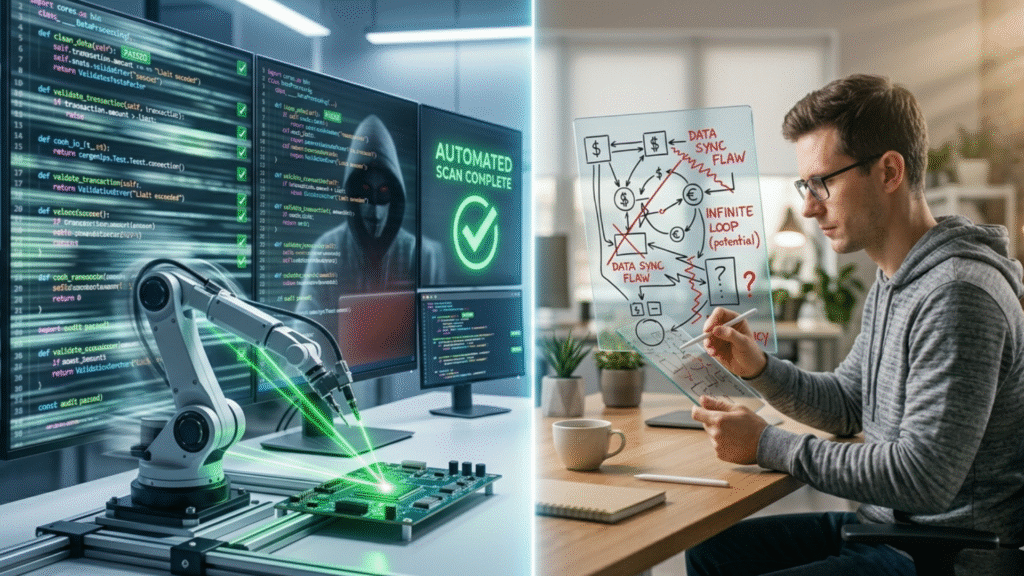

The Speed of Automation Versus the Depth of Understanding

We’re racing to automate everything. A large-scale study on AI-powered code review found it can reduce human-written comments by over 35% and cut median pull request cycle time by nearly 31%. That’s a powerful efficiency gain.

But the same study, published on arXiv, revealed a concerning gap in effectiveness. Measured by code resolution rate (how often a suggested fix actually addresses the problem), automation achieved 38.7%, while human reviewers achieved 44.45%.

That’s a difference of over 5 percentage points, meaning automation, in its current state, misses a significant portion of actionable issues a human would catch.

As Secure Coding Practices (SCP) notes:

“AI tools are incredibly effective at identifying known vulnerability patterns and speeding up development cycles, but they lack the contextual understanding required to detect business logic flaws and novel attack paths. The gap between a 38.7% and 44.4% resolution rate highlights that human judgment is still essential in secure code review, especially as ‘vibe coding’ accelerates the creation of code developers may not fully understand.” –Secure Coding Practice

This isn’t a failure of the tool. It’s a limitation of its perspective. Automated scanning and AI-assisted review excel at finding known patterns: syntax errors, common vulnerability templates, dependency conflicts.

They struggle with the novel, the contextual, and the logical: the exact terrain where the most expensive exploits occur. The struggle is sharpest when teams don’t know how to handle unexpected AI code behavior in production systems, where outputs don’t always align with intent.


According to industry analysis from Softprom, this gap is widening with the rise of “vibe coding”: by 2027, nearly 30% of all application vulnerabilities are predicted to stem from developers using AI assistants to write code they don’t fully understand.

This creates a new class of security debt that traditional tools are ill-equipped to find: code that looks right but behaves wrong in its context.

The AppSec Maturity Trap: Why Most Companies Are Vulnerable

Credits: Next LVL Programming

The pressure to move fast often means security becomes a checkpoint, not a culture. Industry data paints a stark picture of the current state: 43% of organizations remain at Level 1 AppSec maturity, the lowest possible level. The average maturity score across all organizations is just 2.2 out of 10.

This means for a large portion of the industry, application security is reactive and fragmented. It’s about scanning for known vulnerabilities after the code is written, not designing secure systems from the start.

This low-maturity environment is a perfect breeding ground for the audit illusion. You can buy a top-tier audit, but if your development process doesn’t integrate security thinking, you’re just checking a box on a fundamentally risky asset.

Current State of Global Application Security Maturity

| Maturity Metric | Industry Average | Impact on Risk |
| --- | --- | --- |
| Organizations at Level 1 | 43% | High exposure to known exploits |
| Overall Maturity Score | 2.2 / 10 | Significant systemic weakness |
| Vulnerability Prioritization | ASPM Adoption | Can reduce alerts by 75% |

The consequences are measurable. Research indicates that 82% of security breaches are linked to human skills gaps. Developers aren’t trained to think like attackers. They write code to fulfill functions, not to withstand manipulation.

When an automated scanner floods a team with thousands of alerts, most of them low-priority or false positives, “vulnerability fatigue” sets in. Critical issues get lost in the noise. Proper vulnerability prioritization can reduce these alerts by 75%, but that requires a maturity level most organizations haven’t reached.
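To make the idea of prioritization concrete, here is a toy triage filter in Python. It is a sketch under stated assumptions, not the logic of any ASPM product: the `Alert` fields, the 1 to 10 severity scale, and the rule "keep only reachable findings at or above a threshold, exposed ones first" are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    rule: str
    severity: int          # hypothetical scale: 1 (low) to 10 (critical)
    reachable: bool        # can the flagged code be reached from an entry point?
    internet_facing: bool  # does the affected service accept external traffic?


def prioritize(alerts, min_severity=7):
    """Keep reachable alerts at or above min_severity.

    Internet-facing findings sort first, then higher severity first.
    """
    kept = [a for a in alerts if a.reachable and a.severity >= min_severity]
    return sorted(kept, key=lambda a: (not a.internet_facing, -a.severity))


if __name__ == "__main__":
    scanner_output = [
        Alert("sqli", 9, reachable=True, internet_facing=True),
        Alert("weak-hash", 4, reachable=True, internet_facing=False),
        Alert("xss", 8, reachable=False, internet_facing=True),
        Alert("ssrf", 7, reachable=True, internet_facing=False),
    ]
    print([a.rule for a in prioritize(scanner_output)])  # ['sqli', 'ssrf']
```

The filter itself is trivial. The hard part is knowing whether a finding is reachable and exposed, which depends on the team understanding its own system.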

The cycle is vicious: low maturity leads to skills gaps, which lead to breach-prone code, which leads to overwhelming alerts, which further demoralizes and distracts the team, keeping maturity low. Breaking this cycle requires changing how we build capability, not just how we buy tools.

Where Passive Learning Fails and Hands-On Training Wins

We know the problem: a lack of deep understanding creates logical flaws tools can’t see. We know the cause: widespread skills gaps and low security maturity. The solution, then, must address the human element directly.

According to research from INE, the way we typically try to upskill developers, through lectures, videos, and compliance-mandated courses, results in very low knowledge retention, with effectiveness rates of only 5% to 20%.

Compare that to hands-on, practice-based learning. The same research shows this approach achieves up to 75% knowledge retention. That’s a 3.75x to 15x improvement. This isn’t about memorizing OWASP Top 10 lists; it’s about building “muscle memory” for secure coding.

It’s writing code under guidance, then attacking it yourself in a controlled lab. It’s seeing how a small logic error in a donateToReserves() function can be manipulated to drain reserves, and learning how to debug AI-generated code effectively when those flaws are introduced by automation rather than human intent.

This also reinforces why AI-assisted development still needs constant human oversight, especially when developers are building intuition through real-world attack scenarios.
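The donateToReserves() scenario can be sketched as a lab exercise. The Python below is a deliberately simplified toy model, not the code of any real protocol: the `Account` class, its fields, and the 1.5 collateral ratio are assumptions made for illustration. It shows a function in which every line is valid and the only thing missing is a business rule.

```python
class InsolventPosition(Exception):
    """Raised when an action would leave an account undercollateralized."""


class Account:
    MIN_RATIO = 1.5  # hypothetical required collateral-to-debt ratio

    def __init__(self, collateral: int, debt: int):
        self.collateral = collateral
        self.debt = debt

    def is_healthy(self) -> bool:
        """Business invariant: collateral must cover debt by MIN_RATIO."""
        return self.debt == 0 or self.collateral / self.debt >= self.MIN_RATIO

    def donate_to_reserves_unchecked(self, amount: int) -> None:
        # Passes a syntax-level review: bounds are checked, arithmetic is correct.
        if amount > self.collateral:
            raise ValueError("insufficient collateral")
        self.collateral -= amount
        # Missing: nothing verifies that the account is still healthy.

    def donate_to_reserves_checked(self, amount: int) -> None:
        if amount > self.collateral:
            raise ValueError("insufficient collateral")
        self.collateral -= amount
        if not self.is_healthy():
            self.collateral += amount  # roll back before rejecting
            raise InsolventPosition("donation would break the collateral invariant")


if __name__ == "__main__":
    victim = Account(collateral=300, debt=200)
    victim.donate_to_reserves_unchecked(100)
    print(victim.is_healthy())  # False: the position is now insolvent

    guarded = Account(collateral=300, debt=200)
    try:
        guarded.donate_to_reserves_checked(100)
    except InsolventPosition as exc:
        print("rejected:", exc)
```

In a hands-on lab, a developer would first write the unchecked version, then attack it, then add the invariant. The difference between the two functions is a rule about how the business works, which is why a pattern-matching tool has little to flag.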

This is where we see the path forward.

- Move beyond checklists. Use frameworks like OWASP as a baseline for peer review, not as a substitute for critical thinking.

- Integrate threat modeling actively. Before a line of code is written, ask: “How could someone abuse this feature to make money?”

- Focus on high-retention training. Invest in bootcamp-style, adversarial training that forces developers to think from both sides of the keyboard.

- Bridge the maturity gap. Use hands-on learning, with its retention rates of up to 75%, to directly attack the 43% Level-1 maturity problem plaguing the industry.

The audit illusion isn’t a conspiracy. It’s a natural consequence of relying on tools to do human work. Audits and automated scans are vital components of a security strategy, but they are components. They are the grammar check.

The plot (the logic, the business intent) requires a human mind trained to see it. The data shows that when we develop that mind through practice, we retain the knowledge. When we don’t, we lose billions.

FAQ

Why do exploit losses still happen after security audits and code reviews?

Even with strong security audits and code reviews, exploit losses happen due to incomplete audit coverage and hidden business logic flaws. Many software vulnerabilities appear only during real usage.

Issues like zero-day vulnerabilities, third-party integrations, and audit fatigue reduce effectiveness. This creates the audit illusion, where audited protocols still face post-audit breaches and unexpected security breaches.

What types of vulnerabilities are commonly missed during code-level review?

Code-level review often misses complex issues like reentrancy vulnerabilities, integer overflows, buffer overflows, and access control bypasses. It may also overlook economic attacks, oracle manipulation, and incentive misalignment.

Static analysis and SAST tools help, but they can produce false positives. Without dynamic analysis, mutation testing, and fuzz testing, deeper protocol exploitation risks remain undetected.

How do DeFi exploits bypass audited protocols so easily?

DeFi exploits often bypass audited protocols through flash loan attacks, admin privilege abuse, and unsafe upgradability. Attackers exploit cross-chain bridges, proxy contracts, and delegatecall risks.

Many smart contract hacks stem from storage collision or initialization flaws. Even audited systems can fail due to governance attacks, MEV exploits, and complex interactions across distributed software environments.

What security practices go beyond traditional web3 audits?

Beyond web3 audits, teams should adopt layered security with defense in depth and zero trust architecture. Practices like penetration testing, red teaming, threat modeling, and runtime monitoring improve security assurance.

Using formal verification, invariant checks, and symbolic execution strengthens coverage. Secure SDLC and DevSecOps also help reduce risks from application security gaps.

How can teams reduce financial losses from future security breaches?

To reduce financial losses and avoid billion-dollar hacks, teams need strong incident response and continuous risk assessment. Implement least privilege, monitor for abnormal behavior, and secure third-party integrations.

Bug bounty programs, responsible disclosure, and security researcher bounties help catch issues early. Combining compliance auditing with real-time monitoring limits damage from future breaches.

Seeing Beyond the Syntax

The next breach won’t come from a simple coding mistake, but from a deeper misunderstanding of how systems behave in the real world. Secure coding is not just about syntax; it’s about thinking in terms of risk, intent, and how features can be abused. The data shows most damage happens where audits don’t look, which means developers need a stronger, practical security mindset.

If you want to build that mindset, the Secure Coding Practices Bootcamp focuses on hands-on training with real scenarios, not theory. It helps developers understand the OWASP Top 10 risks, authentication flaws, and unsafe dependencies through guided labs, so they can confidently write secure code from day one.

References

- https://arxiv.org/html/2601.01129v2

- https://softprom.com/application-security-strategy-2026-how-ai-devsecops-and-platform-consolidation-are-changing-the-game

- https://ine.com/newsroom/enterprises-tackle-skills-gaps-with-hands-on-training