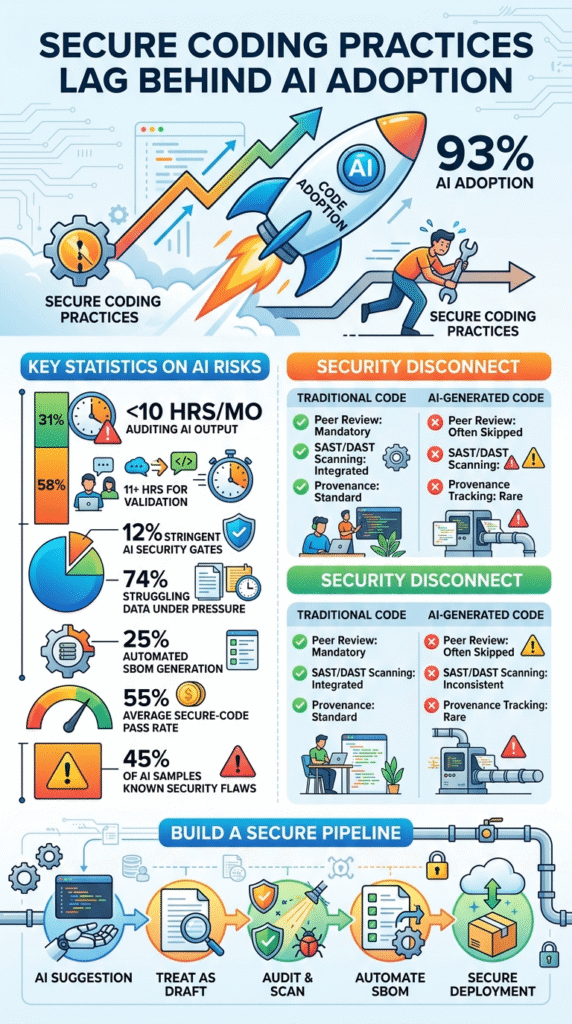

The data is clear. AI code adoption is outpacing secure coding practices. While 93% of organizations use AI-generated code, the security rigor applied to its output remains dangerously low.

This creates a new, automated pipeline for vulnerabilities. If you write, review, or deploy software, you need to understand the widening gap between development speed and security control.

The following statistics and analysis explain why, and what you must do now.

Key Statistics on AI Code Security Risks

The rapid integration of generative AI into software development has created a significant oversight gap. Velocity has increased, but the rigor applied to securing machine-generated artifacts hasn’t kept pace with traditional coding standards.

- 93% – Nearly all organizations have integrated AI-generated code into production, as reported by Cloudsmith in 2026.

- 31% – Nearly a third of developers spend 10 hours or less per month auditing AI-produced outputs, according to the same Cloudsmith report.

- 58% – Over half of developers now dedicate at least 11 hours per month to validation and security checks to mitigate AI risks, Cloudsmith data shows.

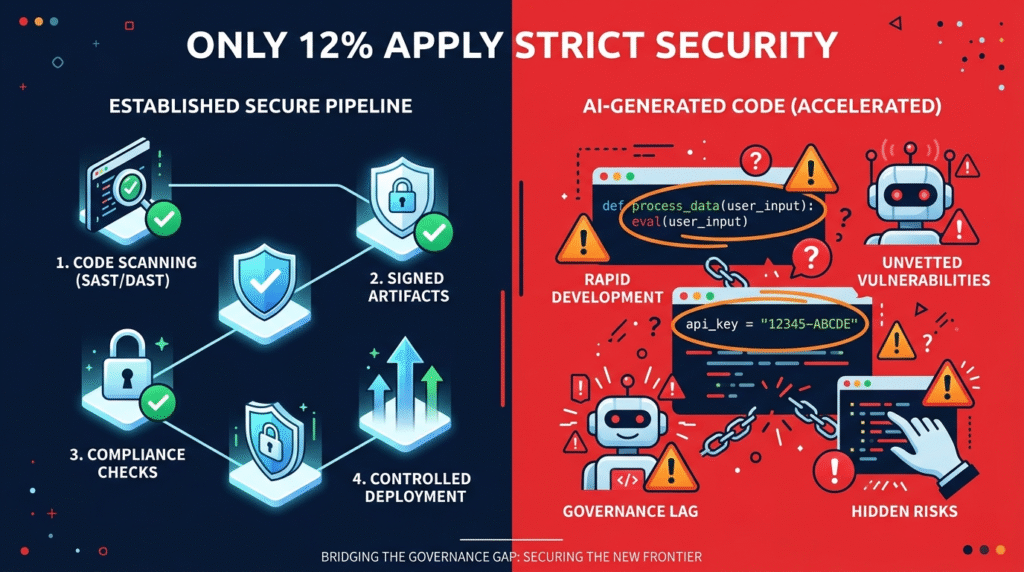

- 12% – Only a small fraction of organizations apply the same stringent security practices to AI artifacts as they do to traditional code.

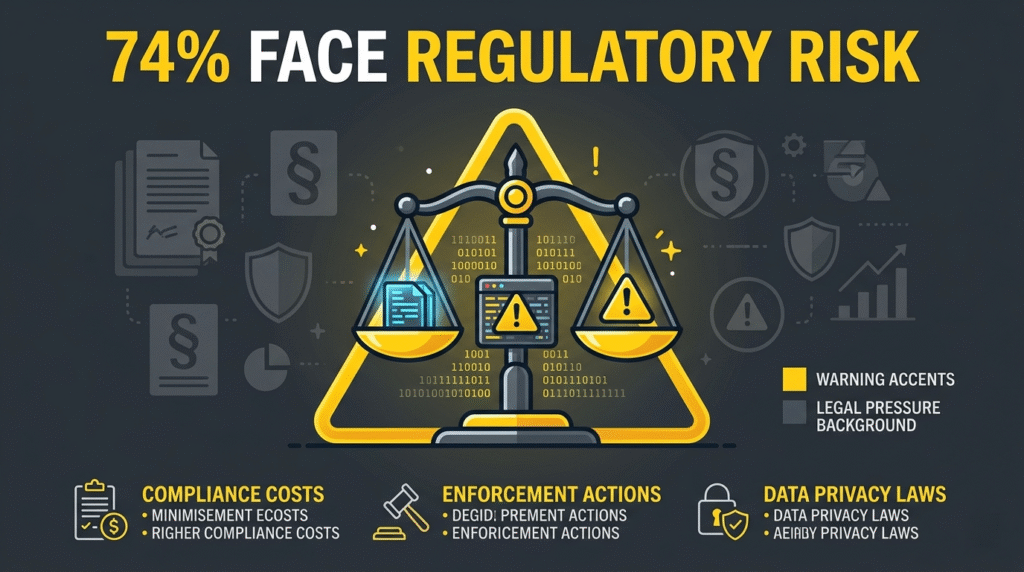

- 74% – A majority of organizations would struggle to provide provenance data quickly under regulatory pressure.

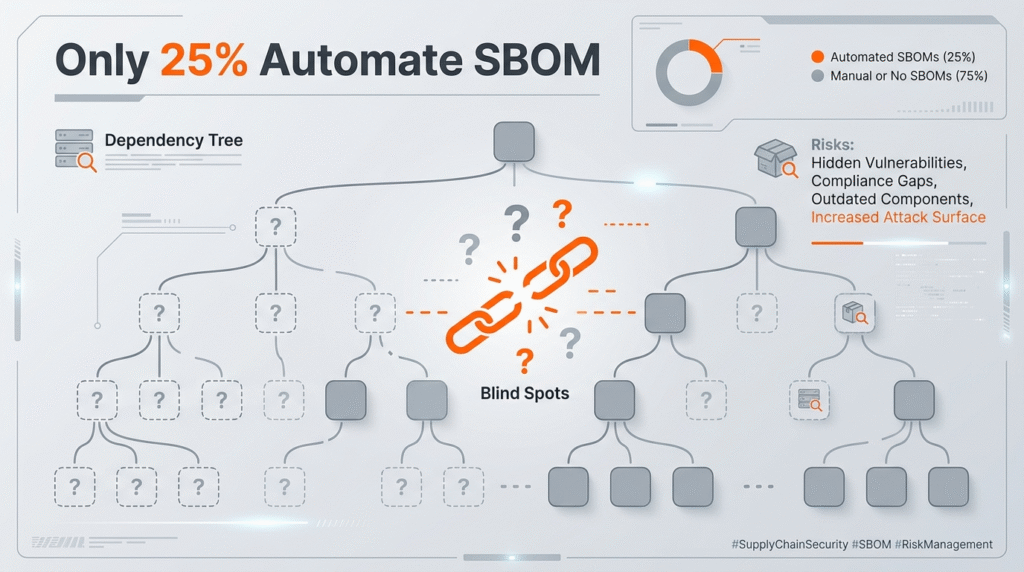

- 25% – Only a quarter of organizations have automated SBOM generation, leaving a massive visibility gap in AI-driven supply chains.

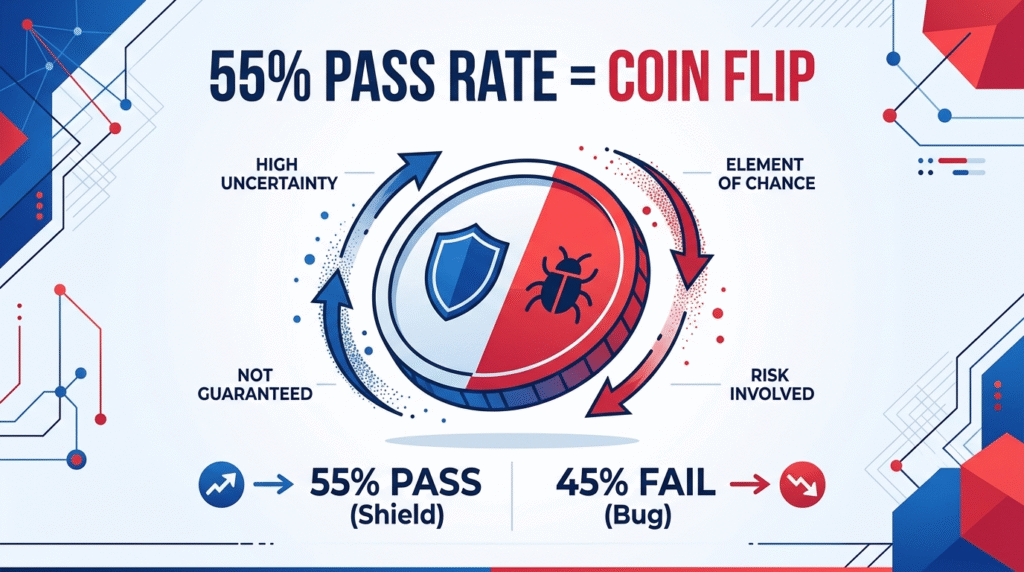

- 55% – The Veracode Spring 2026 GenAI Code Security Update found the average secure-code pass rate for AI models is barely above a coin flip.

- 45% – Veracode testing revealed nearly half of AI-generated code samples introduced at least one known security flaw.

- 96% – Nearly all developers do not fully trust AI-generated code to be functionally correct, per the Sonar 2026 survey.

- 48% – Despite high distrust, fewer than half of developers always check AI-assisted code before committing it.

Why 93% of Organizations Now Use AI-Generated Code

The adoption is no longer optional for competitive firms. It’s driven by a simple, relentless pressure: velocity. The demand for rapid feature deployment and the wish to shed tedious boilerplate both point in that direction. According to the Cloudsmith 2026 Artifact Management Report (reported by ITPro, Apr. 10, 2026), 93% of organizations now use AI-generated code. A developer can describe a function and have a working snippet in seconds. The business sees productivity soar and deadlines met.

What the business often misses, though, is the quiet substitution. The handcrafted logic, the considered architecture, and the security-minded patterns a senior dev might weave in are being replaced by a statistical model’s best guess. It’s not malice; it’s efficiency, but efficiency with a hidden cost.

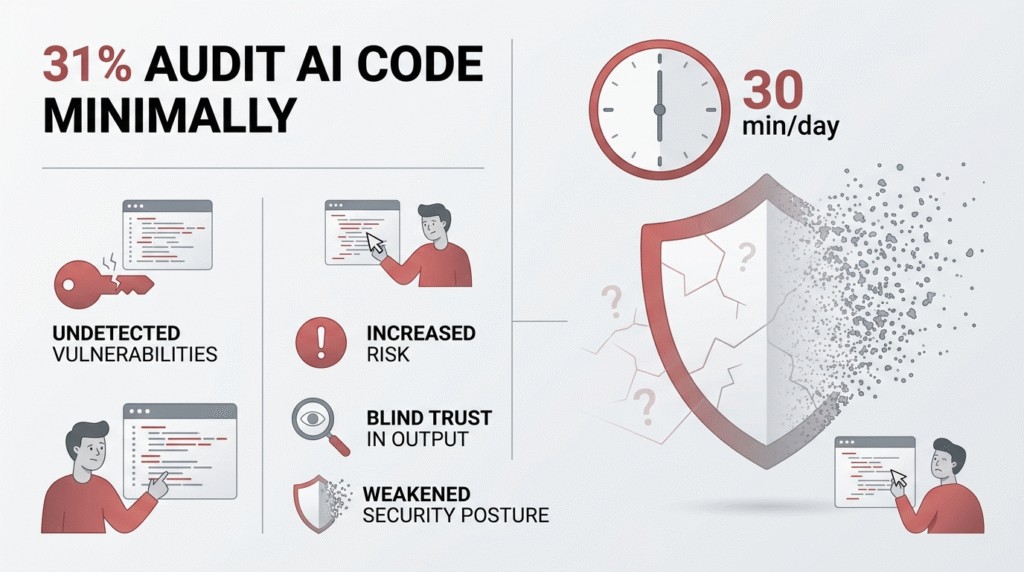

The Risk of 31% of Developers Spending Minimal Time Auditing AI Code

Ten hours a month. That’s about thirty minutes per working day. For nearly a third of developers, that’s the total time spent scrutinizing code that an AI produced. According to the Cloudsmith 2026 Artifact Management Report (Apr. 10, 2026), 31% of developers spend 10 hours or less per month auditing AI-generated code. This creates a blind-trust scenario in which speed is prioritized over structural integrity.

AI output is often treated as a finished product rather than a draft. The assumption is that if it runs, it’s safe. This isn’t necessarily laziness; it’s likely fatigue. The AI promises relief from the grind, and accepting its output without deep inspection feels like part of that reward. The risk is that subtly embedded exploits and business-logic flaws slip through unnoticed.

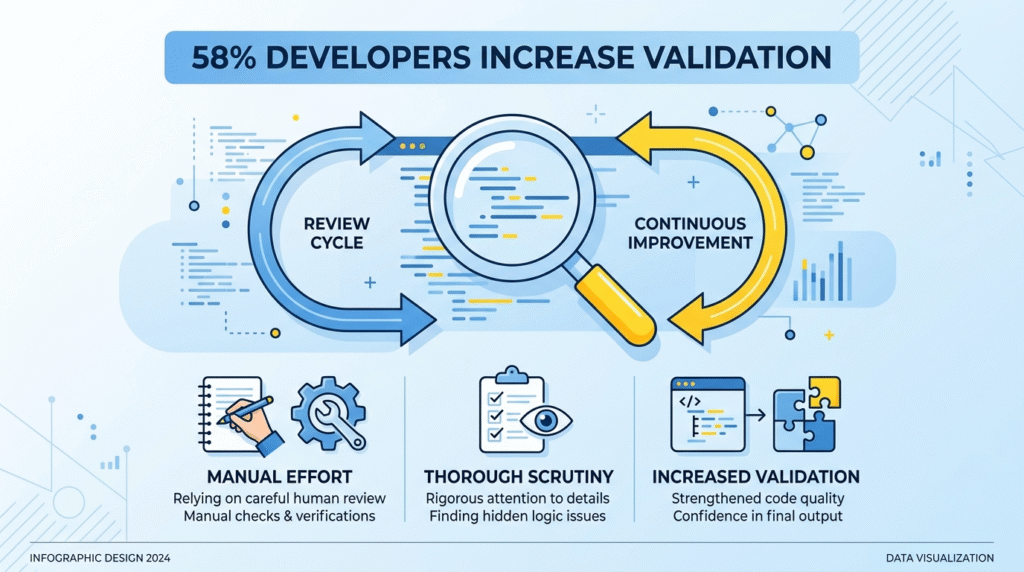

How 58% of Developers Are Pivoting to Deep Validation

The majority of the developer community is reacting to these risks by increasing manual scrutiny. According to the Cloudsmith 2026 Artifact Management Report (Apr. 10, 2026), 58% of developers now dedicate at least 11 hours per month to validation and security. This shift tells you something important: AI is not a “set and forget” solution. It requires a heavy human-in-the-loop tax.

These developers are manually tracing data flows in AI-generated code, checking identity boundaries, and probing for authorization weaknesses. In effect, they have become auditors. This pivot is a survival tactic, but it’s also a bottleneck: it turns the developer back into a reviewer and slows the velocity the AI was meant to create.
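To make “authorization weakness” concrete, here is a minimal, hypothetical sketch of the kind of flaw these reviewers trace for. The first handler is written the way an assistant might plausibly produce it. It looks up a record by ID without checking who is asking, which is an insecure direct object reference. The second handler enforces the ownership boundary. The `Invoice` type, the in-memory store, and both function names are illustrative assumptions and do not come from any real codebase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    amount_cents: int


# Hypothetical in-memory store standing in for a database table.
INVOICES = {
    1: Invoice(invoice_id=1, owner_id=100, amount_cents=4_999),
    2: Invoice(invoice_id=2, owner_id=200, amount_cents=12_000),
}


class AccessDenied(Exception):
    """Raised when a user requests a record they do not own."""


def get_invoice_unsafe(current_user_id: int, invoice_id: int) -> Invoice:
    # Functionally "correct": returns the invoice that was asked for.
    # Insecure: any authenticated user can read any invoice by guessing IDs.
    return INVOICES[invoice_id]


def get_invoice(current_user_id: int, invoice_id: int) -> Invoice:
    invoice = INVOICES.get(invoice_id)
    # Enforce the identity boundary: the caller must own the record.
    # Missing and foreign records raise the same error, so the response
    # does not reveal which invoice IDs exist.
    if invoice is None or invoice.owner_id != current_user_id:
        raise AccessDenied(f"user {current_user_id} may not read invoice {invoice_id}")
    return invoice
```

Both versions pass a happy-path test in which user 100 reads invoice 1. Only a test where user 100 requests invoice 2 exposes the difference. That is why this class of flaw survives a quick “it runs” check.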

Why Only 12% of Firms Apply Stringent Security to AI Artifacts

There’s a massive disconnect in governance. Human code is treated as suspect and goes through gates, but AI code is often greeted as a gift. According to the Cloudsmith 2026 Artifact Management Report (Apr. 10, 2026), only 12% of firms apply the same stringent security practices to AI artifacts as they do to traditional software artifacts. Most firms lack a policy that treats AI-generated code with even the rigor they would apply to a third-party library from a stranger on the internet.

The table below shows the typical disparity.

| Security Practice | Traditional Code | AI-Generated Code |
| --- | --- | --- |
| Peer Review | Mandatory | Often Skipped |
| SAST/DAST Scanning | Integrated in CI/CD | Inconsistent |
| Provenance Tracking | Standard | Rare |

The likely reason is a lack of architectural awareness. AI code feels native because it comes from your team’s prompt, but its origin is a vast, opaque training dataset. Without provenance tracking, you don’t know what you’ve imported.
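Here is a minimal sketch of what provenance tracking could mean for a single AI-generated snippet. It records a content hash of the accepted code, the assistant and model that produced it, a hash of the prompt, who accepted it, and when. A snippet found later can then be matched back to its origin. The `ProvenanceRecord` type and all of its field names are hypothetical and do not follow any standard schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


def sha256_hex(text: str) -> str:
    """Return the SHA-256 digest of UTF-8 text as a hex string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ProvenanceRecord:
    # Hypothetical schema: every field name here is illustrative.
    code_sha256: str    # identifies the exact snippet that was accepted
    prompt_sha256: str  # hash rather than raw prompt, in case it holds secrets
    tool: str           # assistant that produced the code
    model: str          # model identifier reported by the tool
    accepted_by: str    # human who accepted the suggestion
    accepted_at: str    # ISO 8601 timestamp, UTC


def record_provenance(code: str, prompt: str, tool: str, model: str,
                      accepted_by: str) -> ProvenanceRecord:
    return ProvenanceRecord(
        code_sha256=sha256_hex(code),
        prompt_sha256=sha256_hex(prompt),
        tool=tool,
        model=model,
        accepted_by=accepted_by,
        accepted_at=datetime.now(timezone.utc).isoformat(),
    )


def matches(record: ProvenanceRecord, code: str) -> bool:
    """Check whether a snippet found later is byte-for-byte the recorded one."""
    return record.code_sha256 == sha256_hex(code)


if __name__ == "__main__":
    snippet = "def add(a, b):\n    return a + b\n"
    rec = record_provenance(snippet, "write an add function",
                            tool="example-assistant", model="example-model-v1",
                            accepted_by="alice")
    print(json.dumps(asdict(rec), indent=2))
```

The record stores only hashes, so a match proves the snippet is unchanged since acceptance. Any edit, even to whitespace, produces a different digest. Records would therefore need refreshing whenever a human modifies the code.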

The Regulatory Threat Facing 74% of Organizations

Under guidance such as CISA’s Secure by Design initiative, provenance is no longer optional; it is becoming a liability issue. According to the Cloudsmith 2026 Artifact Management Report (Apr. 10, 2026), 74% of organizations would struggle to provide provenance data quickly under regulatory pressure. This statistic is a warning flare for software supply chains.

- Regulatory pressure is mounting around software supply chains.

- AI-generated code is a black box in that chain.

- Organizations are shipping code without knowing its lineage or the training-data risks involved.

This isn’t just about security; it’s about compliance. A breach traced to an AI-generated component could carry heavier penalties if you can’t explain how it got there.

Why 25% Automation in SBOM Generation is a Security Failure

A Software Bill of Materials (SBOM) is the foundation of supply chain security. For AI code, it’s critical because these tools routinely suggest external dependencies and packages. However, according to the Cloudsmith 2026 Artifact Management Report (Apr. 10, 2026), only 25% of organizations are automating SBOM generation.

Without automation, identifying vulnerabilities in AI-suggested dependencies becomes a slow, error-prone manual hunt. In a system where code is generated at high speed, your security process must match that pace. A 25% adoption rate means most teams are flying blind, unaware of what their AI has pulled into the project.
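Automating SBOM generation does not have to start with heavy tooling. The sketch below uses only the Python standard library to emit a minimal CycloneDX-style JSON document for the Python distributions installed in the current environment. The output has the top-level `bomFormat`, `specVersion`, and `version` fields and a `components` list with package URLs. It illustrates the idea but is not a complete or validated CycloneDX SBOM: dedicated generators also record hashes, licenses, and dependency relationships. The function names are hypothetical.

```python
import json
from importlib.metadata import distributions


def pypi_purl(name: str, version: str) -> str:
    # Package URL for PyPI: lowercase name, underscores normalized to dashes.
    return f"pkg:pypi/{name.lower().replace('_', '-')}@{version}"


def build_sbom(packages: list[tuple[str, str]]) -> dict:
    """Build a minimal CycloneDX-style SBOM from (name, version) pairs."""
    components = [
        {
            "type": "library",
            "name": name,
            "version": version,
            "purl": pypi_purl(name, version),
        }
        # Deduplicate and sort for stable, diff-friendly output across runs.
        for name, version in sorted(set(packages), key=lambda p: p[0].lower())
    ]
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "components": components,
    }


def installed_packages() -> list[tuple[str, str]]:
    """Collect (name, version) for every distribution visible to this interpreter."""
    found = []
    for dist in distributions():
        name = dist.metadata.get("Name")
        if name:  # skip broken installs that lack Name metadata
            found.append((name, dist.version))
    return found


if __name__ == "__main__":
    print(json.dumps(build_sbom(installed_packages()), indent=2))
```

One option is to run this in CI on every build and diff the result against the previous SBOM. That can surface packages an assistant pulled in without anyone noticing.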

The 55% Pass Rate: Why AI Security is a Coin Flip

Recent testing provides a cold dose of reality regarding the safety of these models. According to the Veracode Spring 2026 GenAI Code Security Update (Mar. 24, 2026), the all-model secure-code pass rate was only 55%. This means nearly half of all suggestions could introduce a vulnerability. It is effectively a coin flip.

- The models are trained on correctness, not security.

- They replicate patterns from their training data, including insecure ones.

- A pass rate this low makes integrated security scanning non-optional.

You cannot rely on the AI to be secure; you must rely on your own systems to catch what it misses. This is the core argument for shifting security left with more force than ever.
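The 55% pass rate matters even more at the level of a whole feature. Consider a deliberately simplified model: each AI-generated snippet independently passes a security check with probability 0.55, the Veracode all-model figure. The chance that all n snippets are clean is then 0.55 raised to the power n. Real samples are not independent, so treat this as an illustration of compounding rather than a prediction.

```python
def all_pass_probability(pass_rate: float, n_snippets: int) -> float:
    """Probability that all n independent snippets pass, given a per-snippet pass rate."""
    if not 0.0 <= pass_rate <= 1.0:
        raise ValueError("pass_rate must be between 0 and 1")
    if n_snippets < 0:
        raise ValueError("n_snippets must be non-negative")
    # Independence assumption: per-snippet probabilities simply multiply.
    return pass_rate ** n_snippets


if __name__ == "__main__":
    for n in (1, 2, 5, 10):
        print(f"{n:>2} snippets: {all_pass_probability(0.55, n):.1%} chance all are clean")
```

Under this assumption, five snippets leave roughly a 5% chance (0.55^5 ≈ 0.050) that none carries a flaw. Ten snippets push it well below 1%.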

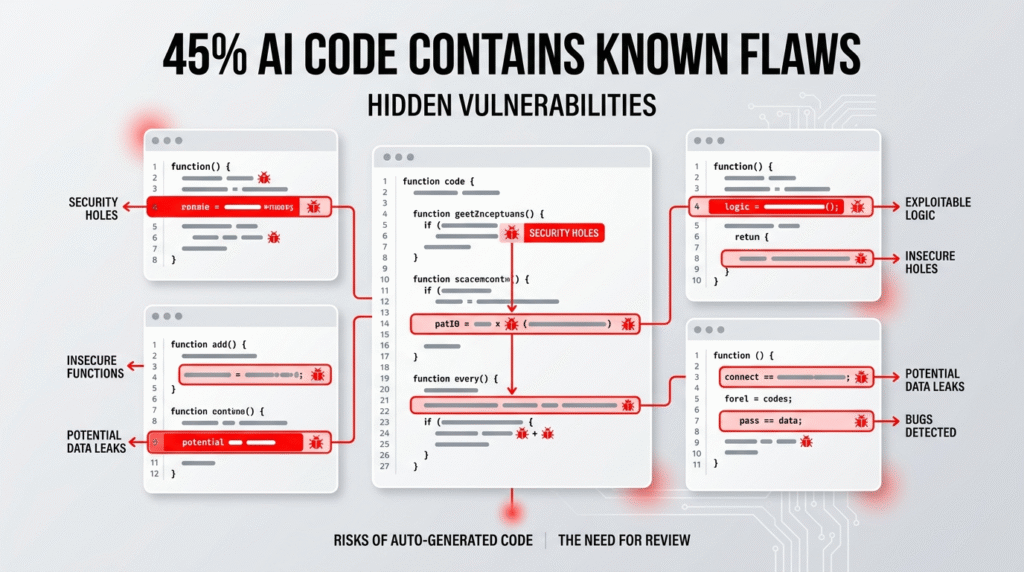

Addressing the 45% of AI Samples Containing Known Flaws

AI models often suggest deprecated patterns or insecure functions. According to the Veracode Spring 2026 GenAI Code Security Update (Mar. 24, 2026), 45% of AI-generated code samples introduced a known security flaw. These aren’t abstract issues; they include things like improper input validation or weak cryptographic patterns.
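Here is one example of a weak cryptographic pattern. The first function below stores a password as a single unsalted MD5 digest, a pattern common in older public code. The second uses a random per-password salt with PBKDF2-HMAC-SHA256 from Python’s standard `hashlib`, and it verifies with a constant-time comparison. The function names and the iteration count are illustrative assumptions. Check current password-storage guidance before choosing real parameters.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # illustrative value; revisit against current guidance


def hash_password_weak(password: str) -> str:
    # Anti-pattern: MD5 is fast and unsalted, so identical passwords share
    # a hash and offline guessing is cheap. Shown only for contrast.
    return hashlib.md5(password.encode("utf-8")).hexdigest()


def hash_password(password: str, iterations: int = ITERATIONS) -> tuple[bytes, bytes]:
    """Return (salt, derived_key) using PBKDF2-HMAC-SHA256 with a random salt."""
    salt = secrets.token_bytes(16)
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, key


def verify_password(password: str, salt: bytes, expected_key: bytes,
                    iterations: int = ITERATIONS) -> bool:
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(key, expected_key)
```

In a real system, the salt and iteration count are stored alongside the derived key so that verification can recompute it. For a reviewer, the point is that both versions “work”, but only one is acceptable.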

The models do not understand the specific context of your application. They generate code that works in isolation but breaks down once it meets your application’s real inputs and trust boundaries. This highlights the need to integrate scanners directly into the prompt-to-code workflow so that flaws are flagged before they ever reach production.
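Real SAST tools do far more, but the core idea of flagging risky patterns before code lands fits in a few lines. This toy checker parses Python source with the standard `ast` module. It reports calls to `eval`/`exec`, MD5/SHA-1 hashing, and `subprocess` calls with `shell=True`. The rule list is a hypothetical example. The checker misses aliased imports and many other flaws, and it is not a substitute for a maintained scanner.

```python
import ast
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    line: int
    message: str


# Hypothetical rule set: dotted call names mapped to a short explanation.
RISKY_CALLS = {
    "eval": "eval() executes arbitrary code",
    "exec": "exec() executes arbitrary code",
    "hashlib.md5": "MD5 is not suitable for security purposes",
    "hashlib.sha1": "SHA-1 is not suitable for security purposes",
}
SUBPROCESS_FUNCS = {"subprocess.run", "subprocess.call", "subprocess.Popen",
                    "subprocess.check_call", "subprocess.check_output"}


def dotted_name(node: ast.expr) -> str:
    """Turn `a.b.c` call targets into 'a.b.c'; return '' for anything else."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        base = dotted_name(node.value)
        return f"{base}.{node.attr}" if base else ""
    return ""


def scan(source: str) -> list[Finding]:
    """Parse source (without running it) and return findings sorted by line."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = dotted_name(node.func)
        if name in RISKY_CALLS:
            findings.append(Finding(node.lineno, RISKY_CALLS[name]))
        elif name in SUBPROCESS_FUNCS:
            # Only a literal shell=True is detected; computed values are missed.
            for kw in node.keywords:
                if (kw.arg == "shell" and isinstance(kw.value, ast.Constant)
                        and kw.value.value is True):
                    findings.append(Finding(node.lineno, f"{name} with shell=True"))
    return sorted(findings, key=lambda f: f.line)


if __name__ == "__main__":
    sample = (
        "import hashlib, subprocess\n"
        "digest = hashlib.md5(data).hexdigest()\n"
        "subprocess.run(cmd, shell=True)\n"
    )
    for f in scan(sample):
        print(f"line {f.line}: {f.message}")
```

A crude check like this can run in a pre-commit hook or in the CI step that receives AI-assisted changes. It fails fast on the most obvious patterns and leaves reviewers free to focus on logic flaws that no pattern matcher will catch.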

The Paradox of 96% of Developers Distrusting AI Code

There’s a profound psychological gap in the modern workplace. While the pressure to deliver drives adoption, the people using the tools remain skeptical. According to the Sonar 2026 State of Code Developer Survey (2026 report), 96% of developers do not fully trust AI-generated code to be functionally correct.

- The distrust is professional, instinctual.

- The adoption is managerial, economic.

This creates internal conflict for the developer. They know they should check, but the sprint deadline looms and the tool says it’s done. This trust gap, identified by Sonar, is where vulnerabilities seep in: the developer’s better judgment is silenced by the workflow’s demand for speed.

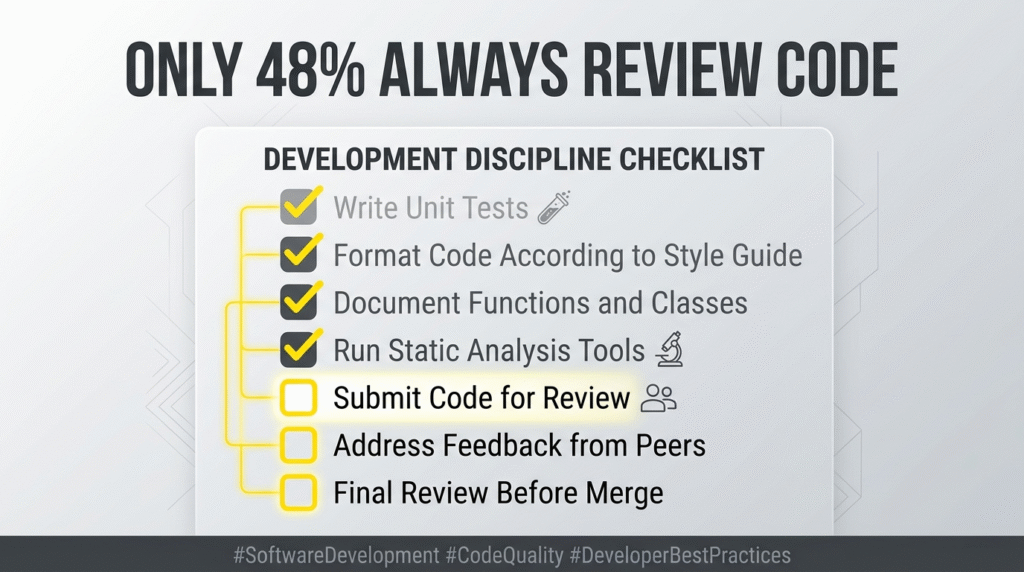

Closing the Gap: Why Only 48% of Developers Always Check Code

The final hurdle is the verification stage. According to the Sonar 2026 State of Code Developer Survey (2026 report), only 48% of developers always check AI-assisted code before committing it; the remaining 52% at least sometimes commit it unchecked. That gap is a major entry point for modern vulnerabilities.

This behavior is exactly what’s driving the need for mandatory secure coding practices. Training can’t just be about writing secure code anymore; it must also cover auditing AI-generated code. Organizations must reinforce the discipline of review so issues are caught before they merge. Without it, flawed code is far more likely to reach production and cause downstream harm.

What You Can Do About AI Code Adoption

The data paints a clear picture of a widening gap between creation and security. The solution isn’t to abandon AI coding tools; they’re here to stay.

The solution is to evolve your practices to meet their output. Start by treating every AI suggestion as a draft, not a final product. Mandate that it passes through the same security gates as human code: peer review, SAST/SCA scanning, provenance tracking. Integrate security scanners directly into your AI assistant’s workflow, so flaws are caught in real time.
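The “same gates as human code” rule can be written as a policy that never branches on where the code came from. In this hypothetical sketch, a change can merge only if it has a human approval, no unresolved SAST or SCA findings, and a provenance record. The `Change` fields are assumptions standing in for whatever your review and CI systems actually report. The `ai_assisted` flag is recorded for visibility but deliberately does not relax any check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    # Hypothetical fields; map these to your own review and CI data.
    approvals: int
    sast_findings: int
    sca_findings: int
    has_provenance: bool
    ai_assisted: bool


def gate_failures(change: Change) -> list[str]:
    """Return the reasons a change may not merge; an empty list means it passes."""
    failures = []
    if change.approvals < 1:
        failures.append("needs at least one human review approval")
    if change.sast_findings > 0:
        failures.append(f"{change.sast_findings} unresolved SAST finding(s)")
    if change.sca_findings > 0:
        failures.append(f"{change.sca_findings} unresolved SCA finding(s)")
    if not change.has_provenance:
        failures.append("missing provenance record")
    # change.ai_assisted is never consulted: AI-generated and human-written
    # changes face exactly the same gates.
    return failures
```

Keeping the origin flag out of the decision is the point. A policy that exempts AI output, even implicitly through a faster lane, recreates the gap behind the 12% figure.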

Most importantly, invest in training that focuses on the new failure modes. Train your team to spot the authorization weaknesses, identity-boundary risks, and subtle logic flaws that AI models miss. The goal is a guardrail, not a wall: keep the velocity, strip out the risk. Your next move is to assess your own pipeline. Look at your review rates, your scan coverage, and your team’s comfort with auditing AI code. Then build a plan to close your gap.