Machines that write code are creating serious new problems. They can take code from other people, bake in security weaknesses, or copy human biases. When the AI’s code fails, who takes the blame? And what happens to all the programmers?

Tools like GitHub Copilot are being used by many teams, who say they work over 50% faster now. But this new speed has a cost. It risks everyone’s data privacy and makes software less safe.

We need better guardrails. Who is responsible for the code a machine writes?

Find out more below.

Ethical Implications of AI Code Generation: Key Points to Remember

A quick summary before we go on.

- AI that writes code is fast, but it can steal work, be unfair, and make it unclear who’s responsible.

- The code it makes often has security holes. You must check it carefully.

- New laws and company rules are being created to manage these risks while still letting people innovate.

What Are the Ethical Implications of AI Code Generation?

AI code tools are fast, but they create real problems: copying work, security flaws, unfairness, and murky responsibility. They’re also changing what it means to be a programmer.

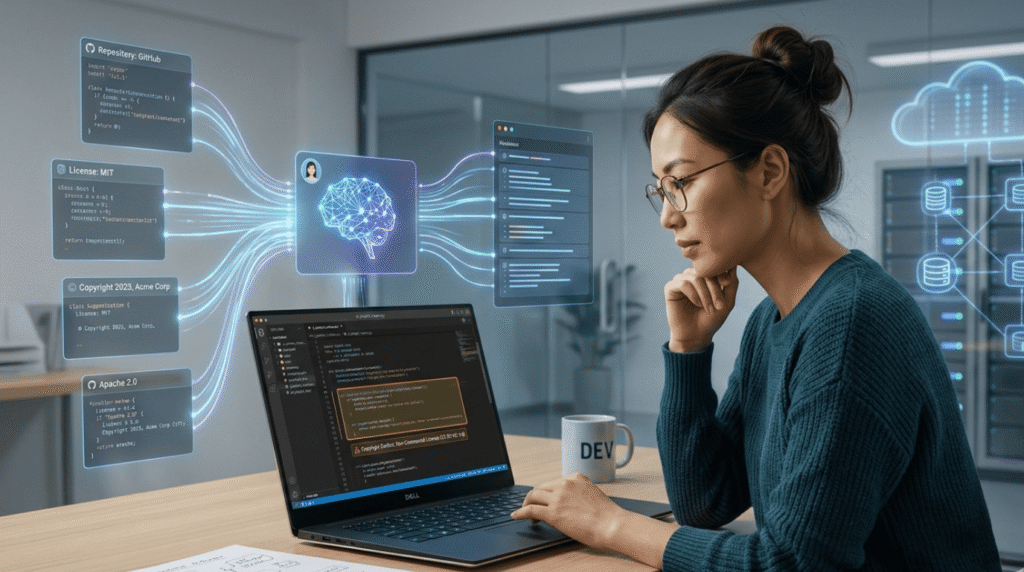

Generative AI tools, built on machine learning, can now create working software in seconds. They learn from billions of lines of public code. As developers rely on machines for larger portions of everyday programming work, these tools are shaping the future of AI-assisted development.

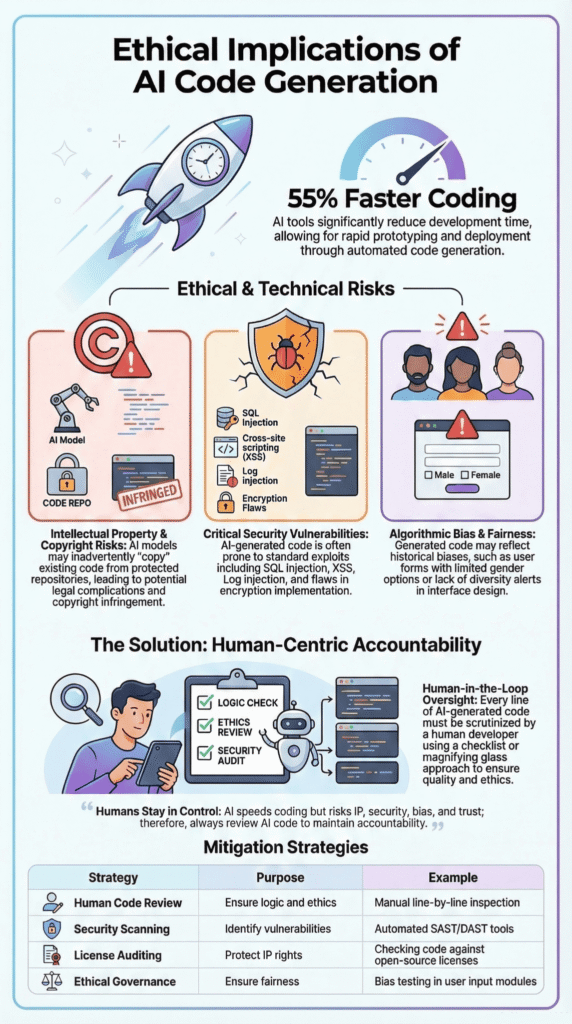

Many companies are adding these tools to how they build software. A report in The New York Times says developers using AI assistants can finish routine jobs about 55% faster.

But in our bootcamp, we’ve seen a problem. When code comes out too fast, developers might not think enough about whether it’s correct, secure, or easy to fix later.

A few key ethical issues keep coming up:

- It can break copyright laws.

- The code it makes can have security weaknesses.

- It can copy biases from its training data.

- It could change the skills programmers need.

This forces companies to create better rules. The goal is to keep human developers in charge.

How Does AI Code Generation Create Intellectual Property Risks?

AI trained on public code can copy licensed work without giving credit. This clashes with open-source rules and makes it unclear who owns what the AI creates.

These AI models learn from huge collections of code, like those on GitHub. That code has many different licenses.

Developers often think the AI’s code is new. But sometimes, it’s just repeating something that’s already copyrighted. Lawsuits against tools like Copilot have drawn attention to the risk of reproducing code released under licenses such as the GPL. Shipping that code inside your own product can legally require you to release the whole project’s source under the same license.

We’ve caught this in our own training sessions. Once, a useful-looking code snippet was almost identical to a protected project. If we hadn’t checked, we could have had a legal problem.

Here are the main risks:

- Copying licensed open-source code.

- Not crediting the original coder.

- Translating licensed code into another language, which hides where it came from.

- Unclear ownership for AI-made content.

The law usually says a human must be the author for copyright. Who owns code written by an AI? Nobody really knows. The law hasn’t caught up yet.

Good teams handle this by reviewing AI code carefully, just like code from another person. In our bootcamp, we teach students to always check AI output for license problems and security before using it.
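A first pass at that license check can be automated. The sketch below is only a rough heuristic, assuming Python and a small, hypothetical list of marker patterns (`LICENSE_MARKERS` and `find_license_markers` are names made up for this example). It flags lines in an AI suggestion that look like license headers or copyright notices so a person can look closer. It will not catch copied code that has had its header stripped.

```python
import re

# Hypothetical and deliberately incomplete: text patterns that hint a
# snippet was lifted from a licensed project.
LICENSE_MARKERS = [
    r"SPDX-License-Identifier:\s*[\w.+-]+",
    r"GNU (Lesser |Affero )?General Public License",
    r"Copyright\s+(\(c\)|©)?\s*\d{4}",
]


def find_license_markers(snippet: str) -> list[str]:
    """Return the lines of a snippet that match any license marker."""
    hits = []
    for line in snippet.splitlines():
        if any(re.search(p, line, re.IGNORECASE) for p in LICENSE_MARKERS):
            hits.append(line.strip())
    return hits


# Example AI suggestion that still carries a header from its source.
suggested = '''\
# SPDX-License-Identifier: GPL-3.0-or-later
# Copyright (c) 2019 Example Author
def add(a, b):
    return a + b
'''

flagged = find_license_markers(suggested)
for line in flagged:
    print("review needed:", line)
```

A clean result here does not mean the code is safe to use. It only means no obvious marker was found, so human review still applies.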

Why Does AI-Generated Code Introduce Security Vulnerabilities?

Credits: Christopher Penn

AI-made code often has security holes. The AI copies bad patterns from old code and doesn’t understand the whole system it’s building. This raises a bigger question: if developers start trusting automated output without deeper review, could all code become AI-generated?

Studies from Veracode and others find high rates of vulnerabilities in AI-generated code (62% in one analysis, for example).

These flaws come from its training on old, buggy code. A lot of that old code was not written securely.

We watch this happen in our labs. The AI spits out code that runs, but it’s missing the safety parts. It might not check what a user types in, or forget a login wall. Hackers love these gaps. They can steal data through them.

The big security holes we see are:

- SQL injection from messy database code.

- Cross-site scripting on web pages.

- Log injection that messes up system logs.

- Bad encryption that doesn’t really hide anything.
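To make the first item concrete, here is a minimal sketch of SQL injection and its usual fix, assuming Python’s built-in `sqlite3` module and a made-up `users` table. The function names are hypothetical. The unsafe version builds the query by pasting user input into the SQL text, which is a pattern AI tools can reproduce. The safer version passes the input as a bound parameter.

```python
import sqlite3

# In-memory database with a made-up table, just for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "a-secret"), ("bob", "b-secret")],
)


def find_user_unsafe(name: str) -> list[tuple]:
    # Vulnerable: user input becomes part of the SQL statement itself.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name: str) -> list[tuple]:
    # Safer: the value is bound as data and never parsed as SQL.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


attack = "nobody' OR '1'='1"
leaked = find_user_unsafe(attack)   # the OR clause matches every row
blocked = find_user_safe(attack)    # no user has that literal name

print(len(leaked), len(blocked))
```

With the attack string, the unsafe function returns both rows and the safe one returns none. The same habit of keeping user input out of the command text applies to the other items in the list.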

Our number one rule? Never just copy and paste. We scan every line the AI writes. We test it hard. Some teams use math to prove it’s safe. Research from Veracode backs this up: checking the code finds most of the problems.

Good, ethical development means treating AI code like an untrusted suggestion, not a final answer.

How Do Bias and Fairness Issues Appear in AI-Generated Code?

AI can write unfair or biased code. It learns from old data, and that data often has old human biases stuck inside it.

Studies posted on arXiv found bias in a lot of AI outputs. Depending on the test, between 13% and 49% of the code the AI wrote showed some kind of bias.

This happens because the AI’s training data is full of code written by people. People have biases, so their code can have biases too, sometimes without them even knowing.

We tested this in a class project. We asked an AI to make code for checking user profiles. The default form it created asked for a “first name” and “last name.” This doesn’t work for everyone. It also only offered “male” or “female” as choices. That left out many of our users.

Bias usually comes from:

- Training data that mostly has code from one part of the world.

- The way the AI is tuned to give “good” answers.

- Too much code from one popular software framework.

To fight bias, we use a few tricks:

- Giving the AI very specific examples of what we want.

- Testing the AI’s code with special datasets designed to find bias.

- Regularly checking the AI model itself for problems.

- Using more diverse data to train the AI.

These steps help, but they don’t fix everything. That’s why human review is still the most important step. Someone needs to look at the code and ask, “Is this fair for everyone?”
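Testing with a bias-focused dataset can be very simple. The sketch below assumes Python and uses a tiny, invented set of profiles. `validate_naive` imitates the kind of form described in our class project, and `validate_inclusive` is one possible alternative, not the only right design. All names and sample data are hypothetical.

```python
from typing import Optional


def validate_naive(first: str, last: str, gender: str) -> bool:
    # Imitates the AI's default form: two name fields, two gender choices.
    return bool(first.strip()) and bool(last.strip()) and gender in {"male", "female"}


def validate_inclusive(full_name: str, gender: Optional[str] = None) -> bool:
    # One free-form name field; gender is optional and self-described.
    return bool(full_name.strip())


def split_name(full_name: str) -> tuple[str, str]:
    # Force a full name into the first/last shape the naive form expects.
    first, _, last = full_name.partition(" ")
    return first, last


# Invented test profiles chosen to stress the form's assumptions.
profiles = [
    {"full_name": "Maria Garcia", "gender": "female"},
    {"full_name": "Aryani", "gender": "female"},        # single name
    {"full_name": "Alex Kim", "gender": "non-binary"},
    {"full_name": "Wang Xiuying", "gender": None},      # prefers not to say
]

naive_failures = [
    p["full_name"]
    for p in profiles
    if not validate_naive(*split_name(p["full_name"]), p["gender"] or "")
]
inclusive_failures = [
    p["full_name"]
    for p in profiles
    if not validate_inclusive(p["full_name"], p["gender"])
]

print("rejected by naive form:", naive_failures)
print("rejected by inclusive form:", inclusive_failures)
```

The naive form rejects three of the four profiles. A dataset like this does not prove code is fair, but it makes hidden assumptions visible before users run into them.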

Fixing AI bias isn’t only about the code. It’s about the people writing the rules. A real committee needs to watch these projects.

Who Is Accountable for AI-Generated Code?

If an AI writes broken or unsafe code, who fixes it? The programmer who used it and the company that shipped it. The machine doesn’t get the blame; the people do.

Good engineering means you must know what your code does. But AI can make this confusing. A developer might not understand the code the AI gives them, but they use it anyway.

As highlighted by MIT News and the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL):

“Without a channel for the AI to expose its own confidence, ‘this part’s correct, this part, maybe double-check’, developers risk blindly trusting hallucinated logic that compiles, but collapses in production. … Our goal isn’t to replace programmers. It’s to amplify them. When AI can tackle the tedious and the terrifying, human engineers can finally spend their time on what only humans can do.” – MIT News

We see this in our training. Students sometimes copy and paste AI code without reading it. They think it works, so they use it. This is risky. They might be putting broken or unsafe code into a project.

On forums like r/gamedev, programmers talk about this. They worry about using AI as a “crutch.” This means relying on AI so much that you forget how to solve problems yourself.

The main accountability problems are:

- Developers can’t explain how the AI’s code works.

- It’s unclear if the tool maker or the programmer is to blame.

- It’s hard to track where the code originally came from.

Good companies fix this with clear rules. Many now make developers say when they used an AI to write code. They also require other people to review all AI-written code before it’s used.
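One way a team might enforce these two rules is a small check on commit messages. The sketch below assumes Python and a made-up convention: an `AI-Assisted: yes/no` trailer on every commit, plus a `Reviewed-by:` trailer whenever AI was used. The `AI-Assisted` trailer name and both function names are hypothetical, not a standard.

```python
def parse_trailers(message: str) -> dict[str, list[str]]:
    """Collect 'Key: value' lines from the last paragraph of a commit message."""
    last_block = message.strip().split("\n\n")[-1]
    trailers: dict[str, list[str]] = {}
    for line in last_block.splitlines():
        key, sep, value = line.partition(":")
        key = key.strip()
        # Trailer keys are single tokens such as "Reviewed-by".
        if sep and key and " " not in key:
            trailers.setdefault(key.lower(), []).append(value.strip())
    return trailers


def policy_problems(message: str) -> list[str]:
    """Return a list of policy violations; an empty list means the commit passes."""
    trailers = parse_trailers(message)
    problems = []
    if "ai-assisted" not in trailers:
        problems.append("missing AI-Assisted trailer (yes/no)")
    elif any(v.lower() == "yes" for v in trailers["ai-assisted"]):
        if "reviewed-by" not in trailers:
            problems.append("AI-assisted change needs a Reviewed-by trailer")
    return problems


ok_message = (
    "Add CSV export\n\n"
    "Parser drafted with an AI assistant.\n\n"
    "AI-Assisted: yes\n"
    "Reviewed-by: Dana <dana@example.com>"
)
unreviewed_message = "Add CSV export\n\nAI-Assisted: yes"
undeclared_message = "Fix typo"

for msg in (ok_message, unreviewed_message, undeclared_message):
    print(policy_problems(msg))
```

A check like this only confirms that the disclosure and the reviewer are recorded. It cannot tell whether the review was careful, so it supports the rule rather than replacing it.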

Another good practice is using training data that has clear, open licenses. This helps avoid legal trouble later.

The main idea is simple: humans must stay in control. The AI is just a tool.

How Could AI Code Generation Affect Software Engineering Jobs?

AI coding tools are changing programmers’ jobs. They do the simple, boring tasks very fast. This changes what skills companies need.

Studies show AI can make routine coding about 55% faster. This lets developers finish simple work quickly.

This changes how teams are built. Jobs for new programmers often involve writing simple code and running basic tests. AI can do that now.

We see this in our bootcamp. AI can set up the basic structure of a program in seconds. This used to be a key learning task for beginners. Now, they might not get that practice. They could miss out on learning core skills.

A few big changes are happening:

- Automation of simple, repetitive coding.

- Fewer jobs focused only on basic tasks.

- More need for people who can design big systems and manage AI tools.

Some experts think nearly half of all entry-level office jobs could be changed by automation in the next ten years.

But new jobs are appearing too. Programmers are now needed to plan systems, manage AI tools, and check the AI’s work. This matters even more as teams learn how to build custom AI coding agents that automate parts of testing, debugging, and code generation.

The big ethical question is this: as companies save time and money with AI, how will they help their workers learn new skills? They need to support their teams through this change.

What Regulations Govern AI-Generated Code?

New laws are being made around the world for AI that writes code. These laws ask for more openness, checks for bias, and clear labels.

Rules for AI are changing fast.

In the United States, laws like the California AI Safety Act make companies explain what data they used to train their AI models.

In a recent analysis by ACM (Association for Computing Machinery):

“The most important implication is that AI developers prima facie bear some measure of responsibility for some of the unintended uses and outcomes of their AI systems. This prima facie responsibility holds even if the developers did not want their AI to produce those outcomes, as long as such outcomes were reasonably foreseeable.” – ACM

A big European law, the EU AI Act, will require clear labels on certain kinds of AI-generated content. Parts of this law are expected to start in August 2026.

Singapore’s AI Governance Framework focuses on responsible development. It asks for transparency and keeping a human in charge.

The U.S. Federal Trade Commission also says companies using AI must not trick people or be unfair.

The main trends in new laws are:

- Forcing companies to report how their AI works.

- Requiring checks for bias.

- Making developers document their training data.

- Setting clear standards for who is responsible.

These rules show that governments now treat AI like other critical infrastructure, such as bridges or power grids. It needs watching.

What Practical Strategies Reduce Ethical Risks in AI Code Generation?

Companies can lower the risks of AI code by using good rules, safe coding habits, and checking tools.

Using AI ethically means more than just turning it on. You need a plan.

In our bootcamp, the first rule is to always use secure coding practices. We treat code from an AI the same as code from a stranger on the internet. We check every line before it can be used.

We also use special tools to help.

- Security scanners look for hidden bugs and holes.

- Formal verification uses math to prove the code is correct.

- Automated tests make sure the code does what it should.

- License scanners check if the code is allowed to be used.
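To show the idea behind a security scanner, here is a toy version, assuming Python and its built-in `ast` module. It parses code without running it and flags calls from a short, hypothetical denylist (`RISKY_CALLS`). Real scanners check far more than this, so treat it as an illustration only.

```python
import ast

# Hypothetical, very small denylist of calls that deserve a closer look.
RISKY_CALLS = {"eval", "exec", "os.system", "pickle.loads"}


def call_name(node: ast.Call) -> str:
    """Return 'name' or 'module.name' for simple calls, else an empty string."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        return f"{func.value.id}.{func.attr}"
    return ""


def scan(source: str) -> list[tuple[int, str]]:
    """Return (line number, call name) for each risky call in the source."""
    results = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and call_name(node) in RISKY_CALLS:
            results.append((node.lineno, call_name(node)))
    return sorted(results)


# Example of AI-generated code that runs fine but is unsafe.
generated = '''\
import os
def run(cmd, expr):
    os.system(cmd)
    return eval(expr)
'''

findings = scan(generated)
for lineno, name in findings:
    print(f"line {lineno}: risky call to {name}")
```

Because the scanner only reads the syntax tree, it never executes the untrusted code. That is the same reason static checks fit well before any AI output is run or merged.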

Teams that use these kinds of plans say their software becomes safer and more reliable.

| Strategy | Main Goal | What It Looks Like |
| --- | --- | --- |
| Human code review | Keep people in charge | Another developer must approve all AI code. |
| Security scanning | Find security holes | Running the code through a scanner before using it. |
| License auditing | Avoid legal problems | A tool checks if the code’s license is safe to use. |
| Ethical governance | Make sure it’s used right | A team meets to set rules for how AI is used. |

Putting all these steps together helps catch problems. It lets teams use AI to work faster, but still keeps their code safe and legal.

FAQ

How does AI code generation affect intellectual property rights and open-source code use?

Artificial intelligence systems learn from large training data sets that often include open-source code and public GitHub repositories. This creates ethical concerns about intellectual property rights and license compatibility.

When AI code generation produces similar code, developers must check copyright laws and licensing rules. Ethical guidelines and ethical AI practices help software development teams respect creators and manage software reuse responsibly.

Can AI-generated content introduce security vulnerabilities in software coding?

AI-generated content can introduce security vulnerabilities during software coding if developers do not review the output carefully. Artificial intelligence models trained on mixed training data may reproduce unsafe patterns, including malicious code or SQL injection risks.

Teams reduce these risks through code review, automated testing frameworks, static analysis security tools, and strong software testing. These steps protect data security and reduce privacy concerns.

Why do bias and training data matter for Generative AI in software development?

Generative AI systems rely on machine learning, deep learning, and large language models that learn from training data. If the training data contains bias or inaccurate information, the AI-generated content may repeat those problems.

This creates ethical concerns in software development. Teams use data analysis, bias mitigation, and ethical AI practices to improve fairness and follow ethical norms in AI research and development.

What rules guide ethical considerations and AI governance for modern AI systems?

Many countries and organizations are creating rules to guide ethical considerations in artificial intelligence. Regulations such as the EU AI Act and frameworks like the Singapore AI Governance Framework focus on privacy and security, national security, and responsible AI system operations.

Companies often create an AI governance committee to monitor AI product and service development and ensure compliance with ethical guidelines.

Will AI-driven code generation change programming skills and workforce transition?

AI-driven code generation is changing software development workflows. AI tools help developers write code faster and support tasks like automated testing and code review.

However, strong programming skills and human control remain essential to ensure code correctness and prevent security vulnerabilities. This shift may lead to workforce transition, but developers will still guide artificial intelligence responsibly.

The Future of Ethical AI Code Generation Depends on Developers

The future of AI code generation will not be written by machines alone. It will be written by the people who decide how those machines are used. Every line of AI-assisted code carries a choice. A shortcut, or a safeguard. Speed matters, yes, but trust matters more. The tools will keep getting smarter, but responsibility still belongs to the humans behind the keyboard.

Start where it counts. Build with Secure Coding Practices, ask better questions, and keep human judgment in the loop. If you want real skills to do that, you can join the Secure Coding Practices Bootcamp and learn how developers ship safer code every day. The future of software is not just faster code. It is code people trust.

References

- https://news.mit.edu/2025/can-ai-really-code-study-maps-roadblocks-to-autonomous-software-engineering-0716

- https://cacm.acm.org/opinion/multi-use-ai-and-moral-responsibility/