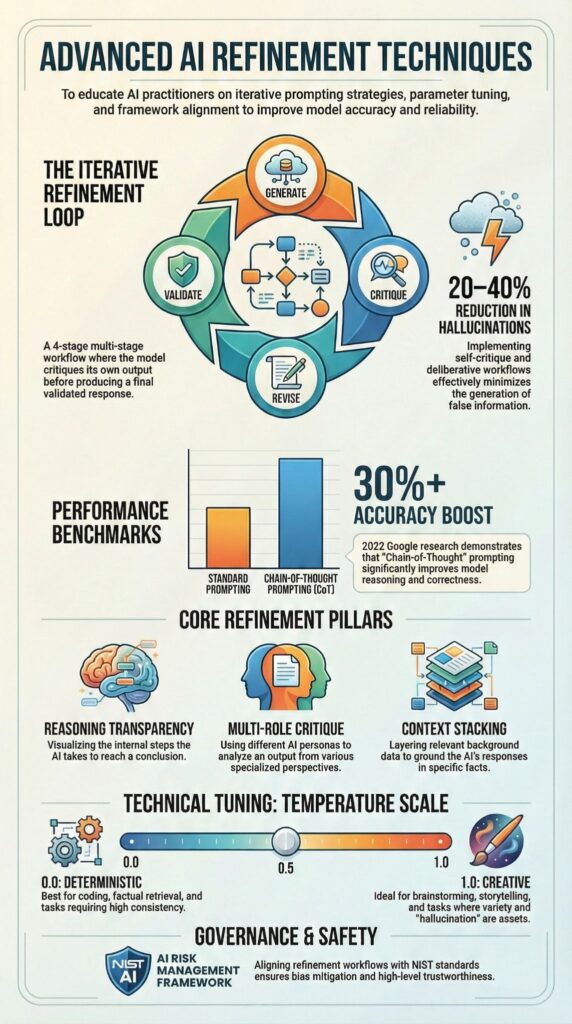

What are advanced AI refinement techniques? They are structured, multi-stage workflows that improve AI outputs through step-by-step reasoning, iterative feedback, and layered critique. Instead of accepting the first response, teams apply constraints, reasoning steps, and revision passes to reduce errors and hallucinations.

Research from Google in 2022 showed that Chain-of-Thought prompting increased arithmetic reasoning accuracy by over 30 percent in large models.

In our Secure Coding Practices, we see similar gains: applying iterative refinement in code generation lowers bug rates and strengthens output reliability. Keep reading to see how these methods turn “sounds right” into defensible results.

Quick Wins – Advanced AI Refinement That Works

- Iteration beats one-shot prompting

- Chain-of-Thought (CoT) improves reasoning accuracy

- Self-critique transforms plausible answers into defensible ones

Why Does Iterative Refinement Beat One-Shot Prompting?

Iterative refinement improves reliability by layering feedback, structured revisions, and verification steps.

In fast-moving teams experimenting with vibe coding, refinement loops are often what separate quick drafts from production-safe outputs. Single-pass prompts often produce outputs that look plausible but miss subtle errors.

In our experience teaching Secure Coding Practices, multi-round refinement consistently reduces hallucinations and logic mistakes, creating outputs we can trust in production workflows.

Each pass acts as a filter against inconsistency, bias, or missing constraints. We rarely deploy AI-generated code without at least two refinement rounds:

- First pass drafts the solution

- Second applies failure-mode analysis and bug-fixing loops

- Third (optional) pass performs output defense and constraint verification

A direct comparison shows the difference:

| Feature | One-Shot | Iterative |

| Accuracy | Variable | Higher with feedback |

| Control | Limited | Adjustable |

| Error Handling | Reactive | Proactive |

| Output Quality | Inconsistent | Refined |

Other key mechanisms we rely on include:

- Critique cycles to identify flaws early

- Constraint tightening after the first draft

- Context stacking in follow-up prompts

Iterative refinement turns prompting into a structured workflow, making outputs far more defensible and production-ready.

How Does Chain-of-Thought Prompting Improve Reasoning?

Chain-of-Thought (CoT) prompting improves logical accuracy by guiding models to reason step by step. Instead of jumping straight to an answer, the model lays out intermediate steps, making reasoning transparent and verifiable.

In a recent analysis by Google Cloud :

“Supervised Fine‑Tuning (SFT) emerges as one of the options to bridge this gap, offering the ability to tailor these powerful models for specialized tasks, domains, and even stylistic nuances.” – Google Cloud

When the AI explains why it chose a particular approach, developers can spot errors faster and refine solutions more precisely. Step-by-step reasoning also reduces hidden contradictions, making verification and entailment checks possible.

A practical CoT workflow includes:

- Ask the model to show reasoning step by step

- Break complex problems into smaller, manageable components

- Encourage intermediate verification before final outputs

In real-world application, CoT works best for math tasks, structured research synthesis, complex debugging, and even legal reasoning. By surfacing reasoning, we increase confidence in AI outputs and reduce the time spent on trial-and-error cycles, allowing teams to focus on higher-level design and secure implementation.

What Are Self-Critique and Deliberative Workflows?

Self-critique workflows prompt AI systems to review and revise their own outputs before they are finalized. Deliberative refinement pushes results from “plausible” to defensible by layering structured attack and revision cycles. In our experience, treating AI outputs like junior developer drafts, expecting review, testing, and iteration, dramatically improves reliability.

Deliberative alignment adds a second layer of reasoning. The AI critiques its own work, flags biases or unsupported claims, and rewrites responses with evidence or constraint anchoring. Benchmark studies show that multi-pass refinement with critique chains and constraint enforcement can reduce hallucinations by 20–40 percent.

As noted by Google Cloud

“Implement a Reranker to reorder retrieved documents based on relevance.” – Google Cloud

A practical multi-round workflow often looks like this:

- Generate an initial output

- Attack it from expert or adversarial perspectives

- Identify bias, factual gaps, or inconsistencies

- Revise with constraints

- Conduct verifiability and robustness checks

Techniques like perspective switching, counterfactual prompting, and ambiguity resolution strengthen the process. In our Secure Coding Practices courses, we combine these steps with red-teaming and adversarial testing. The result is process supervision, not blind trust, AI becomes a collaborator, not an oracle.

How Do Temperature Control and Parameter Tuning Affect Output Quality?

Temperature tuning shapes AI output by balancing creativity and precision. In more advanced workflows, temperature control is paired with refinement passes to stabilize outputs without sacrificing exploration.

Lower values, around 0.2, produce factual, deterministic results, while higher values, around 0.8, increase variability and exploration. In our experience, temperature settings directly affect reliability, misused high temperatures often cause incoherent outputs in early code drafts.

Temperature adjusts the token probability distribution:

- Narrow settings reduce randomness, ideal for factual tasks or code generation

- Broader settings let the model explore ideas, useful for brainstorming or design ideation

- Iterative refinement works best when paired with temperature adjustments to improve output reliability

| Temperature | Output Style |

| 0.0–0.3 | Deterministic, factual |

| 0.4–0.6 | Balanced |

| 0.7–1.0 | Creative, variable |

In practice, we apply low temperatures for structured outputs such as code or research summaries. High temperatures are reserved for creative exploration or conceptual ideation. Key best practices include:

- Start with control to reduce errors

- Adjust incrementally for creativity

- Combine with iterative refinement for consistency

This approach ensures outputs remain predictable when needed while still allowing AI to explore solutions safely.

What Are Meta-Prompts, Reverse Prompting, and Echo Prompting?

Meta-prompts guide AI to improve the prompt itself instead of only the output.

These techniques become critical when teams integrate vibe coding across shared systems and need consistent behavior over long interactions. Reverse prompting uncovers hidden assumptions, while echo prompting feeds prior outputs back into the workflow to maintain coherence across multi-turn interactions.

In our experience, combining these methods reduces token waste, improves consistency, and makes long agentic workflows easier to manage. Communities on Quora and Reddit often highlight role-based prompts, like combining engineer and analyst personas, to extract richer, multi-perspective insights.

Key techniques include:

- Meta-prompts: AI edits the instruction itself, not the answer

- Reverse prompting: Ask what question would produce a given answer to expose assumptions

- Echo prompting: Feed earlier outputs back into context stacking for continuity

Few-shot learning with 2–3 examples often boosts format compliance and task accuracy. In our Secure Coding Practices bootcamps, we layer these methods with hierarchical and modular prompting frameworks. Meta-learning prompts help developers refine instructions for complex coding workflows, making outputs more precise and verifiable.

What Are Real-World Edge Cases and Failure Points?

Credits : Edification Hub AI

Advanced AI refinement techniques often break down without proper context stacking, explicit constraints, and structured verification.

In real-world projects, we’ve seen over-creative outputs and missing defense rounds as the main failure points. When prompts lack specificity enhancement, vagueness pruning, or entailment checks, AI outputs can drift and introduce subtle errors.

Common failure points we encounter include:

- Overly high temperature leading to inconsistent outputs

- Missing conditional logic in generated code

- Skipping verification stages entirely

- Ignoring base-rate neglect prevention

- Omitting bias detection loops

Professional safeguards rely on disciplined workflows. Framework-first prompting, multi-turn outlining before expansion, and explicit evidence anchoring reduce risk.

In our Secure Coding Practices courses, we treat refinement as an ongoing process. Each output passes through hallucination mitigation checks, failure mode analysis, and robustness testing before being deployed.

We’ve observed that consistency builds trust. Teams using recursive refinement rather than isolated prompts see fewer edge-case failures and higher reliability. AI-assisted workflows become predictable only when repeated verification and structured iteration are embedded into daily practice.

FAQ

What advanced prompt engineering techniques improve AI output quality?

Advanced prompt engineering improves AI output by applying structured control methods. Techniques such as precision prompting, constraint injection, and meta-prompting clearly define scope, format, and boundaries.

Methods like multi-turn outlining, prompt chaining, and context stacking maintain logical continuity across steps. Combining few-shot learning with role-playing prompts shapes tone and structure. These practices reduce ambiguity, increase specificity enhancement, and produce consistent, high-quality responses.

How does chain of thought enhance reasoning accuracy?

Chain of thought improves reasoning by requiring explicit, step-by-step reasoning instead of short conclusions. Techniques such as tree of thoughts, logical decomposition, and scaffolded reasoning divide complex tasks into smaller logical units.

Through CoT distillation and structured critique chains, weak reasoning paths are identified and corrected. This process strengthens entailment verification, contradiction detection, and consistency enforcement, resulting in clearer and more defensible conclusions.

What strategies reduce hallucinations and improve reliability?

Reducing hallucinations requires systematic validation methods. Techniques such as hallucination mitigation, verifiability checks, and evidence anchoring ground responses in verifiable information. Teams implement bias detection loops, output defense rounds, and structured error correction cycles to identify weaknesses.

Applying uncertainty quantification, confidence calibration, and truthfulness elicitation improves transparency. Consistency enforcement and coherence boosting further stabilize outputs and reduce unsupported claims.

How does iterative refinement strengthen AI performance?

Iterative refinement strengthens performance through controlled feedback loops. Methods such as self-critique, recursive refinement, and adaptive feedback progressively improve clarity and structure. Techniques including reactive refinement, multi-perspective synthesis, and constraint satisfaction ensure outputs meet defined standards.

Teams conduct ablation testing and failure mode analysis to isolate weaknesses. This systematic cycle increases precision, strengthens logical structure, and improves fluency optimization.

What alignment techniques ensure safer and more controllable AI systems?

Alignment techniques ensure safer and more controllable systems by combining governance and technical safeguards. Approaches such as deliberative alignment, constitutional AI, and structured process supervision guide model behavior.

Advanced methods include reward modeling, RLHF variants, and layered safety layers to reinforce desired outcomes. Organizations apply red-teaming prompts, scalable oversight, and outcome oversight to test vulnerabilities and enforce responsible, stable system performance.

Advanced AI Refinement Techniques in Practice

Advanced AI refinement techniques combine structured prompting, iterative feedback, parameter tuning, and verification to improve accuracy, reliability, and real-world alignment.

Research from Google and standards from NIST show disciplined refinement outperforms one-shot prompting. In our Secure Coding Practices, iterative review, self-critique, and defense rounds make AI outputs more stable and verifiable.

This hands-on bootcamp trains developers in secure coding, covering OWASP Top 10, input validation, secure authentication, and safe dependency use.

Explore Secure Coding Practices for structured AI refinement.

References

- https://cloud.google.com/blog/products/ai-machine-learning/supervised-fine-tuning-for-gemini-llm

- https://codelabs.developers.google.com/codelabs/production-ready-ai-with-gc/8-advanced-rag-methods/advanced-rag-methods