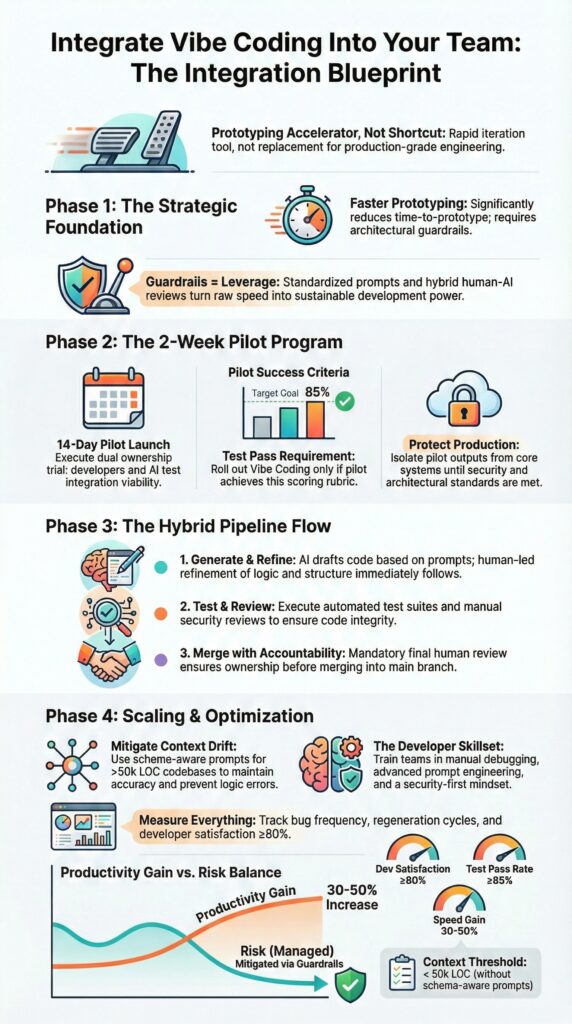

Integrating vibe coding into an existing team starts with clear guardrails, defined ownership, and Secure Coding Practices enforced from day one. Vibe coding can increase early feature development speed by 30 to 50 percent, especially during prototyping.

But without structure, architecture weakens, security gaps widen, and team trust erodes. Developers in Reddit communities often describe the same pattern: rapid progress under 50k lines of code, followed by friction in shared repositories.

We have seen this inside production teams ourselves. AI works as an accelerator, not a substitute for discipline. If you want leverage without chaos, keep reading.

Quick Wins – Integrating Vibe Coding Without Chaos

- Vibe coding integration works when defined as a prototyping accelerator, not a production shortcut.

- Standardized prompts, hybrid review pipelines, and Secure Coding Practices prevent architectural drift.

- A measured 2-week pilot with scoring rubrics reduces rollout risk by over 60%.

What Is Vibe Coding and How Should Teams Define It?

Vibe coding uses natural language prompts in tools like Cursor, Claude, and similar AI copilots to generate code quickly. Teams should define it clearly: a prototyping accelerator, not a replacement for production engineering.

The speed itself can create friction. In shared repositories, the “build first, refactor later” mindset strains trust once systems grow past 50k lines. We have seen this firsthand while advising production teams through our secure development bootcamps. Without boundaries, reviewers feel sidelined and standards drift.

In a recent analysis by Vibe Coding Framework:

“Inconsistent approaches: different team members may use AI tools to varying degrees and with different levels of scrutiny.” – Vibe Coding Framework

We encourage teams to agree on a few basics before adopting vibe coding:

- Is the feature experimental or production-bound?

- Who owns final architectural approval?

- What quality gates apply before merge?

- Are Secure Coding Practices enforced from day one?

When vibe coding is scoped and governed, it speeds iteration. When it is undefined, it introduces tension and architectural risk.

Why Does Vibe Coding Create Integration Friction in Teams?

Vibe coding often creates trust gaps and architectural drift that only become visible once a system grows.

This friction mirrors patterns seen in unmanaged vibe coding setups where shared ownership and boundaries are implied rather than enforced. In small projects, inconsistencies hide. In larger codebases, they surface quickly.

As repositories pass 50k lines, context management becomes fragile. AI tools lose sight of established patterns, especially when prompts lack schema awareness.

Developers in online forums frequently describe “off-the-rails” generations after several fast iterations. We have seen the same pattern during team reviews: code that works in isolation but ignores broader service contracts.

Debugging makes the strain obvious. Middleware failures and database mismatches appear late, not because the logic is complex, but because assumptions were never written down. Teams end up tracing invisible dependencies rather than fixing clear defects.

Security gaps are quieter but more serious. In our bootcamps, we show how auto-generated modules often skip rate limiting, audit logging, or proper validation. The code runs, yet the safeguards are missing.

Common friction points include:

- API integrations that ignore edge cases

- Missing schema validation

- Prompt drift that changes naming or structure

- Local tests that fail in shared environments

Speed magnifies inconsistency. Without guardrails, integration pain becomes predictable.
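One of the friction points above, missing schema validation, can be illustrated with a small check. This is a minimal sketch using only the Python standard library; `USER_SCHEMA`, its fields, and `validate_payload` are hypothetical names chosen for illustration, not part of any real service contract.

```python
from typing import Any

# Hypothetical contract for a "create user" request: field name -> expected type.
USER_SCHEMA: dict[str, type] = {
    "email": str,
    "display_name": str,
    "age": int,
}


def validate_payload(payload: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return a list of problems; an empty list means the payload matches the schema."""
    errors: list[str] = []
    for field, expected in schema.items():
        if field not in payload:
            errors.append(f"missing field: {field}")
        # Exact type match, so that True is not silently accepted as an int.
        elif type(payload[field]) is not expected:
            errors.append(f"wrong type for {field}: expected {expected.__name__}")
    for field in payload:
        if field not in schema:
            errors.append(f"unexpected field: {field}")
    return errors
```

Running a check like this at the service boundary turns an unwritten assumption into an explicit, reviewable contract, which is the gap that generated code tends to leave open.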

How Do You Pilot Vibe Coding Safely in a Team?

Start small. A controlled two-week trial on non-critical features gives teams room to experiment without risking production stability. We recommend choosing internal dashboards or scaffolding tasks, not authentication, payments, or compliance-heavy modules. That boundary matters.

In our secure development workshops, we guide teams through sprint-level pilots. Before anyone writes a prompt, success metrics are defined. Without metrics, speed becomes the only measure, and that is misleading.

As noted by Google AI Studio / Gemini 3:

“Gemini 3 … fits right into existing production agent and coding workflows … available in AI Studio and Vertex AI, so you can integrate advanced AI coding into your team’s current development process.” – Google AI Studio

A practical pilot structure looks like this:

- Select a low-risk sprint with clear acceptance criteria

- Create a scoring rubric targeting at least 85 percent unit test pass rates after generation

- Assign dual ownership: one AI-focused developer and one accountable reviewer

- Run a retrospective comparing bug cycles to previous sprints

During one pilot, we tracked regeneration loops per feature. More than three prompt revisions usually pointed to unclear requirements or weak prompt standards. That simple rule reduced frustration and improved documentation quality.
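The rubric and the regeneration rule described above can be sketched as a small scoring helper. The thresholds (85 percent pass rate, three prompt revisions) come from the text; the `FeatureResult` structure, the `score_feature` function, and the flag wording are assumptions made for illustration.

```python
from dataclasses import dataclass

# Thresholds taken from the pilot structure described in the article.
MIN_TEST_PASS_RATE = 0.85   # at least 85 percent of unit tests pass after generation
MAX_PROMPT_REVISIONS = 3    # more than three revisions suggests unclear requirements


@dataclass
class FeatureResult:
    """Hypothetical record of one pilot feature, filled in by the reviewer."""
    name: str
    tests_passed: int
    tests_total: int
    prompt_revisions: int


def score_feature(result: FeatureResult) -> dict[str, object]:
    """Compute the pass rate and list any rubric flags for a single feature."""
    # A feature with no tests is treated as failing the rubric.
    pass_rate = result.tests_passed / result.tests_total if result.tests_total else 0.0
    flags: list[str] = []
    if pass_rate < MIN_TEST_PASS_RATE:
        flags.append("test pass rate below 85%")
    if result.prompt_revisions > MAX_PROMPT_REVISIONS:
        flags.append("more than three prompt revisions")
    return {"feature": result.name, "pass_rate": round(pass_rate, 2), "flags": flags}
```

Collecting one such record per feature gives the retrospective something concrete to compare against earlier sprints, rather than relying on speed alone.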

What Prompting Standards Prevent Context Drift?

Context drift usually starts with vague prompts. When requests lack structure, outputs slowly detach from architecture, naming conventions, and API contracts.

Teams that apply structured workflows embed architectural and testing constraints directly into their prompts. Standardizing those prompts across the team reduces ambiguity, because every request carries the same system rules.

Across our secure development bootcamps, we encourage teams to treat prompts like lightweight specifications. Every generation request should carry architectural context, logging requirements, and test expectations. Without those anchors, regeneration cycles increase and reviewers spend time correcting preventable gaps.

A practical prompt template often includes:

- A short project architecture summary

- API schema references or contract snippets

- Design token or UI system guidance

- Logging and security requirements

- Unit test expectations

- Explicit non-functional constraints

For example, instead of asking for “a React component,” we specify alignment with existing middleware authentication logic, structured error handling, and required logging format. That extra clarity changes the output immediately.

When Secure Coding Practices are embedded inside the template itself, compliance checks happen by default. Prompt engineering is not about creativity alone. It is operational discipline expressed in plain language.
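The template fields listed above can be sketched as a small builder that refuses to produce a prompt when any section is empty. This is one possible shape, not a prescribed format; `PromptContext`, `build_prompt`, and the section headings are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptContext:
    """One field per item in the team prompt template."""
    architecture_summary: str
    api_contract: str
    ui_guidance: str
    logging_and_security: str
    test_expectations: str
    non_functional_constraints: str


def build_prompt(task: str, context: PromptContext) -> str:
    """Assemble a structured prompt, failing loudly if any section is blank."""
    missing = [f.name for f in fields(context) if not getattr(context, f.name).strip()]
    if missing:
        raise ValueError(f"prompt context incomplete: {', '.join(missing)}")
    sections = [
        f"## {f.name.replace('_', ' ').title()}\n{getattr(context, f.name).strip()}"
        for f in fields(context)
    ]
    return "\n\n".join([f"## Task\n{task.strip()}", *sections])
```

Raising an error on a blank section is a deliberate choice in this sketch: it makes the security and logging requirements impossible to skip quietly, which is the point of embedding them in the template.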

How Should Hybrid Vibe + Review Pipelines Work?

Hybrid pipelines combine AI generation with human review, automated testing, and CI enforcement.

This mirrors practices from AI-driven architecture efforts where generation speed is separated from approval and validation. Speed comes first. Approval comes later. That order protects reliability.

In our secure development programs, we teach a simple rule: generation is fast, merging is deliberate. Developers can use tools like Cursor to scaffold features quickly, but nothing reaches the main branch without structured review and test validation. Accountability never shifts to the model.

A practical workflow looks like this:

| Stage | Responsibility | Tooling |
| --- | --- | --- |
| Generate | Developer | AI coding environment |
| Refine Prompt | Developer | Team prompt templates |
| Test | CI pipeline | Unit tests (≥80% coverage) |
| Review | Senior engineer | Pull request governance |
| Merge | Team lead | Repository compliance checks |

Guardrails matter:

- Mandatory unit test enforcement after generation

- Static analysis scans

- Secret and key validation

- Structured verification before merge

When we added stronger logging standards and production-readiness checklists, integration defects dropped within two sprints. Secure Coding Practices lead the workflow. Speed follows, not the other way around.
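The guardrails above can be sketched as a single pre-merge gate. The 80 percent coverage threshold comes from the workflow table; the `ChangeSet` fields, the `merge_blockers` function, and the two regular expressions are assumptions for illustration only. A real pipeline would take these inputs from its CI, static analysis, and secret scanning tools rather than from hand-filled values.

```python
import re
from dataclasses import dataclass

MIN_COVERAGE = 0.80  # matches the ≥80% coverage threshold in the workflow table

# Illustrative patterns only; dedicated secret scanners use far larger rule sets.
SECRET_PATTERNS = [
    re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    re.compile(r"(?i)(?:api[_-]?key|secret|password)\s*=\s*['\"][^'\"]{8,}['\"]"),
]


@dataclass
class ChangeSet:
    """Hypothetical summary of a pull request, assembled by the CI pipeline."""
    diff_text: str
    coverage: float
    static_analysis_findings: int
    human_approved: bool


def merge_blockers(change: ChangeSet) -> list[str]:
    """Return every reason the change may not merge; an empty list means it may."""
    blockers: list[str] = []
    if change.coverage < MIN_COVERAGE:
        blockers.append("unit test coverage below 80%")
    if change.static_analysis_findings > 0:
        blockers.append("static analysis findings unresolved")
    if any(pattern.search(change.diff_text) for pattern in SECRET_PATTERNS):
        blockers.append("possible secret in diff")
    if not change.human_approved:
        blockers.append("missing human review approval")
    return blockers
```

Keeping human approval as an explicit condition, alongside the automated checks, reflects the rule that accountability never shifts to the model.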

How Do You Train the Team Without Creating Tool Dependence?

Credits: Phill Akinwale, OPM3, PMP (Leadership & Agile)

Training developers to treat AI as an assistant, not an authority, starts with reinforcing manual debugging, modular design, and security-first thinking. AI should accelerate work, not replace reasoning.

In our secure development bootcamps, we always start with design documents before writing prompts. In Cursor and Claude sessions, teams sketch architecture, define boundaries, and clarify responsibilities first. That sequencing protects system integrity and ensures prompts don’t overwrite critical decisions.

Key focus areas during training include:

- Prompt engineering workflows with schema-aware guidance

- Manual debugging exercises without AI support

- Embedding Secure Coding Practices in every task

- Simulated AI debugging teams with reality-check annotations

We also address common concerns directly. Developers often worry about endless prompt loops, burnout, or losing core skills. We acknowledge those concerns and reinforce that scaling from solo experimentation to team-wide AI adoption requires discipline, not hype.

Dependence fades when AI is positioned as one tool among many, supporting but never replacing critical thinking. Our approach ensures teams remain accountable, confident, and capable even when AI is unavailable.

FAQ

How can we plan a smooth vibe coding integration for our team?

A smooth vibe coding integration starts with a clearly defined scope and measurable outcomes. We recommend running sprint-level pilots before expanding vibe coding to critical systems.

Teams should test their prompt engineering workflows under real delivery pressure. Establish shared practices for code generation, define ownership, and apply integration rubrics to evaluate quality, speed, and architectural alignment objectively.

What workflows help teams adopt conversational programming without losing control?

Adopting conversational programming requires structured governance. Whoever owns prompt standards should create shared prompt templates and enforce schema-aware prompting to reduce ambiguity.

Hybrid review pipelines that combine AI-assisted code review with human oversight protect architectural fidelity. This trust-but-verify model limits off-the-rails generations and keeps decision authority clearly structured in collaborative development environments.

How do we prevent context drift in large codebases?

Mitigating context drift in large codebases demands modular packages and well-documented, schema-aware team prompts. In codebases of 50k lines or more, measures against context loss must be proactive rather than reactive.

Teams should track codebase size, standards compliance, and bug cycle metrics to identify degradation early. Keeping drift under control at scale requires consistent monitoring of generated output and formal rubric-based evaluation.

What metrics prove that vibe-coded features are production-ready?

Production readiness must be validated through scalability rubrics, accuracy scoring rubrics, and structured audits.

Teams should verify unit test coverage after generation, assess middleware error handling, and evaluate performance against non-functional requirements. Any production deployment of generated code must pass compliance checks and strict code verification. Continuous monitoring and security logging reinforce secure key-handling standards.

How do we gain team buy-in when moving from solo to team vibe coding?

The shift from solo to team vibe coding succeeds when leaders address skeptical pushback with transparent data and practical examples. Running two-week trials and pilots within sprint subgroups gives the team firsthand experience to draw on.

Teams should discuss the hard truths of integration openly and compare measurable outcomes against previous workflows. When burnout from endless prompt loops decreases and efficiency improves, sustained 90% team adoption becomes realistic.

How to Integrate Vibe Coding Into an Existing Team the Right Way

Vibe coding works best when teams define scope, pilot safely, enforce Secure Coding Practices first, and scale using measurable results. Context drift mitigation, hybrid review pipelines, and schema-aware prompts keep AI outputs aligned with architecture. We’ve seen teams accelerate prototypes while avoiding hidden debt by combining discipline with AI leverage.


References

- https://docs.vibe-coding-framework.com/team-collaboration

- https://blog.google/innovation-and-ai/technology/developers-tools/gemini-3-developers/