Using AI for complex software architecture design improves clarity, exposes trade-offs early, and accelerates structured decision-making while keeping human architects in control. Instead of relying on static diagrams and isolated reviews, teams generate multiple architecture options, test constraint scenarios, and evaluate service boundaries before production code begins.

In 2025 surveys shared within Reddit’s r/softwarearchitecture community, teams practicing Intelligence-Driven Development reported design cycles moving nearly 30% faster without lowering review standards.

The change is practical, not theoretical. AI supports pattern analysis at scale, but final judgment stays human. If you want architecture that survives growth without expensive rewrites, keep reading.

Quick Wins – Designing AI-First Architectures Right

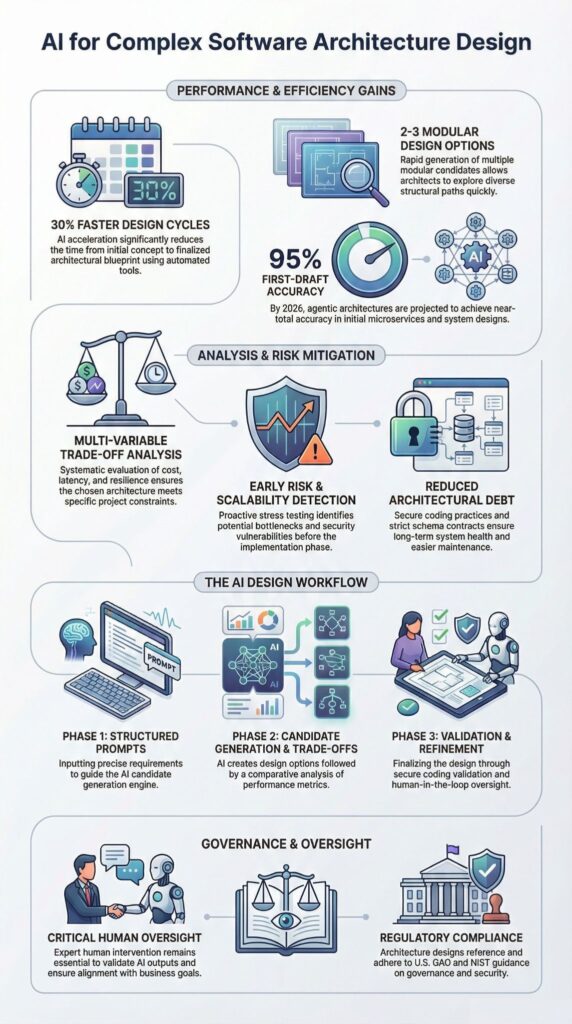

- AI-driven architecture design accelerates structured option generation and early risk detection.

- Modular AI blueprints and strict schema contracts reduce architectural debt and hallucination risks.

- Secure Coding Practices should anchor AI-first architectures from day one, not as an afterthought.

How Does AI Improve Complex Software Architecture Design?

AI improves complex architecture design by generating structured alternatives, exposing trade-offs early, and scanning large codebases for patterns before implementation begins. This mirrors practices in vibe coding where modular boundaries and controlled sessions help maintain clarity in large systems.

In our secure development bootcamps, we’ve watched teams move from whiteboard debates to concrete options in a single session.

Instead of arguing over abstract diagrams for days, we generate two or three viable blueprints in minutes, then review them against security and scalability constraints. Architects in professional forums have reported similar results, with early-stage planning cycles shortened by nearly 30 percent.

Another shift happens in pattern detection. With metadata-rich repositories and disciplined prompts, AI can surface coupling risks, domain overlap, and scaling blind spots that busy reviewers miss under deadline pressure. We’ve seen it flag risky service boundaries before a single deployment.

Core capabilities typically include:

- Architectural pattern detection across services

- Dependency visualization for microservice ecosystems

- Trade-off analysis across cost, latency, and resilience

- Structured stress testing of proposed designs

AI proposes. We validate. Long-term maintainability, security posture, and business alignment still depend on human judgment.
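To make the coupling checks above concrete, here is a minimal sketch of the kind of analysis that can run over a service dependency map before any model is asked to comment on it. The service names and the `DEPENDENCIES` map are hypothetical. The instability figure is the common fan-out / (fan-in + fan-out) ratio, used here as one possible signal rather than the method any particular tool applies.

```python
from collections import defaultdict

# Hypothetical service dependency map: service -> services it calls.
DEPENDENCIES = {
    "gateway": ["auth", "crm", "reporting"],
    "crm": ["auth", "billing"],
    "reporting": ["crm", "billing"],
    "billing": ["auth"],
    "auth": [],
}


def coupling_metrics(deps):
    """Return {service: (fan_in, fan_out, instability)}."""
    fan_in = defaultdict(int)
    for targets in deps.values():
        for target in set(targets):
            fan_in[target] += 1
    metrics = {}
    for service, targets in deps.items():
        fan_out = len(set(targets))
        total = fan_in[service] + fan_out
        # Instability near 1.0 means the service depends on much and is depended on by little.
        instability = fan_out / total if total else 0.0
        metrics[service] = (fan_in[service], fan_out, instability)
    return metrics


def find_cycle(deps):
    """Return one dependency cycle as a list of services, or None."""
    WHITE, GREY, BLACK = 0, 1, 2
    color = {service: WHITE for service in deps}
    stack = []

    def visit(node):
        color[node] = GREY
        stack.append(node)
        for nxt in deps.get(node, []):
            state = color.get(nxt, WHITE)
            if state == GREY:  # back edge: nxt is already on the current path
                return stack[stack.index(nxt):] + [nxt]
            if state == WHITE:
                found = visit(nxt)
                if found:
                    return found
        stack.pop()
        color[node] = BLACK
        return None

    for service in deps:
        if color[service] == WHITE:
            cycle = visit(service)
            if cycle:
                return cycle
    return None
```

Feeding the resulting metrics and any detected cycle into a review prompt gives the model facts to reason about instead of asking it to infer the graph from source code.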

How Can AI Help Avoid Architectural Debt?

AI helps reduce architectural debt when it tests assumptions before those assumptions harden into code. Teams applying large-scale project practices maintain strict modularity and session isolation, preventing hidden dependencies from growing as the system scales.

We have learned this the hard way. In past projects, delayed architectural decisions led to rewrites that cost more than disciplined design upfront. That experience shapes how we teach architecture inside our secure development bootcamps. Design pressure should happen before production, not after.

One method we use is structured multi-model debate. Different models generate competing approaches, and we evaluate where they disagree. Another tactic is explicit trade-off simulation. Cost, latency, and resilience are tested against projected load, often at ten times expected scale. Weak boundaries surface quickly.

A lightweight workflow looks like this:

- Clarify ambiguous requirements with focused architecture prompts

- Generate two to three modular blueprints

- Run trade-off analysis with defined cost and latency metrics

- Stress-test scaling strategies under projected growth
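The last step of the workflow can be approximated with a back-of-the-envelope capacity model before any real load test exists. The sketch below is an assumption-heavy illustration: `ServiceProfile`, its fields, and the sample numbers are hypothetical, and the linear scaling model ignores queuing effects and shared bottlenecks.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceProfile:
    name: str
    calls_per_request: float  # calls to this service triggered by one user request
    rps_per_replica: float    # sustained throughput of a single replica
    max_replicas: int         # scaling ceiling (quota, budget, or shard limit)


def replicas_needed(profile, user_rps):
    """Replicas required to serve user_rps under a simple linear model."""
    return math.ceil(user_rps * profile.calls_per_request / profile.rps_per_replica)


def stress_test(profiles, expected_rps, multiplier=10):
    """Return {service: (needed, ceiling)} for services that cannot scale far enough."""
    target_rps = expected_rps * multiplier
    report = {}
    for profile in profiles:
        needed = replicas_needed(profile, target_rps)
        if needed > profile.max_replicas:
            report[profile.name] = (needed, profile.max_replicas)
    return report


# Hypothetical blueprint under review.
PROFILES = [
    ServiceProfile("auth", calls_per_request=1.0, rps_per_replica=500, max_replicas=3),
    ServiceProfile("crm", calls_per_request=2.0, rps_per_replica=400, max_replicas=40),
    ServiceProfile("reporting", calls_per_request=0.25, rps_per_replica=50, max_replicas=12),
]

if __name__ == "__main__":
    for name, (needed, ceiling) in stress_test(PROFILES, expected_rps=200).items():
        print(f"{name}: needs {needed} replicas at 10x load, ceiling is {ceiling}")
```

With these sample numbers, only the hypothetical `auth` service exceeds its ceiling at ten times the expected load, which is the kind of weak boundary the workflow is meant to surface early.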

Black-box hosted models introduce uncertainty about how a suggestion was produced, while smaller, self-hosted models give teams more control over deployment. Either way, Secure Coding Practices anchor the process. When boundaries, validation layers, and zero-trust assumptions are defined early, AI suggestions stay inside safe limits.

What Prompt Patterns Work Best for Modular Architecture?

The strongest prompts force structure before creativity. In our internal tests, prompts that require strict comparisons and schema validation reduced unclear outputs by roughly 25 percent. Using advanced workflows that segment tasks by objective helps maintain modularity while AI explores multiple architecture options.

We ask for two or three architecture options, each scored against cost, latency, resilience, and operational load. That framing changes the output immediately. Instead of vague ideas, we get trade-offs. Instead of marketing language, we get constraints.
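Once a model returns scores on fixed dimensions, the comparison itself can be kept mechanical. The following is a minimal sketch of a weighted decision matrix. The option names, scores, and weights are invented for illustration, and the 1-to-5 scale (higher is better on every dimension) is an assumption, not something the prompts above prescribe.

```python
DIMENSIONS = ("cost", "latency", "resilience", "operational_load")


def rank_options(options, weights):
    """Rank options by weighted score; each score is 1 (poor) to 5 (strong)."""
    if set(weights) != set(DIMENSIONS):
        raise ValueError("weights must cover exactly the fixed dimensions")
    ranked = []
    for name, scores in options.items():
        # Reject options that skip a dimension or invent a new one.
        if set(scores) != set(DIMENSIONS):
            raise ValueError(f"{name}: scores must cover exactly the fixed dimensions")
        total = sum(weights[d] * scores[d] for d in DIMENSIONS)
        ranked.append((name, total))
    # Highest total first; ties broken alphabetically for stable output.
    ranked.sort(key=lambda item: (-item[1], item[0]))
    return ranked


# Hypothetical scores as they might come back from a structured prompt.
OPTIONS = {
    "modular-monolith": {"cost": 5, "latency": 4, "resilience": 2, "operational_load": 5},
    "microservices": {"cost": 2, "latency": 3, "resilience": 5, "operational_load": 2},
    "event-driven": {"cost": 3, "latency": 3, "resilience": 4, "operational_load": 3},
}
WEIGHTS = {"cost": 2, "latency": 3, "resilience": 5, "operational_load": 1}

if __name__ == "__main__":
    for name, total in rank_options(OPTIONS, WEIGHTS):
        print(f"{name}: {total}")
```

The weights encode the team's priorities, so they should be set by the architects before the model's scores are seen, not adjusted afterward to favor a preferred option.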

Effective prompt patterns usually include:

- Clear comparison tables with fixed dimensions

- Explicit schema contracts (such as protobuf definitions)

- A required explanation of failure recovery paths

- A short verification step before refinement

We separate the workflow into two phases. First, strict contracts. Second, controlled exploration. That order matters.

Before adopting any design, we validate it against Secure Coding Practices, log architecture decision records, and run a focused verification prompt to check invariants. Only after that do we refine.
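The "strict contracts first" phase can be enforced in code by refusing any model response that does not match the agreed shape. The sketch below uses a plain Python dictionary as a stand-in contract. The field names are hypothetical, and a team that already defines protobuf schemas would validate against those instead.

```python
import json

# Hypothetical contract for one proposed service: field name -> required type.
CONTRACT = {
    "service": str,
    "owns_data": list,
    "sync_dependencies": list,
    "failure_recovery": str,
}


def validate_proposal(raw):
    """Parse a model response and return (proposal_or_None, errors)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value must be an object"]

    errors = []
    for field, expected in CONTRACT.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif not isinstance(data[field], expected):
            errors.append(f"{field} must be {expected.__name__}")
    # Unknown fields are rejected so the model cannot smuggle in unreviewed structure.
    for field in sorted(data.keys() - CONTRACT.keys()):
        errors.append(f"unexpected field: {field}")
    recovery = data.get("failure_recovery")
    if isinstance(recovery, str) and not recovery.strip():
        errors.append("failure_recovery must not be empty")
    return (data if not errors else None), errors
```

A rejected response goes back to the model with the error list attached, which keeps the refinement loop focused on the contract rather than on free-form prose.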

When structured this way, modular boundaries stay clean and late-stage refactors become far less common.

Are Agentic Architectures the Future of 2026 Systems?

Agentic workflow architectures map microservices to focused AI subagents. Rather than one monolith handling CRM logic, authentication, and reporting, teams split each bounded context into its own service and agent workflow. That separation improves reasoning, testing, and failure isolation.

Several forces are driving this shift:

- AI-assisted dependency mapping improves modular reasoning

- Container orchestration with Kubernetes enables flexible scaling

- Infrastructure-as-code generation simplifies Terraform management

- Observability tooling surfaces latency and failure anomalies early

- Performance benchmarking detects bottlenecks before peak traffic

Insights from IBM:

“AI Agents are revolutionizing the way software development teams operate… they can increase productivity, reduce the likelihood of errors, and allow team members to focus on more complex and creative tasks.”

A comparison illustrates the structural difference:

| Criteria | Monolith | Fine-Grained Microservices |
| --- | --- | --- |
| AI Mapping Precision | Low | High |
| Deployment Complexity | Lower | Higher |
| Flexibility | Limited | High |
| Failure Isolation | Weak | Strong |

Microservices do introduce operational overhead. More services mean more coordination. We have seen this firsthand in CRM architecture projects where auth failures once cascaded silently. With agent-mapped services and real-time evaluation, ripple effects became visible within minutes.
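The cascading auth failure described above is easier to reason about when the ripple effect is computed rather than guessed. Here is a minimal sketch that walks a dependency map backwards to list every service that would be affected if one service failed. The `DEPS` map and service names are hypothetical.

```python
from collections import deque


def blast_radius(deps, failed):
    """Return services that depend, directly or transitively, on `failed`."""
    # Invert the map: callee -> set of direct callers.
    callers = {}
    for service, targets in deps.items():
        for target in targets:
            callers.setdefault(target, set()).add(service)

    affected = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller != failed and caller not in affected:
                affected.add(caller)
                queue.append(caller)
    return affected


# Hypothetical CRM system: service -> services it calls synchronously.
DEPS = {
    "crm-api": ["auth"],
    "reporting": ["crm-api"],
    "billing": ["auth"],
    "notifications": [],
    "auth": [],
}

if __name__ == "__main__":
    print(sorted(blast_radius(DEPS, "auth")))
```

In this sample map, a failure in `auth` reaches `reporting` even though `reporting` never calls `auth` directly, which is exactly the kind of silent cascade that stays hidden without an explicit map.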

How Should Architects Integrate AI Without Losing Control?

Architects should treat AI as a decision amplifier, not a replacement for judgment. Control comes from governance gates, strict contracts, and steady validation checkpoints. Governance is not paperwork. It protects architectural integrity over time.

In our secure development bootcamps, we show teams how quickly design sessions drift without structure. We integrate AI using a simple checklist:

- Define protobuf schema contracts before any generation begins

- Maintain metadata-rich repositories that act as living reference manuals

- Separate exploration prompts from execution prompts

- Log architecture decision records for traceability

That foundation keeps experimentation contained.
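Logging decision records is simple enough to automate. The sketch below renders a record in the commonly used Title / Status / Context / Decision / Consequences layout. The `DecisionRecord` class, the `ai_assisted` field, and the file naming scheme are illustrative assumptions rather than a prescribed format.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DecisionRecord:
    number: int
    title: str
    status: str  # e.g. "proposed", "accepted", "superseded"
    context: str
    decision: str
    consequences: list = field(default_factory=list)
    decided_on: date = field(default_factory=date.today)
    ai_assisted: bool = False  # hypothetical flag: were the options model-generated?

    def filename(self):
        """Build a sortable file name such as 0007-split-auth-from-crm.md."""
        slug = "-".join(self.title.lower().split())
        return f"{self.number:04d}-{slug}.md"

    def render(self):
        """Return the record as plain text suitable for committing to the repo."""
        lines = [
            f"# {self.number}. {self.title}",
            "",
            f"Date: {self.decided_on.isoformat()}",
            f"Status: {self.status}",
            f"AI-assisted: {'yes' if self.ai_assisted else 'no'}",
            "",
            "## Context",
            self.context,
            "",
            "## Decision",
            self.decision,
            "",
            "## Consequences",
        ]
        lines += [f"- {item}" for item in self.consequences]
        return "\n".join(lines) + "\n"
```

Recording whether options were model-generated keeps the traceability trail honest: a later reviewer can see which decisions started from an AI proposal and who accepted them.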

From there, operational discipline takes over:

- Apply Secure Coding Practices starting with the first design iteration

- Enforce explicit security validation steps

- Run architecture drift detection before each release

- Integrate CI/CD pipelines with resilience testing
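Drift detection before a release can be as small as a set comparison between the dependencies the architecture allows and the ones actually observed. The sketch below assumes the observed edges are collected elsewhere (for example from traces or import analysis). The `DECLARED` set and service names are hypothetical.

```python
# Hypothetical declared boundaries: (caller, callee) pairs the architecture allows.
DECLARED = {
    ("gateway", "auth"),
    ("gateway", "crm"),
    ("crm", "auth"),
    ("reporting", "crm"),
}


def detect_drift(declared, observed):
    """Compare allowed dependency edges with the edges actually observed."""
    violations = sorted(observed - declared)  # edges nobody approved
    stale = sorted(declared - observed)       # approved edges that are no longer used
    return violations, stale


def release_gate(declared, observed):
    """Return a process exit code: 0 to pass, 1 to block the release."""
    violations, stale = detect_drift(declared, observed)
    for caller, callee in violations:
        print(f"DRIFT: {caller} -> {callee} is not in the declared architecture")
    for caller, callee in stale:
        print(f"NOTE: {caller} -> {callee} is declared but unused")
    return 1 if violations else 0
```

Only undeclared edges block the release in this sketch. Stale edges are reported as notes, on the assumption that removing an allowance is a cleanup task rather than an emergency.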

As highlighted by Google Cloud:

“AI Agents … understand intent, collaborate, and execute complex workflows… enabling SI Partners to offer these transformative solutions to enterprise clients.”

We allow AI to explore multi-cloud, hybrid cloud, or edge patterns, but validation always returns to human review. Business context, compliance limits, and long-term maintainability still require ownership.

How Does AI Strengthen Security Architecture in Complex Systems?


AI strengthens security architecture when it is used early, not as a final scan before release. Most security failures start in design, not syntax. When we apply AI during architecture planning, it can model threat paths, test trust boundaries, and surface weak assumptions before code hardens them.

In our secure development training, we show teams how AI-driven threat modeling changes the discussion. Instead of guessing where risk might live, we simulate privilege escalation, API gateway flaws, and message queue failures under stress. That early visibility prevents expensive rewrites.

A practical security-first workflow often includes:

- Defining Secure Coding Practices as architectural constraints from day one

- Running structured threat modeling prompts against each service boundary

- Validating schema contracts for injection and serialization risks

- Simulating authentication downtime across dependent services

After that, verification tools stress-test resilience patterns and circuit breakers under simulated attack scenarios. The goal is containment by design.
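For readers unfamiliar with the circuit breaker pattern mentioned above, here is a minimal sketch of the usual closed / open / half-open state machine. It is a teaching example under simplifying assumptions (single-threaded, one probe call in half-open state), not a production implementation. The class and parameter names are illustrative.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock  # injectable so tests can simulate elapsed time
        self._failures = 0
        self._opened_at = None

    @property
    def state(self):
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_timeout:
            return "half-open"  # timeout elapsed: allow one probe call
        return "open"

    def call(self, func, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("dependency unavailable; failing fast")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            # Open on reaching the threshold, or re-open after a failed probe.
            if self._failures >= self.failure_threshold or self._opened_at is not None:
                self._opened_at = self._clock()
            raise
        # Any success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Wrapping calls to an authentication service in a breaker like this is one way to simulate and contain the authentication downtime scenario from the workflow: dependents fail fast instead of piling up blocked requests.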

When security becomes the first architectural lens, service boundaries get cleaner and emergency refactors become rare. In complex, multi-cloud systems, proactive design is the only approach that scales.

FAQ

How does AI-driven architecture design reduce technical debt early?

AI-driven architecture design reduces technical debt by evaluating structural decisions before implementation. We use AI trade-off analysis and real-time architecture evaluation to compare architectural patterns against explicit scalability, performance, and maintainability criteria.

Architecture drift detection identifies boundary violations and tight coupling early, before they spread. This structured approach keeps modular blueprints intact and supports sustainable intelligence-driven development.

Can software architecture AI tools generate reliable complex system diagrams?

Software architecture AI tools can generate reliable system diagrams when guided by precise inputs. We apply architecture exploration prompts and enforce strict schemas that constrain outputs to validated components, which limits hallucinated elements.

A separate verification pass then checks consistency between the diagrams and the defined requirements. Used this way, the tools support microservice mapping, service-layer option comparison, and multi-cloud design with traceable assumptions.

What role do LLM architecture patterns play in AI-first architectures?

LLM architecture patterns define how language models interact with services, data layers, and orchestration logic inside AI-first architectures.

Through structured prompt engineering and controlled agentic workflows, we establish predictable execution flows. Modular blueprints and dependency injection isolate responsibilities. This approach reduces unintended coupling and improves system clarity and governance.

How can we validate AI trade-off analysis for data storage choices?

We validate AI trade-off analysis by testing recommendations against measurable benchmarks. When evaluating an AI-generated Postgres vs DynamoDB comparison, we measure throughput, latency, cost, and failure recovery behavior.

Data storage prompts must include workload characteristics and scaling assumptions. Performance benchmarking and real-time architecture evaluation then confirm that the recommendation fits operational constraints.
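Checking a recommendation against a benchmark can be reduced to comparing a measured percentile with the figure the model claimed. The sketch below assumes latency samples have already been collected from a load test against the candidate store. The function names, the nearest-rank percentile method, and the 10 percent tolerance are illustrative choices.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile for an integer pct in 1..100."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def check_latency_claim(samples_ms, claimed_p95_ms, tolerance=0.10):
    """Return (holds, measured_p95): does the measured p95 stay within tolerance of the claim?"""
    measured = percentile(samples_ms, 95)
    holds = measured <= claimed_p95_ms * (1 + tolerance)
    return holds, measured


if __name__ == "__main__":
    # Hypothetical samples; real ones would come from the load test.
    samples = [12, 14, 15, 15, 16, 18, 21, 25, 40, 95]
    holds, measured = check_latency_claim(samples, claimed_p95_ms=50)
    print(f"measured p95 = {measured} ms, claim holds: {holds}")
```

The same pattern applies to throughput and cost: state the claim as a number, measure it under the workload described in the prompt, and reject the recommendation when the gap exceeds the agreed tolerance.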

How do we secure event-driven AI systems in multi-cloud environments?

Securing event-driven AI systems in multi-cloud environments requires explicit controls at every layer. We apply security architecture practices that include zero-trust models and structured threat modeling.

API gateway design enforces access policies, while infrastructure as code and CI/CD pipelines maintain configuration consistency. Resilience patterns and observability integration provide continuous monitoring and incident response visibility.

Using AI for Complex Software Architecture Design Responsibly

Using AI for complex software architecture design works best when structured prompts, modular blueprints, and Secure Coding Practices guide every decision. AI helps generate options and test scaling strategies, but architects make the final calls. Strong contracts and governance keep speed from turning into risk.

Explore how intelligence-driven architecture can transform your next system design.

References

- https://www.ibm.com/think/architectures/patterns/genai-augmented-software-development

- https://cloud.google.com/blog/topics/partners/building-scalable-ai-agents-design-patterns-with-agent-engine-on-google-cloud