Advanced Workflows and Strategies produce scalable, compliant, production-ready automation by combining agentic systems, AI orchestration, and secure coding from the start. Teams that treat automation as core infrastructure outperform those chasing short-term hacks.

Across Reddit threads, engineering forums, and enterprise reports, the pattern repeats. PwC estimates AI could add more than $15 trillion to the global economy by 2030, yet only structured systems capture lasting value.

In practice, strong automations begin with secure foundations, then expand through multi-agent design, workflow scoring, and constraint testing under real conditions. Keep reading to see how to build them.

Quick Wins – Building Secure, Scalable AI Workflows

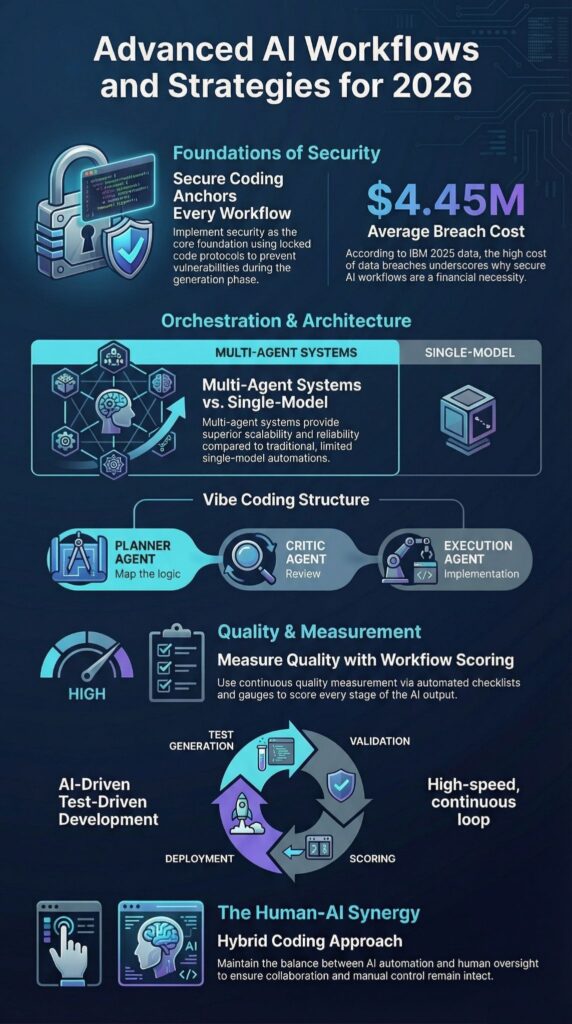

- Secure Coding Practices must anchor every advanced workflow before orchestration layers expand.

- Multi-agent systems outperform single-model automations in scalability, compliance, and reliability.

- Workflow redesign, failure analysis, and intelligent monitoring determine long-term success.

How to Manage Large-Scale Projects with Vibe Coding

Large-scale AI projects succeed when vibe coding runs inside modular, agent-driven workflows with strict security and monitoring. Without structure, it drifts. With structure, it scales.

We treat vibe coding as a coordination layer, not improvisation. Planner agents define system architecture and dependencies. Critic agents review logic, surface gaps, and pressure-test assumptions.

Execution agents build and refine modules. The structure mirrors multi-agent systems used in enterprise DataOps environments, where each role has clear boundaries and measurable outputs.
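
The role split above can be sketched in a few lines. This is a minimal illustration, not a production framework: the `plan`, `execute`, and `critique` functions are hypothetical stand-ins for model-backed agents, and the task names are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    depends_on: list[str] = field(default_factory=list)


@dataclass
class Review:
    task: str
    approved: bool
    notes: str


def plan(objective: str) -> list[Task]:
    """Planner role: break an objective into ordered, scoped tasks (stubbed)."""
    return [
        Task("schema"),
        Task("api", depends_on=["schema"]),
        Task("tests", depends_on=["api"]),
    ]


def execute(task: Task) -> str:
    """Execution role: produce an artifact for one task (stubbed as a string)."""
    return f"# module for {task.name}\n"


def critique(task: Task, artifact: str, done: set[str]) -> Review:
    """Critic role: reject work with unmet dependencies or an empty artifact."""
    missing = [dep for dep in task.depends_on if dep not in done]
    if missing:
        return Review(task.name, False, f"missing dependencies: {missing}")
    if not artifact.strip():
        return Review(task.name, False, "empty artifact")
    return Review(task.name, True, "ok")


def run(objective: str) -> list[Review]:
    """Run plan -> execute -> critique, stopping at the first rejection."""
    done: set[str] = set()
    reviews: list[Review] = []
    for task in plan(objective):
        review = critique(task, execute(task), done)
        reviews.append(review)
        if not review.approved:
            break  # do not build on rejected work
        done.add(task.name)
    return reviews
```

Each role has one function and one return type, which is what makes the output of every stage measurable and the boundaries easy to audit.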

Engineers discussing AI stack design for 2026 often repeat the same point: “no babysitting” only works when workflows are scored, monitored, and tied to compliance automation. Autonomy without oversight fails quickly.

In our deployments, large-scale workflow automation includes:

- End-to-end mapping before code generation

- Failure analysis checkpoints at every sprint

- Intelligent monitoring across staging and production environments

Secure Coding Practices start on day one. Input validation, controlled API boundaries, and audit logging reduce risk before it compounds. Without these controls, vibe coding creates technical debt. With them, it supports durable automation.
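
As a rough sketch of what input validation and audit logging can look like at an API boundary, consider the following. The allowed actions, the module-name rule, and the record fields are assumptions made for illustration; a real system would take them from its own policy.

```python
import json
import logging
import re
from datetime import datetime, timezone

audit_log = logging.getLogger("audit")

# Hypothetical policy: module names are short, lowercase identifiers.
MODULE_NAME = re.compile(r"[a-z][a-z0-9_]{0,63}")
ALLOWED_ACTIONS = {"generate", "refactor", "test"}


def validate_request(payload: dict) -> dict:
    """Return only the expected fields, rejecting anything malformed."""
    action = payload.get("action")
    module = payload.get("module")
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    if not isinstance(module, str) or not MODULE_NAME.fullmatch(module):
        raise ValueError(f"invalid module name: {module!r}")
    return {"action": action, "module": module}


def audit(event: str, actor: str, detail: dict) -> str:
    """Log one structured audit record and return it as a JSON string."""
    record = json.dumps(
        {
            "ts": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "actor": actor,
            "detail": detail,
        },
        sort_keys=True,
    )
    audit_log.info(record)
    return record


def handle(payload: dict, actor: str) -> dict:
    """Validate a request and record the outcome either way."""
    try:
        request = validate_request(payload)
    except ValueError as exc:
        audit("request_rejected", actor, {"reason": str(exc)})
        raise
    audit("request_accepted", actor, request)
    return request
```

Rejections are logged as well as acceptances, so the audit trail shows what was attempted, not only what succeeded.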

Moving from experiments to enterprise systems demands architectural discipline.

Using AI for Complex Software Architecture Design

AI improves complex architecture design when multi-agent systems test outputs against defined constraints and security requirements. Without guardrails, drafts drift. With review layers, they hold.

We use generative AI as a drafting engine, not a decision-maker. Proposed architectures move through prompt chains, retrieval-augmented generation (RAG) pipelines, and critic agents before approval. Governance frameworks tie technical choices to compliance rules and business priorities, so design decisions are documented and defensible.

In practice, architecture refinement includes:

- Retrieval-augmented generation using internal documentation

- Critic agents enforcing dependency and version standards

- AI/ML integration tests that probe edge cases
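
One way a critic check for dependency and version standards might look is shown below. It assumes a simple policy (every dependency pinned with `==`, plus an optional banned list) and handles only plain `name==version` lines, not the full requirements-file grammar.

```python
import re

# Assumed policy: every dependency is pinned to an exact numeric version.
PINNED = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)==(\d+(?:\.\d+)*)$")


def check_pins(requirements: str, banned: frozenset[str] = frozenset()) -> list[str]:
    """Return one finding per line that violates the pinning policy."""
    findings = []
    for raw in requirements.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        match = PINNED.match(line)
        if match is None:
            findings.append(f"not pinned to an exact version: {line}")
        elif match.group(1).lower() in banned:
            findings.append(f"banned package: {match.group(1)}")
    return findings
```

An empty list means the proposal passes this check; any finding can be returned to the drafting agent as a concrete, fixable objection.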

Below is a comparison of common architecture automation approaches:

| Approach | Strength | Risk | Ideal Use |
| --- | --- | --- | --- |
| Single LLM Draft | Speed | Hallucinations | Prototyping |
| RAG Implementation | Context precision | Index maintenance | Knowledge-heavy systems |
| Multi-Agent Validation | High reliability | Operational overhead | Enterprise agents |
| Critic + Scoring Loops | Measurable quality | Longer build time | Regulated environments |

Secure Coding Practices guide every automated recommendation. Teams review permissions, validate dependencies, and secure deployment pipelines before release. Architecture is strategic. It shapes how systems behave under pressure.

How to Integrate Vibe Coding into an Existing Team

Integrating vibe coding into an existing team works when automation supports human oversight instead of trying to replace it. Teams push back when tools force sudden workflow redesign. They adopt faster when orchestration reduces burnout from repetitive tasks such as refactoring, test generation, and documentation.

Successful integration typically follows these steps:

- Define tactical automations that deliver quick, visible wins

- Introduce workflow builder tools in controlled phases

- Implement benchmark environments to measure output quality and speed
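
A benchmark environment can start very small. The sketch below assumes output quality can be measured as exact-match accuracy on a fixed case set, which is a simplification; the `benchmark` helper and its result keys are hypothetical names.

```python
import time
from typing import Callable


def benchmark(fn: Callable[[str], str], cases: list[tuple[str, str]]) -> dict:
    """Measure exact-match accuracy and average wall-clock time per case."""
    if not cases:
        raise ValueError("benchmark needs at least one case")
    correct = 0
    started = time.perf_counter()
    for prompt, expected in cases:
        if fn(prompt) == expected:
            correct += 1
    elapsed = time.perf_counter() - started
    return {
        "accuracy": correct / len(cases),
        "seconds_per_case": elapsed / len(cases),
    }
```

Running the same case set against the manual baseline and the automated workflow gives the team a like-for-like comparison before wider rollout.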

Online discussions about no-code and low-code pipelines often show skepticism toward overhyped platforms. That caution is useful. Trust grows when cross-platform connectivity works and workflow improvements are measurable, not promised.

Secure Coding Practices anchor onboarding. Engineers review guardrails. API roles are clearly defined. Compliance automations are transparent. That clarity reduces anxiety around autonomous systems and keeps hybrid coding models stable.

In a recent analysis, Google notes:

“Our experience… the development and deployment ecosystem… has significant influence on its security posture, so we consider how to ensure that the developer ecosystem encourages secure design and prevents vulnerabilities and errors.” – Google

When structured well, agentic AI engineering strengthens collaboration and preserves accountability.

What Are Advanced AI Refinement Techniques

Advanced AI refinement techniques combine RAG pipelines, critic agents, workflow scoring, and structured validation loops. They turn raw model output into something you can trust in production.

Refinement starts with controlled generation. Planner agents propose structured solutions based on defined inputs and constraints. Critic agents review logic, check assumptions, and flag inconsistencies. Scoring modules then rank outputs against measurable criteria before anything moves to execution. Weak responses stop early. Strong ones advance.
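
The scoring step can be illustrated with a weighted gate. The criteria, weights, and threshold below are assumptions chosen for the example; real values would come from the team's own quality targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    tests_passed: float  # fraction of tests passing, 0..1
    lint_clean: float    # fraction of files without lint findings, 0..1
    critic_score: float  # critic agent rating, normalized to 0..1


# Hypothetical weights and threshold.
WEIGHTS = {"tests_passed": 0.5, "lint_clean": 0.2, "critic_score": 0.3}
THRESHOLD = 0.8


def score(candidate: Candidate) -> float:
    """Weighted sum of the measurable criteria."""
    return sum(getattr(candidate, key) * weight for key, weight in WEIGHTS.items())


def gate(candidates: list[Candidate]) -> list[Candidate]:
    """Drop candidates below the threshold and rank the rest, best first."""
    passing = [c for c in candidates if score(c) >= THRESHOLD]
    return sorted(passing, key=score, reverse=True)
```

Anything filtered out by `gate` never reaches execution, which is the "weak responses stop early" behavior in code form.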

In multi-tool chaining environments, refinement includes:

- Prompt engineering chains with explicit constraints and fallback logic

- Sentiment scraping to detect tone and quality signals

- Lead or confidence scoring models to validate outputs before deployment

Refinement also accounts for real-world limits. Edge cases, malformed inputs, and policy conflicts are tested intentionally rather than discovered in production.

In compliance automation and marketing workflows, structured refinement reduces false positives and inconsistent outputs. Systems without critic layers tend to drift over time. With scoring loops in place, quality stabilizes and becomes measurable.

Refinement is not cosmetic. It forms the reliability layer that separates experiments from operational systems.

How to Maintain High Code Quality with AI

High code quality with AI depends on Secure Coding Practices, automated testing, and continuous workflow scoring. AI can draft quickly, but speed without validation creates risk. Generated code must pass the same checks as human-written code, sometimes stricter ones.

Our structured quality framework includes:

- Automated test generation within AI-driven TDD cycles

- Static analysis embedded in agent-based workflows

- Compliance automation for regulated environments

Secure Coding Practices sit at the first layer. We require strict input sanitization, controlled environment variables, dependency validation, and permission reviews before AI-generated code moves toward production. Audit logs track changes. Scoring systems evaluate reliability over time, not just at merge.
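
For the environment-variable control, a minimal sketch might fail fast on missing settings and redact secrets before anything is logged. The variable names and the `_KEY` suffix convention are hypothetical.

```python
import os
from typing import Mapping

# Hypothetical setting names for illustration.
REQUIRED = ("SERVICE_API_URL", "SERVICE_API_KEY")


def load_settings(env: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Read required settings, failing fast if any is missing or empty."""
    missing = [key for key in REQUIRED if not env.get(key)]
    if missing:
        raise RuntimeError(f"missing required settings: {', '.join(missing)}")
    return {key: env[key] for key in REQUIRED}


def redact(settings: Mapping[str, str]) -> dict[str, str]:
    """Mask secret values so settings can be logged safely."""
    return {k: ("***" if k.endswith("_KEY") else v) for k, v in settings.items()}
```

Passing the environment in as a parameter keeps the loader testable without touching the real process environment.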

Insights from IBM indicate:

“Standardize secure coding practices and automate security testing.” – IBM

When teams bypass this foundation, small issues compound. Misconfigured permissions, untested edge cases, and unchecked dependencies surface later, usually under load. When security anchors the workflow, AI shifts from novelty to leverage.

Engineers spend less time patching preventable errors and more time refining architecture and performance.

Quality with AI is not automatic. It is enforced through structure, measurement, and discipline.

Using Vibe Coding for Legacy Code Modernization

Legacy code modernization works best when AI agents operate inside modular transformation nodes and audit-ready pipelines. Large rewrites fail. Controlled, component-level upgrades hold.

We deconstruct legacy systems into isolated units, similar to node-based workflows. Each transformation runs independently, is validated against defined criteria, and is logged for traceability. Multi-agent systems split responsibilities: one handles refactoring, another updates documentation, and another runs regression tests against historical behavior.

Modernization pipelines typically include:

- Automated refactoring suggestions scoped to specific modules

- Regression validation against archived outputs and edge cases

- Structured failure analysis drawn from prior production incidents
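
Regression validation against archived outputs is often done as a characterization (golden-master) test. The sketch below records what a legacy function returns and then checks a refactor against that record. Both discount functions are invented examples, not code from any real system.

```python
from typing import Any, Callable


def record(impl: Callable[..., Any], inputs: list[list]) -> list[dict]:
    """Archive the current behavior of an implementation for given inputs."""
    return [{"args": args, "expected": impl(*args)} for args in inputs]


def find_regressions(new_impl: Callable[..., Any], archive: list[dict]) -> list[dict]:
    """Return every archived case where the new implementation differs."""
    regressions = []
    for case in archive:
        try:
            actual = new_impl(*case["args"])
        except Exception as exc:  # a crash counts as a behavior change
            actual = f"raised {type(exc).__name__}"
        if actual != case["expected"]:
            regressions.append({**case, "actual": actual})
    return regressions


def legacy_discount(total, is_member):
    """Hypothetical legacy code, kept as-is for recording."""
    if is_member == True:
        if total > 100:
            return total * 0.9
        else:
            return total
    else:
        return total


def refactored_discount(total: float, is_member: bool) -> float:
    """Hypothetical refactor that should preserve legacy behavior."""
    return total * 0.9 if is_member and total > 100 else total


# Include the boundary value (100) so the edge case is archived too.
archive = record(legacy_discount, [[50, True], [100, True], [150, True], [150, False]])
```

An empty result from `find_regressions(refactored_discount, archive)` is the signal that the component-level change can proceed to the next stage.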

DataOps processes protect database migrations and API changes. Schema adjustments are versioned. Backward compatibility is tested before rollout. Monitoring systems track anomalies in real time during staged releases.

A hybrid coding model works best. AI accelerates repetitive refactoring and pattern detection. Engineers supervise architectural decisions, security boundaries, and compliance requirements. That balance prevents overreach and protects institutional knowledge.

Modernization succeeds when change is incremental, observable, and reversible, not disruptive or opaque.

How to Build Custom AI Coding Agents

Custom AI coding agents depend on modular design, secure integration layers, and measurable workflow scoring. Without structure, agents overlap and conflict. With defined roles, they perform predictably.

Enterprise builds start with role separation. Planner agents break down objectives into scoped tasks. Execution agents generate or modify code within those limits. Critic agents review logic, enforce standards, and block weak outputs before they move forward. Clear boundaries reduce noise and prevent runaway automation.

A structured build process often includes:

- Defined prompt engineering chains with constraint logic

- API rate limits, access controls, and detailed logging

- Continuous workflow scoring tied to accuracy and stability metrics

Secure Coding Practices begin at the prototype stage. API scopes remain narrow. Keys are encrypted and rotated. Dependencies are reviewed before integration. These controls prevent hidden vulnerabilities from spreading across environments.
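
Narrow scopes and rate limits can be enforced in a thin wrapper around whatever an agent is allowed to call. The sketch below uses a standard token-bucket limiter; the `ScopedClient` class and the scope strings are hypothetical.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TokenBucket:
    """Token-bucket limiter: refills `rate` tokens per second up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class ScopedClient:
    """Wraps agent calls so each one is checked against scopes and rate limits."""

    def __init__(self, agent: str, scopes: set[str], bucket: TokenBucket):
        self.agent = agent
        self.scopes = frozenset(scopes)
        self.bucket = bucket

    def call(self, scope: str, action: Callable[[], T]) -> T:
        if scope not in self.scopes:
            raise PermissionError(f"{self.agent} lacks scope {scope!r}")
        if not self.bucket.allow():
            raise RuntimeError(f"{self.agent} exceeded its rate limit")
        return action()
```

The scope check runs before the rate check, so a denied call never consumes a token. The injectable `clock` makes the limiter deterministic in tests.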

Agents perform best when connected through orchestration frameworks that coordinate tasks across repositories, cloud services, and CI/CD systems. Cross-platform integration matters. Scalable automation depends on it.

Well-designed agents reduce manual supervision without removing accountability. They operate inside guardrails, not outside them.

What Is the Best Workflow for AI-Driven TDD

The best workflow for AI-driven TDD combines automated test generation, critic validation, workflow scoring, and secure deployment controls. AI can draft tests quickly, but structure determines whether those tests protect the system or simply inflate coverage numbers.

In a disciplined AI-driven TDD cycle, the sequence looks like this:

- Generate test cases directly from defined functional requirements

- Validate coverage thresholds and edge-case inclusion automatically

- Refactor code through controlled execution agents

- Score output quality and stability before merge approval

This approach reduces repetitive test writing while preserving engineering authority. Developers review failures, approve refactors, and define acceptance criteria. AI handles iteration speed, not final judgment.

Workflow scoring ties test quality to compliance requirements, performance baselines, and regression history. Coverage alone is not enough. Tests must detect meaningful failures and enforce dependency constraints. Without scoring, TDD becomes surface-level. With measurable thresholds, it supports long-term reliability.
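
A merge gate along these lines can be sketched as a function that returns blockers. The requirement IDs, the `CaseResult` record, and the 85% default threshold are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseResult:
    name: str
    requirement: str  # ID of the functional requirement this test covers
    passed: bool
    edge_case: bool = False


def merge_gate(
    requirements: list[str],
    results: list[CaseResult],
    coverage: float,
    min_coverage: float = 0.85,
) -> list[str]:
    """Return the reasons a merge is blocked; an empty list means approved."""
    blockers = []
    for req in requirements:
        linked = [r for r in results if r.requirement == req]
        if not linked:
            blockers.append(f"{req}: no tests")
        elif not any(r.edge_case for r in linked):
            blockers.append(f"{req}: no edge-case test")
    blockers += [f"{r.name}: failing" for r in results if not r.passed]
    if coverage < min_coverage:
        blockers.append(f"coverage {coverage:.0%} below {min_coverage:.0%}")
    return blockers
```

Because the gate checks traceability and edge cases as well as coverage, a suite that merely inflates coverage numbers still fails it.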

Secure Coding Practices apply at every step. Input validation, dependency checks, and protected pipelines ensure that faster cycles do not introduce hidden risk.

How to Automate Tedious Refactoring Tasks with AI

AI handles refactoring well when guided by failure analysis and structured scoring loops. Left unchecked, it rewrites blindly. Constrained properly, it improves clarity without breaking behavior.

Refactoring automation typically includes:

- Dependency mapping through retrieval-augmented pipelines tied to internal documentation

- Multi-agent review cycles where critic agents flag logic drift

- Regression validation against historical benchmarks and production outputs
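
Dependency mapping does not have to start with retrieval. As a simpler stand-in for the retrieval-augmented approach, the sketch below uses Python's standard `ast` module to find which modules import a given target, so a refactor's blast radius is known before any edit. It only sees static, absolute imports; the module names in the usage are invented.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Return top-level module names imported by a Python source string."""
    found: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped
            found.add(node.module.split(".")[0])
    return found


def dependents(sources: dict[str, str], target: str) -> list[str]:
    """List modules whose source imports `target`, sorted by name."""
    return sorted(
        name for name, source in sources.items() if target in imported_modules(source)
    )
```

For example, `dependents({"billing": "import ledger", "ledger": "import utils"}, "utils")` returns `["ledger"]`, which tells reviewers which modules need regression checks when `utils` changes.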

In marketing systems and finance AI stacks, these automations remove repetitive edits, formatting fixes, and structural cleanups that drain time. The gain is focus. Engineers spend less effort correcting boilerplate and more time reviewing architectural impact.

Still, edge-case handling must be defined in advance. If boundary conditions are vague, automation will miss them.

Content automation pipelines often fail when context retrieval is incomplete. Missing documentation leads to incorrect assumptions. After adding structured prompt chains and critic review layers, reliability improves and regressions decline.

Automation should respect operational limits. Refactoring is not wholesale rewriting. It is controlled transformation that preserves intent while improving structure, readability, and maintainability.

Why a Hybrid Coding Approach Is Often Best

Credits: ExtFox

A hybrid coding approach balances AI acceleration with human judgment, creating systems that scale without losing control. Fully autonomous pipelines look efficient on paper. In practice, strategic platforms still require oversight, especially where compliance, security, and customer impact are involved.

Hybrid workflows combine no-code tools, low-code pipelines, and manual review checkpoints. Each layer serves a purpose. Automation handles repetition and pattern recognition. Engineers focus on architecture, edge cases, and risk boundaries.

Benefits of hybrid systems include:

- Fewer deployment failures caused by unchecked automation

- Stronger compliance automation tied to review processes

- Clearer shared mental models across technical teams

PwC AI forecasts indicate that enterprises pairing automation with governance capture more long-term value than those pursuing autonomy without controls. Balance drives durability.

Secure Coding Practices should anchor that balance. Systems start with strict access controls, validated dependencies, and protected deployment pipelines. From there, agent-driven workflows expand capability, while scoring and monitoring maintain quality over time.

AI works best as a force multiplier. Human judgment keeps it aligned with business and regulatory reality.

FAQ

How do agentic workflows improve process optimization in real teams?

Agentic workflows improve process optimization by giving autonomous agents clearly defined decision rights instead of relying only on static rules.

Unlike basic workflow automation, multi-agent systems coordinate tasks, escalate exceptions, and adapt based on workflow scoring metrics. Teams combine AI orchestration, failure analysis, and intelligent monitoring to cut manual work and keep systems reliable under real-world constraints.

What makes AI orchestration different from basic workflow automation?

Basic workflow automation executes fixed triggers and predefined paths. AI orchestration chains multiple tools across the automation stack and brings AI/ML models, RAG implementations, and critic agents into one structured system.

This approach enables scalable automations that adjust to data changes instead of following rigid sequences. Clear end-to-end mapping and deliberate workflow redesign prevent deployment pitfalls and improve enterprise agent performance.

How can multi-agent systems support scalable automations without chaos?

Multi-agent systems support scalable automations when each agent operates within clearly defined boundaries. Agentic AI engineering assigns responsibilities such as insight generation, compliance automations, and quality control through critic agents.

Workflow scoring frameworks and intelligent monitoring detect drift early. Structured how-to documentation, straightforward optimizations, and consistent edge-case handling keep automation stacks stable as they grow.

What are common deployment pitfalls in advanced workflow transformation?

Common deployment pitfalls include overengineering a 2026 AI stack roadmap before validating tactical automations. Weak process mining, incomplete analysis of past failures, and neglected technical edge cases often cause breakdowns.

Poor benchmark setups and missing end-to-end mapping increase cross-platform connectivity issues. Teams avoid these risks by favoring practical, tested tactics, measurable workflow scoring models, and disciplined workflow transformation planning.

How do hyperautomation strategies support business scaling tactics long term?

Hyperautomation strategies support business scaling by integrating DataOps workflows, marketing workflows, and finance AI stacks into unified systems. Organizations combine RPA, AI/ML integration, and workflow builder tools to reduce manual dependencies.

Continuous process optimization, structured insight generation, and intelligent monitoring enable scalable automations while reducing burnout risk and supporting sustainable growth.

Advanced Workflows and Strategies for 2026 and Beyond

Advanced Workflows and Strategies work when Secure Coding Practices, agentic systems, and orchestration frameworks operate as one disciplined structure. Tools alone do not scale. Workflow design, compliance automation, and monitoring determine durability.

As AI shifts toward orchestration-first models, secure foundations and measurable scoring will define advantage. Advanced workflows are infrastructure, not hype.

References

- https://blog.google/technology/safety-security/tackling-cybersecurity-vulnerabilities-through-secure-by-design/

- https://www.ibm.com/thought-leadership/institute-business-value/report/ai-secure-design-cyber-resilience