The simplest way to manage complex AI-written code is to stop treating the AI as an oracle and start treating it as an intern. You give it small, precise tasks, you review every single line it produces, and you never, ever accept a sprawling function without breaking it down.

This discipline, rooted in secure coding practices, is what keeps technical debt from piling up. The alternative is a codebase that becomes a fragile, incomprehensible mess. Keep reading to learn the exact loop that turns chaotic AI output into clean, maintainable code.

Key Takeaways

- Treat AI code as a first draft requiring mandatory human review and refactoring.

- Break every task into the smallest possible scope before prompting to prevent LLM context overload.

- Implement automated quality gates, like static analysis and security scans, as non-negotiable checkpoints.

The Hidden Cost of Letting AI Write Your Code

The first time an AI handed us a 200-line Python script, it felt impressive. It ran, the output looked right, but only under perfect conditions. The moment we stepped outside that narrow path, everything broke.

Reading through it was like untangling yarn after a cat attack. Logic circled back on itself, variables had names like temp_var_1, and the error-handling style changed halfway down the file.

We spent longer understanding the code than we would have writing it ourselves, securely and clearly. That’s the real trade: fast generation, slow debugging. It gets worse if you skip the discipline of reviewing and refining AI-generated code before it reaches production.

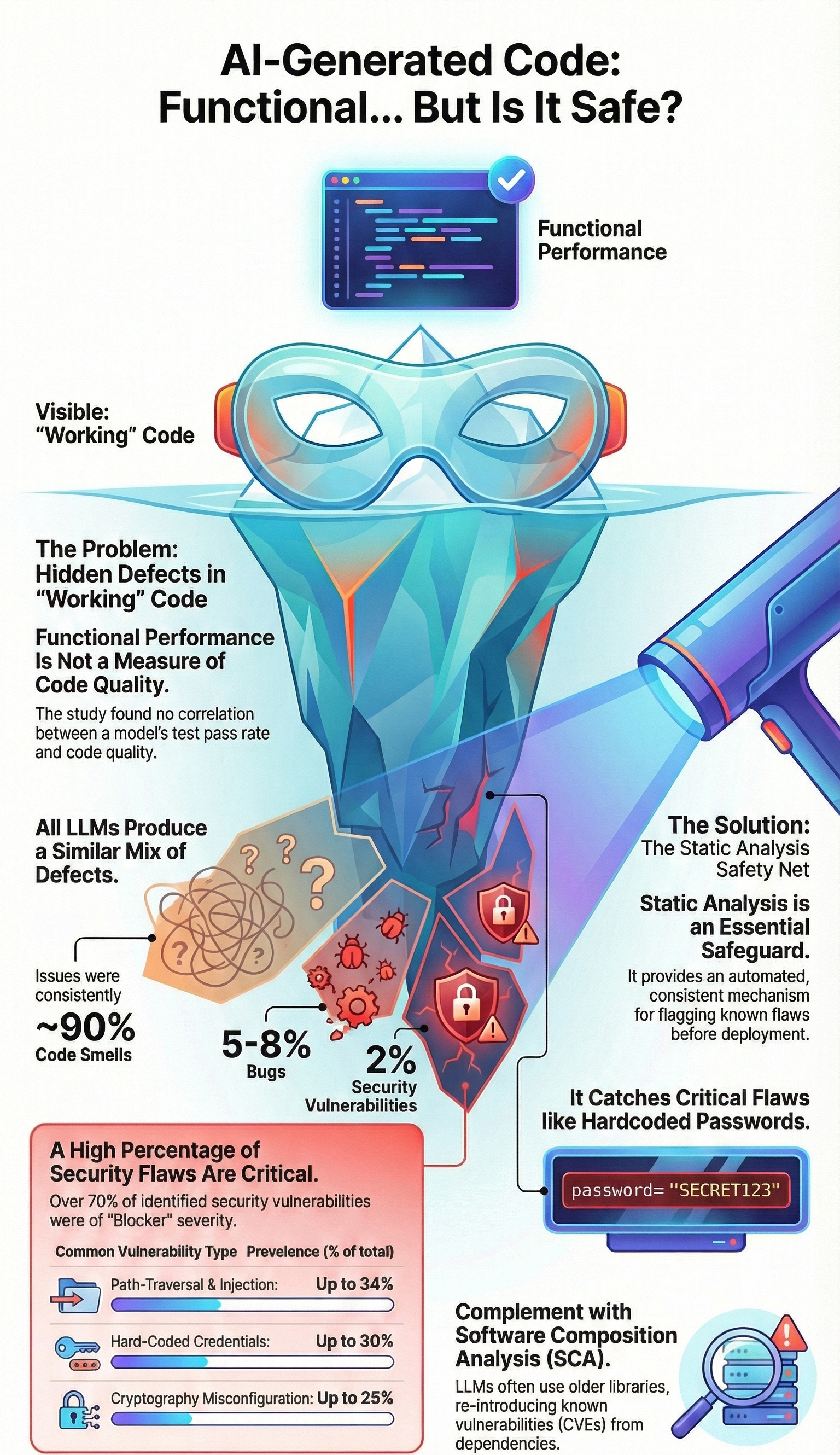

In our secure development training, we see this constantly. AI can produce functional code, but it doesn’t understand security, maintainability, or creeping complexity. That’s our job. So we treat AI-generated code as guilty until proven clean: we review it, refactor it, and run security checks, turning a risky shortcut into a controlled part of our workflow.

The Plan-Act Rhythm

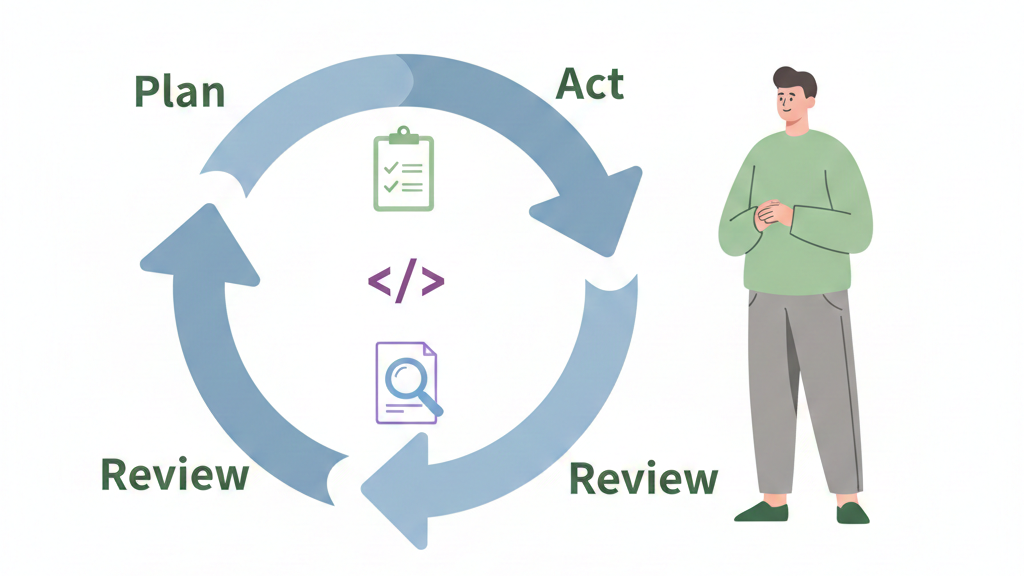

There’s a point in every secure coding journey where people realize they don’t need more tools, they need a ritual. That’s why we train people to expect constant oversight, what we call the AI babysitting problem, instead of assuming generated code understands context, security, or long-term maintenance.

Our students see this all the time in bootcamp: the ones who grow fastest aren’t the ones with the fanciest IDE, they’re the ones who treat their process like a loop they never skip. For us, that loop is Plan–Act–Review–Repeat, and it feels almost boring on purpose. That’s the charm.

| Phase | Human Responsibility | AI Role | Risk Reduced |
| --- | --- | --- | --- |
| Plan | Define smallest possible task and constraints | None | Scope creep, context overload |
| Act | Provide precise prompt with inputs and rules | Generate first draft | Over-engineering |
| Review | Line-by-line human review and refactor | Optional revision on request | Hidden bugs, insecure patterns |
| Repeat | Adjust prompt or split task further | Regenerate focused output | Accumulated technical debt |

When we plan, we refuse to ask the AI for a giant “user authentication module.” That’s how you get a tangled ball of logic no one wants to touch six months later. Instead, we zoom in:

- design validate_password_strength

- define create_user_session

- write the test for each first

Students in our secure development bootcamp learn to treat each function as a mini-mission. Small scope gives the AI a fair shot at getting it right, and it gives us a clean, reviewable unit. We see better code, fewer surprises, and fewer late-night “why does this break login?” moments.
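
To make the "mini-mission" idea concrete, here is a minimal sketch of one such unit, with its test written alongside it. The function name validate_password_strength comes from the list above; the specific rules, the 12-character minimum, and the return shape (a list of violations) are assumptions chosen for illustration, not a recommended password policy.

```python
import re

MIN_LENGTH = 12  # assumed policy value, chosen only for this example


def validate_password_strength(password: str) -> list[str]:
    """Return a list of rule violations; an empty list means the password passes."""
    problems = []
    if len(password) < MIN_LENGTH:
        problems.append(f"must be at least {MIN_LENGTH} characters")
    if not re.search(r"[a-z]", password):
        problems.append("must contain a lowercase letter")
    if not re.search(r"[A-Z]", password):
        problems.append("must contain an uppercase letter")
    if not re.search(r"\d", password):
        problems.append("must contain a digit")
    return problems


def test_validate_password_strength():
    # Written before the implementation: it pins down the expected behavior.
    assert validate_password_strength("Correct-Horse-42") == []
    assert "must contain a digit" in validate_password_strength("NoDigitsHereAtAll")
    assert validate_password_strength("Ab1") == ["must be at least 12 characters"]


test_validate_password_strength()
```

A unit this small fits on one screen, so a reviewer can check every branch against the test in a couple of minutes.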

Act-Review-Repeat in Practice

Once the plan is sharp, acting becomes almost mechanical, and that’s where we like it. We feed the AI a narrow, well-defined task with everything it needs up front: language, framework, and expected patterns from our secure coding curriculum.

Our instructors often tell learners that knowing how to debug AI-generated code effectively matters more than how fast the first draft appears, and a precise prompt leaves less to debug. In practice, that means:

- define inputs and outputs with no guesswork

- call out error-handling rules

- spell out security rules (like “no raw SQL strings, only parameterized queries”)
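
As an illustration of what the "only parameterized queries" rule looks like in generated code, here is a small sketch using Python's built-in sqlite3 module. The users table, the find_user_by_email helper, and the simple "@" check are hypothetical; the point is the `?` placeholder, which passes the value to the database separately from the SQL text.

```python
import sqlite3


def find_user_by_email(conn: sqlite3.Connection, email: str):
    """Look up one user; returns an (id, email) tuple, or None if no row matches."""
    # Input contract stated in the prompt: a string that looks like an email.
    if not isinstance(email, str) or "@" not in email:
        raise ValueError("email must be a string containing '@'")
    # The ? placeholder binds the value; it is never spliced into the SQL string.
    cursor = conn.execute("SELECT id, email FROM users WHERE email = ?", (email,))
    return cursor.fetchone()


# In-memory database so the example runs without any setup.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT UNIQUE)")
conn.execute("INSERT INTO users (email) VALUES (?)", ("ada@example.com",))

print(find_user_by_email(conn, "ada@example.com"))           # (1, 'ada@example.com')
print(find_user_by_email(conn, "x@example.com' OR '1'='1"))  # None
```

The second call shows why the rule matters: the injection attempt is treated as an ordinary string that matches no row.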

Then comes review, which we treat as sacred. We read every line. In our courses, we train people to hunt for: odd abstractions, leaky security, hardcoded secrets, and those classic AI signatures like over-nested logic or functions doing too much.

When the first draft lands, we assume it’s just that, a draft. We refine prompts, request revisions, sometimes reset the chat if context goes sideways. That steady repetition, Plan–Act–Review–Repeat, is where secure habits get wired into muscle memory.

Breaking the Monolith into Human-Sized Pieces

There’s a pattern we see in almost every cohort: someone asks the AI for help and ends up with a cathedral-sized function when they only need a simple, secure shed.

Our role, especially in a secure development bootcamp, is to teach them what happens next: refactoring becomes the real job. The goal isn’t cleverness; it’s lowering cognitive load so anyone on the team can read the code without squinting.[1]

We usually start here: grab that giant, dense function and hunt for natural seams in the logic. You’ll almost always find:

- a block that validates or sanitizes input

- a block that performs calculations or business rules

- a block that formats or returns the response

| Original Problem | Refactored Component | Purpose |
| --- | --- | --- |
| Large validation block | validate_user_input() | Centralize and secure input checks |
| Mixed business logic | calculate_order_total() | Improve readability and testability |
| Embedded formatting logic | format_api_response() | Separate presentation from logic |
| Repeated utility logic | shared_utils module | Reduce duplication and fix once |

Each block deserves its own clearly named function. Something like calculate_tax(subtotal, region) tells the story much better than a 20-line math chunk hiding inside process_order. In our secure coding exercises, we treat names as the first layer of documentation and the first defense against confusion.
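
A rough sketch of where such a refactor might end up is shown below. The function names follow the table and the paragraph above; the item dictionary shape, the region codes, and the tax rates are made up for the example and should not be read as real values.

```python
from decimal import Decimal

# Hypothetical rates, for illustration only.
TAX_RATES = {"CA": Decimal("0.0725"), "OR": Decimal("0")}


def validate_user_input(items):
    """Reject empty orders and nonsensical line items before any math runs."""
    if not items:
        raise ValueError("order must contain at least one item")
    for item in items:
        if item["quantity"] <= 0 or item["unit_price"] < 0:
            raise ValueError(f"invalid line item: {item!r}")


def calculate_tax(subtotal: Decimal, region: str) -> Decimal:
    """Tax for a subtotal, rounded to cents."""
    if region not in TAX_RATES:
        raise ValueError(f"unknown region: {region}")
    return (subtotal * TAX_RATES[region]).quantize(Decimal("0.01"))


def calculate_order_total(items, region: str) -> Decimal:
    """Business rule: total is the sum of the line items plus regional tax."""
    subtotal = sum((i["unit_price"] * i["quantity"] for i in items), Decimal("0"))
    return subtotal + calculate_tax(subtotal, region)


def format_api_response(total: Decimal) -> dict:
    """Presentation only: no validation or math happens here."""
    return {"status": "ok", "total": str(total)}


def process_order(items, region: str) -> dict:
    """The former monolith, now three named steps."""
    validate_user_input(items)
    return format_api_response(calculate_order_total(items, region))
```

Each helper can now be tested and reviewed on its own, and process_order reads as a summary of the workflow rather than a wall of logic.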

DRY, Consistent, and Clean with AI as a Helper

Once the logic is split, our bootcamp students learn that the next enemy is duplication. AI loves to repeat itself, often with tiny variations that look harmless until a security fix has to be applied in six different places. During reviews, we guide learners to spot those repeated patterns and fold them into:

- a single utility function

- a shared module

- or a reusable service with tight, clear scope

That’s just the DRY principle,[2] but with a security mindset. One safe fix, applied once, is better than hunting the same bug across the codebase. Then we push for consistency. On real projects, we’ve seen AI mix camelCase and snake_case, or swap between log and logger in the same file.
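
Here is a small sketch of that cleanup, assuming two handlers that each carried their own slightly different copy of email normalization. The names normalize_email, register_user, and reset_password are hypothetical. After the refactor there is one shared helper and one logger name, so a fix to the helper reaches every caller.

```python
import logging

logger = logging.getLogger(__name__)  # one name used everywhere, not log in one place and logger in another


def normalize_email(raw: str) -> str:
    """Single shared implementation: a security fix here applies to all callers."""
    email = raw.strip().lower()
    if email.count("@") != 1:
        raise ValueError("invalid email address")
    return email


def register_user(raw_email: str) -> dict:
    email = normalize_email(raw_email)  # previously an inline copy of the logic
    logger.info("registering user")
    return {"action": "register", "email": email}


def reset_password(raw_email: str) -> dict:
    email = normalize_email(raw_email)  # previously a second, slightly different copy
    logger.info("password reset requested")
    return {"action": "reset", "email": email}
```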

Our approach is simple: use linters, run formatters, and when we’re tired, let the AI handle cleanup with prompts like, “Refactor this to PEP 8, rename variables for clarity, and keep behavior identical.” The machine is very good at pattern matching; we just have to point it in the right direction.

The Quality Gate: Your Last Line of Defense

There’s a moment in almost every secure coding exercise where someone says, “It looks fine,” and then the scanner lights up like a warning siren. That’s why our workflow at the bootcamp never ends with, “the AI said so.”

Human review is necessary, but we all get tired sometimes; we skim, we assume. Dense, generated code makes that even worse. So we build quality gates that don’t care how confident we feel.

In our projects, we keep a simple rule: no AI-generated code gets merged until it passes automated checks, especially for security. We lean on:

- linters to enforce style and spot obvious mistakes

- static analysis tools for insecure patterns

- security scanners tuned to our stack

From there, we wire those tools into the CI/CD pipeline, so the system enforces what we preach. Our builds fail if:

- complexity gets too high

- coverage drops below an agreed level

- a security scan flags a real issue
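
To show the idea behind the complexity check, here is a simplified stand-in for a real analysis tool, built on Python's standard ast module. It is a rough estimate, not a full cyclomatic complexity implementation: it counts branching nodes per function (including any nested functions) and reports those over a threshold. The threshold of 10 and the names check_source and main are assumptions for the example.

```python
import ast

MAX_COMPLEXITY = 10  # assumed threshold; teams agree on their own number

# Node types treated as decision points in this rough estimate.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.BoolOp, ast.IfExp)


def complexity(func: ast.AST) -> int:
    """Approximate complexity: 1 plus the number of branching nodes in the function."""
    return 1 + sum(isinstance(node, BRANCH_NODES) for node in ast.walk(func))


def check_source(source: str, limit: int = MAX_COMPLEXITY) -> list[str]:
    """Return one message per function whose estimated complexity exceeds the limit."""
    failures = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            score = complexity(node)
            if score > limit:
                failures.append(f"{node.name}: complexity {score} exceeds {limit}")
    return failures


def main(paths: list[str]) -> int:
    """Exit code for a CI step: 1 if any file has an offending function, else 0."""
    failures = []
    for path in paths:
        with open(path, encoding="utf-8") as handle:
            failures.extend(f"{path}: {msg}" for msg in check_source(handle.read()))
    for message in failures:
        print(message)
    return 1 if failures else 0
```

A pipeline step could call main with the changed files and pass its return value to sys.exit, so the build fails without anyone having to remember to check.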

For sensitive zones (auth, payments, data access), we add one more barrier: a deliberate, line‑by‑line peer review. That’s where experience and generated code meet at the same table.

FAQ

How do teams manage AI-written code without losing control as projects grow?

Managing AI-written code works best in small steps. Generate code incrementally and commit to git often so every change is easy to trace and revert. Require human review of AI-generated code, and add automated quality gates to control risk. This approach limits AI-related technical debt and avoids overloading the model’s context window.

What practical steps reduce complexity when refactoring AI-generated code?

Start by simplifying the most complex AI-generated code first. Break large functions into smaller ones and extract related logic into modules. Rename unclear variables, apply consistent refactoring patterns, and deduplicate repeated code. Together, these steps reduce complexity and leave cleaner output.

How can reviews and testing improve trust in AI-assisted code changes?

Build trust with test-driven development and static analysis. Lint AI-generated code to catch simple issues early. Require peer review of commits that contain AI-generated code before merging. A strong review workflow helps teams discard flawed AI code before it causes larger problems.

How should security be handled when simplifying LLM-generated code?

Run security scans on AI-generated code inside the CI/CD pipeline. Apply defense in depth and least-privilege design. Enforce separation of duties and check access-control logic. Regularly review generated code for known weaknesses, so that simplifying it doesn’t come at the cost of safety or maintainability.

What workflow helps teams refactor AI outputs without slowing delivery?

Use a Plan-Act-Review cycle, supported by automated code transforms and refactoring tools. Limit each AI task to a small scope. Make frequent, context-aware changes. This keeps the cost of quality low and delivery steady and predictable.

From Chaos to Control

AI coding doesn’t replace your thinking, it accelerates the first draft. It handles boilerplate and structure so you can focus on architecture, design, and judgment. Your role is editor: enforce style, tighten logic, and build security in from the first prompt. Start small.

Break one task into steps. Plan, act, review, repeat. The early slowdown pays off in fewer bugs and easier maintenance. The machine is fast; your workflow makes it valuable.

References

- [1] https://martinfowler.com/books/refactoring.html

- [2] https://pragprog.com/tips/you-dont-know-dry/